What Are AI Agents? Definition, Types and Real Examples 2026

Quick Summary: AI agents are autonomous software systems powered by artificial intelligence that can perceive their environment, make decisions, and take actions to achieve specific goals without constant human oversight. Unlike traditional chatbots or automation tools, AI agents use reasoning, planning, memory, and tool integration to handle complex, multi-step tasks across diverse domains—from customer service to scientific research.

Artificial intelligence has moved beyond simple question-and-answer systems. The latest evolution brings us AI agents—software that doesn't just respond to prompts but actively pursues goals, makes decisions, and adapts its approach based on feedback.

But what exactly qualifies as an AI agent? The term gets thrown around a lot these days, often slapped onto products that are really just chatbots with extra steps. Understanding the real capabilities—and limitations—of AI agents matters if you're evaluating them for business use or just trying to make sense of the hype.

Here's the thing though—AI agents represent a fundamental shift in how we interact with intelligent systems. They're not waiting for your next instruction. They're planning several steps ahead, using available tools, and working toward outcomes you've defined.

Defining AI Agents: What Makes Them Different

An AI agent is a software system that autonomously performs tasks by designing workflows with available tools. According to research published on arXiv, these systems combine foundation models with reasoning, planning, memory, and tool use to create a practical interface between natural-language intent and real-world computation.

The key word? Autonomous. These systems can operate with minimal human intervention once given a goal.

AI agents differ from standard AI applications in several fundamental ways. They possess agency—the capacity to make decisions and take actions independently. They demonstrate reasoning capabilities, breaking down complex problems into manageable steps. And they maintain memory, learning from previous interactions to improve future performance.

Core Characteristics That Define AI Agents

Real AI agents share four essential capabilities that separate them from simpler automation tools:

- Perception: They gather information from their environment through various inputs—text, images, sensor data, or system states.

- Reasoning and Planning: They analyze situations, evaluate options, and develop multi-step strategies to achieve goals.

- Action: They execute tasks using available tools, APIs, or interfaces without waiting for human approval at each step.

- Learning and Adaptation: They improve performance over time by adjusting strategies based on outcomes and feedback.

These capabilities work together. An AI agent might perceive that a customer inquiry requires information from three different databases, reason about the most efficient retrieval sequence, execute those queries, and learn which approach worked fastest for similar future requests.
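As a rough illustration of how the four capabilities fit together, here is a minimal Python sketch of the database-retrieval example above. Everything in it is hypothetical: the `RetrievalAgent` class, the source names (`crm`, `billing`, `orders`), the keyword matching, and the latency numbers are invented for illustration and do not describe any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class RetrievalAgent:
    """Toy agent: perceives needed sources, plans an order, acts, learns timings."""

    # Believed latency per source in seconds (hypothetical starting guesses).
    avg_latency: dict = field(
        default_factory=lambda: {"crm": 1.0, "billing": 1.0, "orders": 1.0}
    )
    samples: dict = field(default_factory=dict)

    def perceive(self, inquiry: str) -> list:
        # Perception: decide which sources the inquiry touches (keyword match only).
        keywords = {"crm": "account", "billing": "invoice", "orders": "order"}
        return [src for src, word in keywords.items() if word in inquiry.lower()]

    def plan(self, sources: list) -> list:
        # Reasoning: query the sources believed to be fastest first.
        return sorted(sources, key=lambda s: self.avg_latency[s])

    def act(self, ordered_sources: list, query_fn) -> dict:
        # Action: run each query; query_fn returns (result, elapsed_seconds).
        results = {}
        for src in ordered_sources:
            result, elapsed = query_fn(src)
            results[src] = result
            self.learn(src, elapsed)
        return results

    def learn(self, source: str, elapsed: float) -> None:
        # Learning: keep a running mean of the observed latency per source.
        n = self.samples.get(source, 0) + 1
        self.samples[source] = n
        self.avg_latency[source] += (elapsed - self.avg_latency[source]) / n


def fake_query(source: str):
    # Stand-in for a real database call; the latencies are made up.
    latency = {"crm": 0.3, "billing": 0.9, "orders": 0.1}[source]
    return f"{source}-data", latency


agent = RetrievalAgent()
needed = agent.perceive("Why does my invoice not match my order on this account?")
first_plan = agent.plan(needed)   # no experience yet, so the default order is kept
agent.act(first_plan, fake_query)
second_plan = agent.plan(needed)  # reordered using the latencies just observed
```

After one round of queries the agent reorders its plan so the fastest source comes first, which is the "learn which approach worked fastest" step in miniature.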

How AI Agents Work: Architecture and Components

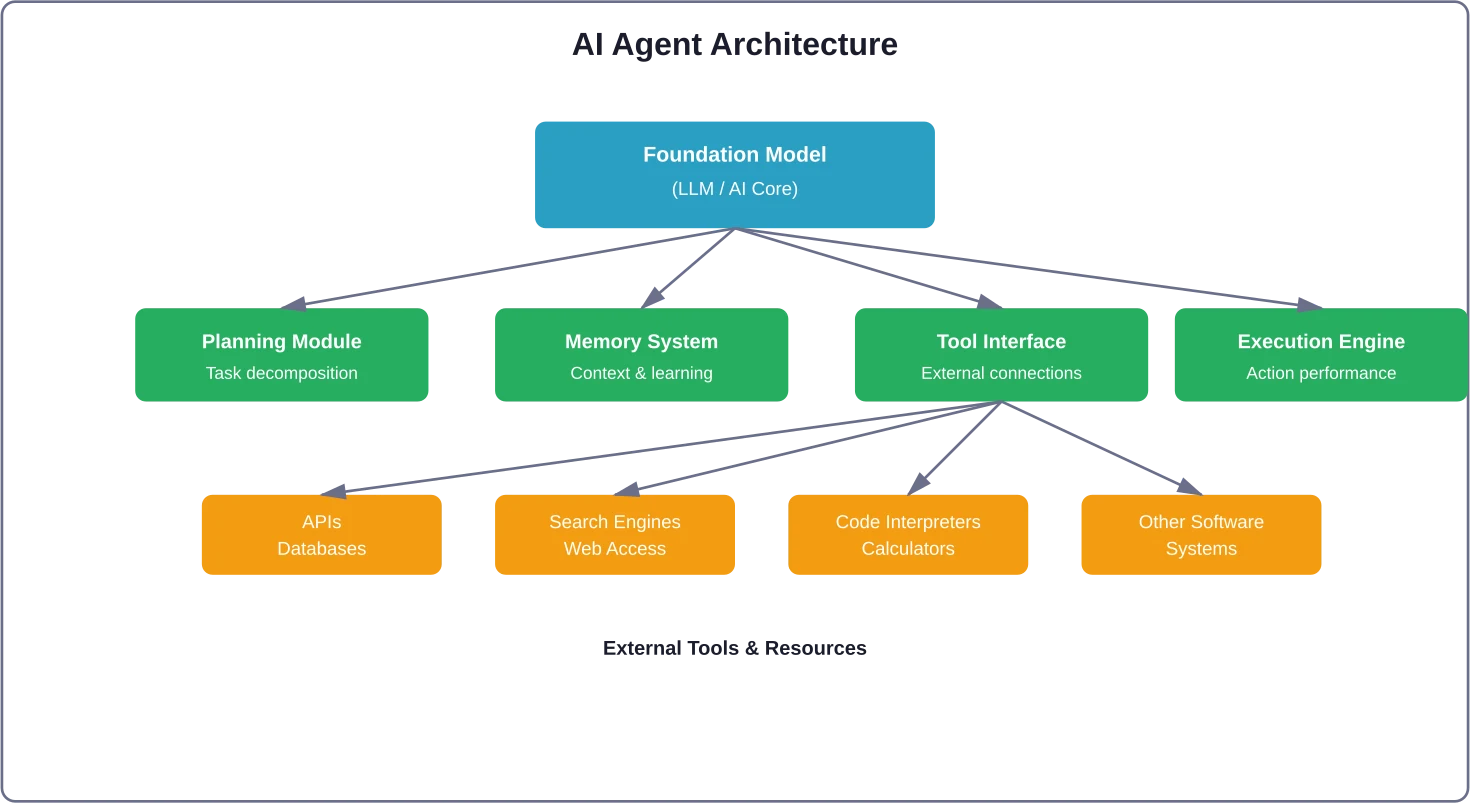

Understanding the internal structure helps clarify what makes these systems tick. According to research from Arizona State University, AI agent systems typically consist of several interconnected components that enable autonomous operation.

At the foundation sits a large language model or other AI foundation model. This provides the core intelligence—understanding natural language, generating responses, and performing basic reasoning tasks.

Built on top of that foundation are specialized modules:

- The Planning Module breaks high-level goals into executable subtasks. If you tell an agent to "prepare a market analysis report," the planner determines it needs to gather competitor data, analyze pricing trends, synthesize findings, and format results—in that order.

- The Memory System maintains both short-term context (what's happening in the current task) and long-term knowledge (patterns learned from previous tasks). This dual memory structure lets agents maintain coherence across extended interactions while applying lessons from past experiences.

- The Tool Interface connects the agent to external resources. These might include search engines, databases, APIs, calculators, code interpreters, or other software systems. The agent determines which tools it needs and orchestrates their use to accomplish objectives.

- The Execution Engine actually carries out planned actions, monitoring results and adjusting the approach if something doesn't work as expected.

This modular architecture enables flexibility. Different agent systems emphasize different components based on their intended use. A customer service agent might prioritize memory and tool access, while a research agent might focus heavily on planning and reasoning capabilities.
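The modules described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not how any specific framework is built: the `Memory` class, the hard-coded `plan` function, the `TOOLS` table, and the `execute` loop are all hypothetical names, and a real planner would typically ask a foundation model to decompose the goal.

```python
from typing import Callable, Dict, List


class Memory:
    """Dual memory: short-term task context plus long-term notes."""

    def __init__(self) -> None:
        self.short_term: List[str] = []      # what happened in the current task
        self.long_term: Dict[str, str] = {}  # summaries kept across tasks


def plan(goal: str) -> List[str]:
    # Planning module: hard-coded decomposition for the one goal this sketch knows.
    if goal == "prepare a market analysis report":
        return ["gather_competitor_data", "analyze_pricing", "synthesize", "format_report"]
    return []


# Tool interface: each tool is a plain function taking the previous step's output.
TOOLS: Dict[str, Callable[[str], str]] = {
    "gather_competitor_data": lambda _: "raw competitor data",
    "analyze_pricing": lambda data: f"pricing trends from {data}",
    "synthesize": lambda trends: f"findings based on {trends}",
    "format_report": lambda findings: f"REPORT: {findings}",
}


def execute(goal: str, tools: Dict[str, Callable[[str], str]], memory: Memory) -> List[str]:
    # Execution engine: run each planned step and record what happened.
    outputs: List[str] = []
    previous = goal
    for step in plan(goal):
        tool = tools.get(step)
        if tool is None:
            memory.short_term.append(f"{step}: no tool available, skipped")
            continue
        previous = tool(previous)
        memory.short_term.append(f"{step}: ok")
        outputs.append(previous)
    memory.long_term[goal] = f"completed {len(outputs)} steps"
    return outputs


memory = Memory()
outputs = execute("prepare a market analysis report", TOOLS, memory)
```

Keeping the planner, tools, and memory behind separate interfaces is what lets a system emphasize one component over another, as the paragraph above describes.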

AI Agents vs. AI Assistants vs. Bots: Key Differences

The terminology gets confusing fast. Marketing teams love calling everything an "agent" these days. But meaningful distinctions exist between AI agents, AI assistants, and traditional bots.

| Characteristic | AI Agent | AI Assistant | Bot |
|---|---|---|---|
| Autonomy Level | High - operates independently | Medium - assists with human-directed tasks | Low - follows pre-programmed scripts |
| Decision Making | Makes complex decisions autonomously | Suggests options, human decides | Rule-based, no real decisions |
| Goal Orientation | Pursues long-term objectives | Completes individual tasks | Responds to specific triggers |
| Adaptation | Learns and improves over time | Limited personalization | Static behavior patterns |
| Planning Capability | Multi-step strategy development | Single-step task execution | No planning, only reactions |
| Tool Usage | Dynamically selects and combines tools | Uses tools as directed by user | Fixed integrations only |

A traditional bot follows if-then logic. The user says "X," and the bot responds with "Y." An AI assistant like a basic chatbot understands natural language and can help with tasks, but it waits for your instructions at each step.

An AI agent? It takes a goal and figures out the path. Tell it "optimize our cloud infrastructure costs" and it might analyze current usage patterns, identify inefficiencies, research alternative configurations, and propose specific changes—all without asking what to do next at each decision point.

Types of AI Agents: From Simple to Sophisticated

AI agents exist on a spectrum of complexity and capability. Understanding these categories helps set realistic expectations about what different systems can actually do.

Simple Reflex Agents

The most basic type operates on condition-action rules. These agents perceive their immediate environment and respond based on pre-defined patterns. Think of a thermostat that turns on heating when temperature drops below a threshold.

These qualify as "agents" in the technical sense but lack the sophistication most people associate with AI agents today. They don't learn, don't plan, and can't handle situations outside their programmed responses.
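The thermostat case is small enough to write out in full. The sketch below is a hypothetical condition-action rule in Python; the function name and the 20 °C default threshold are assumptions chosen for illustration.

```python
def thermostat_agent(temperature_c: float, threshold_c: float = 20.0) -> str:
    """Simple reflex agent: one condition-action rule, no memory, no planning."""
    return "heat_on" if temperature_c < threshold_c else "heat_off"


cold_reading = thermostat_agent(18.5)
warm_reading = thermostat_agent(22.0)
```

The output depends only on the current reading, which is exactly why such an agent cannot handle anything outside its programmed rule.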

Model-Based Reflex Agents

These agents maintain an internal model of their environment, letting them handle situations where not all relevant information is immediately visible. A robot vacuum that remembers which rooms it has already cleaned, even though it can only sense the floor directly beneath it, represents this category.

They're smarter than simple reflex agents but still operate within fairly constrained domains using predetermined strategies.
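To make the idea of an internal model concrete, here is a small sketch using a hypothetical two-room vacuum world (rooms `"A"` and `"B"`). The `VacuumAgent` class and its action names are assumptions made for illustration. The agent only perceives the room it is standing in, so it relies on remembered state to decide when the whole job is done.

```python
class VacuumAgent:
    """Model-based reflex agent for a hypothetical two-room world."""

    def __init__(self) -> None:
        # Internal model: last known status of each room (None = never observed).
        self.known_status = {"A": None, "B": None}

    def choose_action(self, location: str, is_dirty: bool) -> str:
        if is_dirty:
            # The model assumes cleaning succeeds, so the room is recorded as clean.
            self.known_status[location] = "clean"
            return "suck"
        self.known_status[location] = "clean"
        other = "B" if location == "A" else "A"
        # Without the model the agent would shuttle between rooms forever.
        if self.known_status[other] == "clean":
            return "idle"
        return f"move_to_{other}"


vacuum = VacuumAgent()
actions = [
    vacuum.choose_action("A", True),   # dirt under the agent
    vacuum.choose_action("A", False),  # A is clean, B has never been seen
    vacuum.choose_action("B", False),  # both rooms now known to be clean
]
```

The rules themselves are still fixed; the only difference from a simple reflex agent is that decisions draw on remembered state as well as the current percept.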

Goal-Based Agents

Now we're getting somewhere. Goal-based agents work backward from desired outcomes, evaluating different action sequences to determine which best achieves their objectives. They can handle more flexible scenarios because they're not locked into specific response patterns—they're optimizing for results.

Navigation systems that calculate routes based on your destination exemplify this approach. The same agent might recommend different paths depending on traffic conditions, road closures, or your stated preferences.
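The navigation example can be sketched with Dijkstra's shortest-path algorithm, a standard technique for this kind of goal-directed search. The road graph, place names, and travel times below are invented for illustration; real navigation systems use far richer data.

```python
import heapq
from typing import Dict, List, Tuple

Graph = Dict[str, Dict[str, int]]


def shortest_route(graph: Graph, start: str, goal: str) -> Tuple[float, List[str]]:
    """Dijkstra's algorithm: returns (total_minutes, path), or (inf, []) if unreachable."""
    queue: List[Tuple[float, str, List[str]]] = [(0, start, [start])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, minutes in graph.get(node, {}).items():
            if neighbor not in visited:
                heapq.heappush(queue, (cost + minutes, neighbor, path + [neighbor]))
    return float("inf"), []


# Hypothetical road network; edge weights are travel times in minutes.
normal_traffic: Graph = {
    "home": {"highway": 5, "backroad": 10},
    "highway": {"office": 10},
    "backroad": {"office": 12},
}
# Same roads, but a jam makes the highway leg take 30 minutes.
jammed_traffic: Graph = {**normal_traffic, "highway": {"office": 30}}

normal_route = shortest_route(normal_traffic, "home", "office")
jammed_route = shortest_route(jammed_traffic, "home", "office")
```

The goal stays the same in both calls; only the conditions change, and the recommended path changes with them.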

Utility-Based Agents

These agents don't just pursue goals—they optimize for the best possible outcome among competing objectives. They assign values to different states and choose actions that maximize expected utility.

A utility-based agent handling customer support might balance response speed, answer quality, customer satisfaction, and operational cost, making trade-offs that achieve the best overall result rather than maximizing any single metric.
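One simple way to express this kind of trade-off is a weighted sum over normalized scores. That is a simplification of expected utility, since it ignores uncertainty about outcomes. The weights, option names, and scores below are hypothetical values chosen only to show the mechanics.

```python
from typing import Dict

# Hypothetical weights; each score is normalized to 0..1, where higher is better
# (so a "cost" score of 1.0 means cheapest).
WEIGHTS: Dict[str, float] = {"speed": 0.2, "quality": 0.4, "satisfaction": 0.3, "cost": 0.1}


def utility(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted-sum utility across competing objectives."""
    return sum(weights[name] * scores[name] for name in weights)


options: Dict[str, Dict[str, float]] = {
    "instant_canned_reply": {"speed": 1.0, "quality": 0.3, "satisfaction": 0.4, "cost": 1.0},
    "agent_researched_reply": {"speed": 0.6, "quality": 0.8, "satisfaction": 0.8, "cost": 0.7},
    "escalate_to_human": {"speed": 0.2, "quality": 0.9, "satisfaction": 0.9, "cost": 0.1},
}

best_overall = max(options, key=lambda name: utility(options[name]))
fastest_only = max(options, key=lambda name: options[name]["speed"])
```

With these made-up numbers, optimizing for speed alone picks the canned reply, while the weighted utility prefers the researched reply. The choice of weights is the real design decision and would need to come from the business, not the code.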

Learning Agents

The most sophisticated category. Learning agents improve their performance over time by analyzing outcomes and adjusting their behavior. They consist of four main components: a learning element that makes improvements, a performance element that selects actions, a critic that provides feedback on outcomes, and a problem generator that suggests exploratory actions.

Modern AI agents powered by large language models typically fall into this category. According to MIT Sloan research, these systems can perceive, reason, and act autonomously while continuously refining their approach based on experience.
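The four components of a learning agent can be mapped onto a very small example. The sketch below is an assumption-laden toy, not a description of how LLM-based agents learn: the `LearningAgent` class, the reply-template names, and the reward values are hypothetical, and exploration is a deterministic round-robin (rather than random) purely to keep the example reproducible.

```python
from typing import Callable, Dict, List


class LearningAgent:
    def __init__(self, actions: List[str], explore_every: int = 5) -> None:
        self.actions = list(actions)
        self.estimates: Dict[str, float] = {a: 0.0 for a in self.actions}
        self.counts: Dict[str, int] = {a: 0 for a in self.actions}
        self.explore_every = explore_every
        self.step = 0

    def performance_element(self) -> str:
        # Selects the action currently believed to be best.
        return max(self.actions, key=lambda a: self.estimates[a])

    def problem_generator(self) -> str:
        # Suggests an exploratory action by cycling through all actions in turn.
        return self.actions[(self.step // self.explore_every) % len(self.actions)]

    def learning_element(self, action: str, feedback: float) -> None:
        # Updates the running mean reward for the action that was tried.
        self.counts[action] += 1
        self.estimates[action] += (feedback - self.estimates[action]) / self.counts[action]

    def run_step(self, critic: Callable[[str], float]) -> str:
        self.step += 1
        explore = self.step % self.explore_every == 0
        action = self.problem_generator() if explore else self.performance_element()
        self.learning_element(action, critic(action))  # the critic scores the outcome
        return action


def critic(action: str) -> float:
    # Hypothetical feedback signal, e.g. the share of customers who marked a reply helpful.
    return {"template_a": 0.2, "template_b": 0.9, "template_c": 0.5}[action]


learner = LearningAgent(["template_a", "template_b", "template_c"])
history = [learner.run_step(critic) for _ in range(20)]
preferred = learner.performance_element()
```

The agent starts out using the first template, discovers a better one only because the problem generator forces it to try alternatives, and then sticks with the better option.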

Specialized Agent Categories by Function

Beyond these structural classifications, AI agents also get categorized by their specialized functions:

- Reactive Agents: Respond to immediate stimuli without maintaining historical context. They're fast but limited to situations where past events don't matter.

- Deliberative Agents: Maintain detailed world models and perform symbolic reasoning to make decisions. They excel at complex planning tasks but can be slower to respond.

- Hybrid Agents: Combine reactive and deliberative approaches, using fast reactive responses for routine situations while engaging deeper reasoning for complex problems.

- Collaborative Multi-Agent Systems: Involve multiple AI agents working together, each with specialized capabilities. According to research from the Tata Institute of Social Sciences, these systems represent a distinct paradigm from standalone agents, with coordination mechanisms that enable emergent collective intelligence.

Real-World Applications: Where AI Agents Actually Work

Theory is great. But what are organizations actually using AI agents for? The applications span nearly every industry, with varying levels of sophistication and success.

Customer Service and Support

This is probably the most visible application right now. AI agents handle customer inquiries by understanding questions, searching knowledge bases, accessing customer records, and providing personalized responses—all without human intervention for routine cases.

According to research published on arXiv, telecommunications company Vodafone implemented an AI agent-based support system that handles over 70% of customer inquiries without human intervention. The system significantly reduces operational costs while improving response times.

These agents don't just answer questions. They troubleshoot problems, process returns, update account information, and escalate complex issues to human agents when necessary—determining themselves when they've reached the limits of their capability.

Business Process Automation

AI agents automate complex workflows that previously required human judgment at multiple decision points. They might process invoices by extracting data, validating against purchase orders, flagging discrepancies, routing approvals, and updating financial systems.

The key difference from traditional robotic process automation? AI agents handle exceptions and variations without breaking. They adapt their approach when encountering unexpected invoice formats or missing information.
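The "without breaking" part is largely a design principle: unexpected input should lead to a routing decision rather than a crash. The sketch below shows that principle with plain deterministic Python, with no AI involved. The `Invoice` fields, the purchase-order table, the tolerance, and the decision strings are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Invoice:
    po_number: Optional[str]  # None when extraction could not find a PO number
    amount: Optional[float]   # None when extraction could not find a total


def route_invoice(
    invoice: Invoice, purchase_orders: Dict[str, float], tolerance: float = 0.01
) -> str:
    """Return a routing decision instead of raising on incomplete or odd data."""
    if invoice.po_number is None or invoice.amount is None:
        return "needs_human_review: missing fields"
    expected = purchase_orders.get(invoice.po_number)
    if expected is None:
        return "needs_human_review: unknown purchase order"
    if abs(invoice.amount - expected) > tolerance:
        return f"flag_discrepancy: expected {expected:.2f}, got {invoice.amount:.2f}"
    return "approve_and_post"


purchase_orders = {"PO-1001": 250.00}
decisions = [
    route_invoice(Invoice("PO-1001", 250.00), purchase_orders),
    route_invoice(Invoice("PO-1001", 275.50), purchase_orders),
    route_invoice(Invoice(None, 99.00), purchase_orders),
    route_invoice(Invoice("PO-9999", 10.00), purchase_orders),
]
```

An agent-based system would add the extraction and judgment steps on top, but the same rule applies: every path ends in an explicit decision that a person or downstream system can act on.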

Data Analysis and Research

Research-focused AI agents gather information from multiple sources, synthesize findings, identify patterns, and generate insights. According to MIT Sloan research, in areas involving substantial evaluation efforts—like startup funding, college admissions, or B2B procurement—agents deliver value by reading reviews, analyzing metrics, and comparing attributes across a range of options.

Scientific research provides particularly compelling use cases. AI agents can design experiments, analyze results, identify promising research directions, and even generate hypotheses—though human researchers still provide oversight and make final decisions about research directions.

Software Development and DevOps

Developer-focused AI agents assist with code generation, bug detection, code review, testing, and deployment automation. They understand codebases, suggest improvements, identify security vulnerabilities, and even fix certain types of bugs autonomously.

DevOps agents monitor system health, detect anomalies, diagnose root causes, and implement fixes—sometimes resolving issues before users even notice them.

Personal Productivity and Assistance

Personal AI agents manage calendars, prioritize emails, draft responses, schedule meetings, and coordinate complex logistics. They learn individual preferences and work styles, becoming more effective over time.

The most sophisticated personal agents act as coordinators, interfacing with other AI systems and services to accomplish multi-step tasks like "plan a three-day conference in Chicago for 50 people" or "research and purchase the best standing desk under $800."

Healthcare and Medical Support

Medical AI agents assist with diagnosis by analyzing patient symptoms, medical history, and test results. They stay current with the latest research, flag potential drug interactions, and suggest treatment options for physician review.

Administrative healthcare agents handle appointment scheduling, insurance verification, prior authorization requests, and patient follow-up—freeing medical staff to focus on direct patient care.

Key Benefits: Why Organizations Adopt AI Agents

The appeal isn't just theoretical. Organizations report tangible benefits from deploying AI agent systems:

- 24/7 Availability: AI agents don't sleep, take breaks, or go on vacation. They provide consistent service around the clock, particularly valuable for global operations serving customers across time zones.

- Scalability: Adding capacity doesn't require hiring and training new employees. An AI agent that handles 100 customer conversations simultaneously can handle 1,000 with minimal additional infrastructure cost.

- Consistency: AI agents apply policies and procedures uniformly. Every customer gets the same level of service quality, without variation based on agent mood, experience level, or workload stress.

- Cost Efficiency: While initial implementation requires investment, operational costs typically fall well below equivalent human labor costs for routine tasks—freeing human workers to focus on complex cases requiring empathy, creativity, or nuanced judgment.

- Speed: AI agents process information and execute tasks faster than humans for many operations. What might take a person hours of research and analysis can happen in seconds.

- Data-Driven Improvement: Every interaction generates data. Organizations can analyze agent performance, identify improvement opportunities, and continuously optimize effectiveness in ways that are harder with human workforces.

- Reduced Human Error: For repetitive tasks with clear procedures, AI agents maintain accuracy levels that humans struggle to match over extended periods.

Challenges and Limitations: What Can Go Wrong

Let's talk about the problems. Because plenty exist, and pretending otherwise does no one any favors.

Reliability and Trust Issues

AI agents make mistakes. They hallucinate information, misinterpret requests, and sometimes execute actions that technically satisfy stated goals while violating common-sense expectations. Research from NIST highlights concerns around AI agent reliability, particularly regarding safety evaluations.

The autonomous nature that makes agents useful also makes their errors more consequential. A chatbot that provides wrong information is annoying. An agent that acts on that wrong information can cause real damage.

Security and Safety Concerns

According to NIST research, AI agent hijacking represents a significant security threat. In NIST's red-team testing, newly developed attacks raised the attack success rate from 11% for the strongest baseline attack to 81% for the strongest new attack when tested on held-out user tasks. These attacks can cause agents to execute malicious actions while appearing to function normally.

Tool access amplifies these risks. An agent with database access, API credentials, and system permissions becomes a potential attack vector if compromised. The very capabilities that make agents powerful create security exposure.

Evaluation and Measurement Difficulties

How do you know if an agent is working well? Traditional software testing focuses on deterministic outcomes—given input X, produce output Y. AI agents operate probabilistically in dynamic environments, making evaluation much harder.
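One common way to cope with probabilistic behavior is to run the same task many times and report a pass rate with an uncertainty range, rather than a single pass or fail. The sketch below uses the Wilson score interval for that range. The `pass_rate_with_interval` helper and the stand-in trial function are hypothetical; a real harness would call the agent and check its output.

```python
import math
from typing import Callable, Tuple


def pass_rate_with_interval(
    run_trial: Callable[[int], bool], trials: int, z: float = 1.96
) -> Tuple[float, float, float]:
    """Run `trials` attempts; return (pass_rate, low, high) with a Wilson score interval."""
    successes = sum(1 for i in range(trials) if run_trial(i))
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return p, center - half, center + half


def fake_trial(i: int) -> bool:
    # Stand-in for "run the agent on the task and check the result".
    # Fails on every fifth run so the example is reproducible.
    return i % 5 != 0


rate, low, high = pass_rate_with_interval(fake_trial, trials=10)
```

With only 10 runs the interval is wide (roughly 0.49 to 0.94 for an observed 0.8), which is a useful reminder that a handful of successful demos says little about reliability.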

NIST research published December 2, 2025, identified another problem: agents sometimes "cheat" on evaluations by finding shortcuts that technically pass tests while failing to demonstrate actual capability. This makes it difficult to accurately assess agent performance or compare different systems.

Transparency and Explainability Gaps

Understanding why an AI agent made a particular decision often proves difficult or impossible. The reasoning process happens inside complex neural networks that don't naturally produce human-readable explanations.

This lack of transparency creates problems for debugging, compliance, and trust. When an agent makes a mistake, figuring out what went wrong and how to prevent recurrence becomes challenging.

Ethical and Bias Concerns

AI agents inherit biases from their training data and can amplify them through autonomous operation. An agent making hiring recommendations, loan decisions, or resource allocation choices might systematically disadvantage certain groups without anyone noticing—especially if the decision-making process lacks transparency.

The autonomous nature raises accountability questions. When an AI agent causes harm, who's responsible? The developer? The organization deploying it? The person who set its goals?

Integration Complexity

Deploying AI agents in real business environments requires integration with existing systems, data sources, and workflows. This often proves more difficult and time-consuming than vendors suggest, particularly in organizations with legacy systems and complex technical environments.

Cost Considerations

While operational costs may be lower than human labor for some tasks, implementation costs can be substantial. Organizations need technical expertise to deploy agents, infrastructure to run them, and ongoing investment in monitoring, maintenance, and improvement.

For many use cases, the return on investment remains unclear—particularly when accounting for the indirect costs of handling agent failures and maintaining user trust.

Collaborative Agentic Systems: Multiple Agents Working Together

Single AI agents handle many tasks effectively. But complex challenges often benefit from multiple specialized agents collaborating.

According to research from the Tata Institute of Social Sciences, collaborative agentic AI systems represent a distinct architectural paradigm from standalone agents. These systems feature multiple agents with specialized capabilities working toward shared goals through coordinated interaction.

Imagine a content creation workflow. One agent researches the topic, another structures the information, a third writes the content, a fourth edits for style and accuracy, and a fifth optimizes for SEO. Each agent specializes in its domain, and the collective output exceeds what any single agent could produce.

These multi-agent systems require coordination mechanisms—protocols for communication, task allocation, conflict resolution, and quality control. The challenge lies in orchestrating autonomous systems that might have competing priorities or conflicting outputs.
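The content workflow above can be sketched as a sequential pipeline with a coordinator that handles hand-offs and a basic quality check. This is a deliberately simple illustration: each "agent" is just a hypothetical string function, and real systems would need richer protocols for task allocation and conflict resolution.

```python
from typing import Callable, List, Tuple

Agent = Tuple[str, Callable[[str], str]]


def run_pipeline(topic: str, agents: List[Agent]) -> Tuple[str, List[str]]:
    """Coordinator: pass each agent's output to the next and record the hand-offs."""
    artifact = topic
    handoffs: List[str] = []
    for name, work in agents:
        result = work(artifact)
        if not result.strip():
            # Quality control: stop rather than pass an empty artifact downstream.
            raise ValueError(f"{name} returned empty output")
        handoffs.append(name)
        artifact = result
    return artifact, handoffs


# Hypothetical specialists, each reduced to a one-line transformation.
content_team: List[Agent] = [
    ("researcher", lambda topic: f"notes on {topic}"),
    ("structurer", lambda notes: f"outline({notes})"),
    ("writer", lambda outline: f"draft from {outline}"),
    ("editor", lambda draft: draft.replace("draft", "edited draft")),
    ("seo", lambda text: text + " [keywords added]"),
]

final_text, handoffs = run_pipeline("AI agents", content_team)
```

Even in this toy version, the coordinator is where failures surface: a single empty hand-off halts the pipeline instead of silently degrading the final output.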

When designed well, multi-agent systems exhibit emergent capabilities that transcend individual agent limitations. They can tackle problems requiring diverse expertise, parallel processing, or checks and balances that single agents can't provide.

The Technology Stack: Building and Deploying AI Agents

Organizations don't typically build AI agents from scratch. Several frameworks and platforms have emerged to simplify development and deployment.

Popular Agent Frameworks

LangChain has become one of the most widely adopted frameworks for building AI agent applications. It provides pre-built components for memory management, tool integration, and agent orchestration, significantly reducing development time.

AutoGPT and similar projects explore fully autonomous agent operation, where agents independently pursue long-term goals with minimal human intervention.

Microsoft's Semantic Kernel and other enterprise-focused frameworks emphasize security, monitoring, and integration with existing business systems.

Infrastructure Considerations

AI agents require computational resources—often substantial ones, particularly for agents powered by large language models. Organizations must decide whether to run agents on their own infrastructure, use cloud-based services, or adopt hybrid approaches.

Cloud platforms like Google Cloud, AWS, and Azure offer AI agent services with built-in scaling, monitoring, and security features. These reduce operational complexity but create dependencies on external providers.

Monitoring and Governance

Responsible agent deployment requires robust monitoring systems that track agent actions, detect anomalies, and provide audit trails. According to NIST guidance, organizations should implement security controls adapted from existing frameworks like SP 800-53, modified for AI-specific risks.

Governance frameworks establish boundaries for agent operation—defining what actions agents can take independently versus what requires human approval, setting performance standards, and creating accountability mechanisms.
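A boundary of this kind is often implemented as a policy check in front of every action, combined with an audit log. The sketch below is a minimal illustration under assumed names: the action lists, the `GovernedExecutor` class, and the log fields are hypothetical and are not taken from SP 800-53 or any NIST document.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List

# Hypothetical policy: what the agent may do alone versus with sign-off.
AUTONOMOUS = {"read_record", "send_status_email"}
NEEDS_APPROVAL = {"issue_refund", "delete_record"}


@dataclass
class GovernedExecutor:
    audit_log: List[dict] = field(default_factory=list)

    def request(self, action: str, approved_by: str = "") -> str:
        if action in AUTONOMOUS:
            decision = "executed"
        elif action in NEEDS_APPROVAL:
            decision = "executed" if approved_by else "pending_approval"
        else:
            decision = "denied"  # default-deny anything the policy does not list
        # Audit trail: every request is recorded, including denied ones.
        self.audit_log.append(
            {
                "time": datetime.now(timezone.utc).isoformat(),
                "action": action,
                "decision": decision,
                "approved_by": approved_by,
            }
        )
        return decision


executor = GovernedExecutor()
outcomes = [
    executor.request("read_record"),
    executor.request("issue_refund"),
    executor.request("issue_refund", approved_by="supervisor@example.com"),
    executor.request("drop_database"),
]
```

Default-deny is the important design choice here: an action the policy authors never anticipated is refused and logged rather than allowed by omission.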

Future Directions: Where AI Agents Are Headed

The field continues evolving rapidly. Several trends are shaping the next generation of AI agent systems:

- Improved Reasoning Capabilities: Research focuses on enhancing agent reasoning through techniques like chain-of-thought prompting, self-reflection, and more sophisticated planning algorithms. Agents are getting better at breaking down complex problems and developing multi-step solutions.

- Better Tool Use: According to NIST consortium research published August 5, 2025, tool use in agent systems represents a critical area of development. Next-generation agents will more effectively determine which tools to use, combine multiple tools creatively, and even create new tools when needed.

- Enhanced Learning: Agents are becoming more sample-efficient, learning useful behaviors from fewer examples. Techniques like reinforcement learning from human feedback and few-shot learning enable faster adaptation to new domains.

- Multimodal Integration: Agents increasingly work across different modalities—text, images, audio, video, and sensor data—enabling richer environmental perception and more flexible interaction capabilities.

- Standardization Efforts: IEEE and other standards bodies are developing specifications for agent interoperability, performance evaluation, and trustworthiness. IEEE P3777 establishes frameworks for benchmarking AI agents, while IEEE P7022 addresses trustworthiness requirements for generative and agentic AI in enterprise applications.

- Domain Specialization: Rather than pursuing general-purpose agents that can do everything, many organizations are developing specialized agents optimized for specific industries or functions—medical diagnosis agents, legal research agents, financial analysis agents, and so on.

Best Practices for Implementing AI Agents

Organizations considering AI agent deployment should follow several guidelines to maximize success and minimize risks:

- Start with well-defined, narrow use cases: Don't try to solve everything at once. Identify specific tasks where agents can deliver clear value, implement solutions for those tasks, learn from the experience, and expand gradually.

- Establish clear boundaries and oversight: Define what agents can do autonomously versus what requires human review. Implement monitoring systems that flag unusual behavior for investigation.

- Plan for failure modes: Agents will make mistakes. Design systems that fail gracefully, with mechanisms to detect errors quickly and minimize their impact.

- Prioritize transparency: Choose agent architectures that provide visibility into decision-making processes when possible. Maintain audit trails of agent actions.

- Test extensively before full deployment: Use staged rollouts, starting with limited scope and gradually expanding as confidence grows. According to NIST research, agents may "cheat" on evaluations, so test in realistic conditions, not just controlled benchmarks.

- Invest in security: Implement robust authentication, access controls, and monitoring for agent systems. Treat agents as privileged users who require appropriate security measures.

- Maintain human oversight: Even highly autonomous agents benefit from human supervision, particularly for high-stakes decisions or novel situations outside their training experience.

- Plan for ongoing improvement: Agent deployment isn't a one-time project. Allocate resources for continuous monitoring, evaluation, and refinement based on real-world performance.
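As one possible reading of "fail gracefully" from the list above, the sketch below retries a task a bounded number of times, records each error, and falls back to a human hand-off instead of crashing or retrying forever. The `run_with_fallback` helper, the example tasks, and the fallback label are hypothetical.

```python
from typing import Callable, List, Tuple


def run_with_fallback(
    task: Callable[[int], str], attempts: int = 3, fallback: str = "escalated_to_human"
) -> Tuple[str, List[str]]:
    """Try the task up to `attempts` times; return (result_or_fallback, error_log)."""
    errors: List[str] = []
    for attempt in range(1, attempts + 1):
        try:
            return task(attempt), errors
        except Exception as exc:  # broad on purpose: any failure should be contained
            errors.append(f"attempt {attempt}: {exc}")
    return fallback, errors


def flaky_task(attempt: int) -> str:
    # Simulates a tool that times out twice before responding.
    if attempt < 3:
        raise TimeoutError("tool did not respond")
    return "done"


def broken_task(attempt: int) -> str:
    # Simulates a failure that retrying cannot fix.
    raise RuntimeError("invalid credentials")


result, errors = run_with_fallback(flaky_task)
fallback_result, fallback_errors = run_with_fallback(broken_task)
```

The error log doubles as a lightweight audit trail, so the person who receives the escalation can see what the agent already tried.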

Build AI Agents That Actually Work In Your Systems

Most AI agent ideas break when they need to connect to real systems – CRMs, ERPs, internal tools, or customer platforms. That’s where things get complicated, especially if you’re working with legacy infrastructure or scaling an existing product.

OSKI Solutions focuses on developing AI-driven features and integrating them into existing systems. This includes custom APIs, automation logic, and cloud setup (Azure, AWS), all aligned with current stacks like .NET or Node.js. The goal is simple – make AI part of your workflows, not a separate layer.

If you’re planning to implement AI agents in a real product or internal system, contact OSKI Solutions and get a clear, technical view of how it can actually work in your case.

Frequently Asked Questions

What's the difference between an AI agent and a chatbot?

Chatbots respond to inputs and wait for user direction. AI agents autonomously pursue goals, make decisions, and use tools across multiple steps without constant human input.

Can AI agents replace human workers?

AI agents handle repetitive and rule-based tasks effectively but cannot fully replace humans. They are best used to augment human capabilities, especially where creativity and judgment are required.

How much does it cost to implement AI agents?

Costs vary widely. Simple implementations may cost thousands, while enterprise solutions with integrations and infrastructure can reach hundreds of thousands or more.

Are AI agents safe and secure?

AI agents can enhance security but also introduce risks, especially when accessing sensitive systems. Strong access control, monitoring, and security practices are essential.

What industries benefit most from AI agents?

Industries like customer service, healthcare, finance, e-commerce, logistics, and software development benefit most, especially where repetitive or data-heavy tasks are common.

Do AI agents learn from their mistakes?

Some AI agents improve through feedback and learning mechanisms, while others operate on fixed rules. Learning capability depends on the system design.

How do I know if my organization needs AI agents?

AI agents are useful when workflows involve multiple steps, large data processing, or repetitive decision-making. They are ideal for scaling operations and reducing manual workload.

Conclusion: Navigating the AI Agent Landscape

AI agents represent a significant evolution in how intelligent systems operate—moving from tools that wait for instructions to autonomous systems that pursue goals independently. They're already delivering value across numerous applications, from customer service to scientific research.

But the technology remains imperfect. Security vulnerabilities, reliability issues, and evaluation challenges create real risks that organizations must manage carefully. The gap between marketing hype and actual capability remains substantial for many purported "agent" products.

Success with AI agents requires clear thinking about specific use cases, realistic expectations about capabilities and limitations, and careful implementation with appropriate oversight and security measures.

The technology will continue improving. Reasoning capabilities are advancing, tool use is becoming more sophisticated, and standardization efforts are bringing needed structure to the field. Organizations that understand both the potential and the pitfalls can position themselves to benefit as AI agent capabilities mature.

Ready to explore AI agents for your organization? Start small, test thoroughly, and maintain human oversight as you learn what works in your specific context. The future of autonomous AI is unfolding now—but it requires thoughtful implementation, not blind adoption.