How to Create an AI Agent: 2026 Developer Guide

Quick Summary: Creating an AI agent involves selecting a large language model, choosing a development framework (such as LangChain or OpenAI's AgentKit) or a no-code platform (such as n8n), defining the agent's purpose and tools, implementing a core reasoning loop (typically the ReAct pattern), and iteratively testing for reliability. According to NIST's AI Agent Standards Initiative (February 17, 2026), interoperability and security are becoming critical considerations as agent adoption accelerates.

AI agents are changing how businesses automate work. Not chatbots that answer questions, but systems that take action on behalf of users with minimal supervision.

Building one isn't as intimidating as it sounds. The ecosystem has matured rapidly over the past two years, and frameworks like LangChain and OpenAI's AgentKit have made agent development accessible to teams without deep machine learning expertise.

This guide walks through the practical steps of creating an AI agent, from selecting your foundation model to deploying in production. Real talk: most teams overthink this at first. The secret is starting simple and iterating based on what actually breaks.

Understanding What an AI Agent Actually Is

OpenAI defines an agent as a system with instructions (what it should do), guardrails (what it should not do), and access to tools (what it can do) to take action on the user's behalf. That's the working definition most developers use in 2026.

An agent differs from a standard language model interface in three key ways. First, it maintains context across multiple interactions. Second, it can invoke external tools like databases, APIs, or code interpreters. Third, it makes decisions about which actions to take without constant human prompting.

Think of it this way: a chatbot tells you the weather. An agent checks the forecast, notices rain, and automatically reschedules your outdoor meeting.

The Core Components Every Agent Needs

According to research from Universitat Politècnica de Catalunya and Technische Universität München published on arXiv, autonomous LLM agents rely on several fundamental building blocks. The language model itself acts as the reasoning engine. Tool interfaces allow the agent to interact with external systems. Memory systems store conversation history and relevant context.

The orchestration layer ties everything together. This is where frameworks like LangGraph or AgentKit come in. They manage the decision loop: the agent receives input, reasons about what to do, potentially calls tools, evaluates results, and repeats until the task is complete.

Most production agents also include guardrails. These prevent the agent from taking unauthorized actions, leaking sensitive data, or generating inappropriate outputs. More on that later.

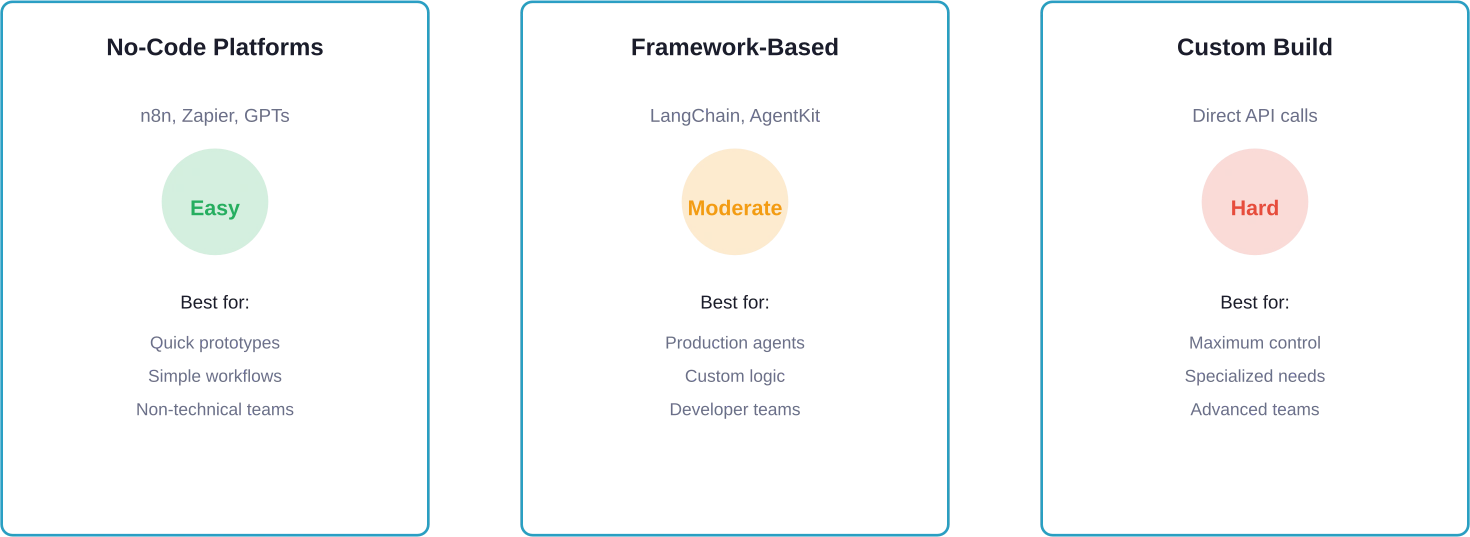

Choosing Your Development Approach

Three main paths exist for building agents in 2026: no-code platforms, high-level frameworks, and low-level custom implementations. Each suits different use cases and skill levels.

No-Code Platforms for Rapid Prototyping

Community members frequently recommend starting with no-code tools if the goal is testing an idea quickly. OpenAI's GPTs provide the lowest barrier to entry. They're excellent for personal assistants and simple workflows. But they lack the flexibility needed for complex enterprise use cases.

n8n offers visual workflow automation with a drag-and-drop interface for building multi-step agents. The platform includes pre-built integrations for hundreds of services. Pricing varies by plan with a free tier available for testing.

Zapier and similar automation platforms have added AI capabilities recently. These work well when the agent workflow is mostly linear: trigger, process with AI, take action. Less suitable when agents need complex decision trees.

Framework-Based Development

LangChain has become a widely adopted framework for agent development. According to the LangChain technical blog, companies like LinkedIn, Uber, and Klarna have deployed agents built on the framework in production.

In the blog post 'Building LangGraph: Designing an Agent Runtime from first principles,' the LangChain team describes rebuilding its agent runtime around control and durability rather than hiding complexity behind abstractions. LangGraph, their low-level agent runtime, treats agent logic as a directed graph where developers define exactly how data flows between steps.

OpenAI provides AgentKit as a modular toolkit for building and deploying agents. The platform includes Agent Builder, a visual canvas with starter templates, and deployment options through ChatKit or downloaded SDK code. Official documentation lives in OpenAI's GitHub repositories for both Python and TypeScript.

Anthropic offers similar capabilities through their Agent SDK. The distinction between these frameworks matters less than understanding their shared philosophy: give developers explicit control rather than magical abstractions that fail unpredictably.

When to Build Custom

Custom implementations make sense when frameworks impose too many constraints. High-security environments sometimes require this approach. So do cases with unusual latency requirements or specialized model architectures.

But here's the thing—most teams overestimate how custom they need to go. Frameworks have gotten flexible enough to handle edge cases that would've required custom code two years ago. Start with a framework unless there's a specific technical blocker.

Step-by-Step Agent Creation Process

Building a working agent breaks down into distinct phases. This process assumes a framework-based approach, but the principles apply regardless of implementation path.

Step 1: Define the Agent's Purpose and Scope

LangChain's 'How to Build an Agent' blog post recommends starting with realistic task examples rather than aspirational visions like "the agent will revolutionize customer service." Good examples are specific scenarios: "the agent will draft email responses to refund requests" or "the agent will extract key points from meeting transcripts and update Notion."

Narrow scope beats broad capability during initial development. An agent that does three things reliably outperforms one that attempts twenty things poorly. In the GAIA (General AI Assistants) benchmark paper (2023), human respondents scored 92% while GPT-4 equipped with plugins scored 15% on real-world questions requiring reasoning, web browsing, and tool use. That gap narrows when tasks are well defined and scoped appropriately.

Document what the agent should NOT do as carefully as what it should. These constraints become guardrails later. If the agent handles customer data, specify exactly which fields it can access and which actions require human approval.
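
One way to make that documentation enforceable is to write the scope down as data. The sketch below is a minimal illustration, assuming a hypothetical refund agent; the field names, action names, and the `AgentScope` class are invented for this example and are not part of any framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentScope:
    """Declares what a (hypothetical) refund agent may read and do."""
    readable_fields: frozenset
    allowed_actions: frozenset
    approval_required: frozenset  # subset of allowed_actions needing a human

    def can_read(self, field_name: str) -> bool:
        return field_name in self.readable_fields

    def check_action(self, action: str) -> str:
        """Return 'deny', 'needs_approval', or 'allow' for an action name."""
        if action not in self.allowed_actions:
            return "deny"
        if action in self.approval_required:
            return "needs_approval"
        return "allow"


# Illustrative values only; real field and action names depend on your system.
REFUND_AGENT_SCOPE = AgentScope(
    readable_fields=frozenset({"order_id", "order_total", "refund_status"}),
    allowed_actions=frozenset({"draft_reply", "issue_refund"}),
    approval_required=frozenset({"issue_refund"}),
)

print(REFUND_AGENT_SCOPE.check_action("draft_reply"))     # allow
print(REFUND_AGENT_SCOPE.check_action("issue_refund"))    # needs_approval
print(REFUND_AGENT_SCOPE.check_action("delete_account"))  # deny
```

Anything not listed is denied by default, which keeps the "should NOT do" list from depending on someone remembering to update it.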

Step 2: Select the Foundation Model

Model selection impacts everything downstream. Most production agents in 2026 use either OpenAI's GPT models or Anthropic's Claude. Google's Gemini and open-source alternatives like Llama also see adoption for specific use cases.

Task complexity drives model choice. Simple classification or extraction tasks often work fine with smaller, faster models. Complex reasoning or code generation typically requires frontier models. Cost matters too—running GPT-4 on high-volume tasks gets expensive quickly. Check official pricing pages for current rates since these change frequently.

Many successful implementations use multiple models. A smaller model handles initial triage, routing complex cases to a more capable (and expensive) model. This hybrid approach balances performance and cost.
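
The routing idea can be sketched in a few lines. This is only an illustration: the model identifiers are placeholders, and the keyword-based `triage` function stands in for what would normally be a call to the smaller model itself.

```python
from typing import Callable

# Hypothetical model identifiers; substitute whatever your provider offers.
SMALL_MODEL = "small-fast-model"
LARGE_MODEL = "large-reasoning-model"

COMPLEX_HINTS = ("why", "compare", "debug", "plan", "analyze")


def triage(task: str) -> str:
    """Crude stand-in for a small-model classifier: 'simple' or 'complex'."""
    text = task.lower()
    if len(text.split()) > 40 or any(hint in text for hint in COMPLEX_HINTS):
        return "complex"
    return "simple"


def pick_model(task: str, classify: Callable[[str], str] = triage) -> str:
    """Route simple tasks to the cheap model and the rest to the capable one."""
    return SMALL_MODEL if classify(task) == "simple" else LARGE_MODEL


print(pick_model("Extract the order number from this email"))
print(pick_model("Compare these two contracts and explain the risks"))
```

Because `pick_model` takes the classifier as a parameter, the keyword heuristic can later be swapped for a real model call without touching the routing logic.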

Step 3: Design the Tool Interface

Tools extend what the agent can do beyond text generation. Common tools include database queries, API calls, web searches, code execution, and file operations.

Each tool needs a clear interface definition. The agent must understand what the tool does, what inputs it requires, and what outputs to expect. Most frameworks use function calling or structured output schemas for this. OpenAI's structured outputs feature constrains the model's response to a JSON schema you supply, which makes tool integration more reliable.
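
As a rough illustration, a tool definition usually boils down to a name, a description, and a JSON Schema for the arguments. The `lookup_order` tool below is hypothetical, and the validator covers only the flat subset of JSON Schema used here; a production system would more likely rely on a schema validation library or the provider's own enforcement.

```python
import json

# A tool described in the JSON Schema style that function-calling APIs
# commonly accept. The tool name and fields are hypothetical.
LOOKUP_ORDER_TOOL = {
    "name": "lookup_order",
    "description": "Fetch the status of a customer order by its ID.",
    "parameters": {
        "type": "object",
        "properties": {
            "order_id": {"type": "string"},
            "include_items": {"type": "boolean"},
        },
        "required": ["order_id"],
        "additionalProperties": False,
    },
}

PY_TYPES = {"string": str, "boolean": bool, "integer": int, "number": (int, float)}


def validate_args(tool: dict, raw_json: str) -> dict:
    """Parse model-produced arguments and check them against the tool schema.

    Handles only the flat subset of JSON Schema used above.
    """
    args = json.loads(raw_json)
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")
    schema = tool["parameters"]
    for name in schema["required"]:
        if name not in args:
            raise ValueError(f"missing required argument: {name}")
    for name, value in args.items():
        if name not in schema["properties"]:
            raise ValueError(f"unexpected argument: {name}")
        expected = PY_TYPES[schema["properties"][name]["type"]]
        if not isinstance(value, expected):
            raise ValueError(f"wrong type for argument: {name}")
    return args


print(validate_args(LOOKUP_ORDER_TOOL, '{"order_id": "A-1001"}'))
```

Validating arguments before the tool runs means a malformed model output fails loudly at the boundary instead of deep inside a database or API call.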

| Tool Type | Common Use Cases | Complexity |
|---|---|---|
| Database queries | Retrieving customer data, searching records | Medium |
| API calls | Sending emails, creating tickets, posting updates | Low to Medium |
| Web search | Finding current information, research | Low |
| Code execution | Data analysis, calculations, transformations | High |
| File operations | Reading documents, generating reports | Medium |
| Custom integrations | Business-specific systems | Varies |

Start with one or two tools. Add more only after the basic loop works reliably. Tool sprawl is a common failure mode—agents given too many options make poor decisions about which to use.

Step 4: Implement the Reasoning Loop

The reasoning loop determines how the agent decides what to do next. The ReAct pattern (Reasoning + Acting) has become standard. The agent observes the current state, reasons about what action to take, executes that action, observes the result, and repeats.

LangChain's create_agent function provides a proven ReAct implementation. AgentForge, a framework documented in arXiv research (arXiv:2601.13383, submitted January 19, 2026), formalizes skill composition as a directed acyclic graph (DAG). This approach represents arbitrary sequential and parallel task workflows while maintaining clarity about execution flow.

The critical implementation detail: maintaining proper context at each step. According to LangChain's framework philosophy blog, the hard part of building reliable agents is ensuring the language model has appropriate context for every decision. This includes conversation history, tool results, intermediate reasoning steps, and any relevant background data.
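
To make the loop concrete, here is a framework-free sketch of reason, act, observe. It does not use LangChain or any real model: `scripted_model` is a hard-coded stand-in for an LLM call, and the two tools are toy functions borrowed from the weather example earlier in this guide.

```python
from typing import Callable, Dict, List

# Tools are plain functions from a string argument to a string observation.
TOOLS: Dict[str, Callable[[str], str]] = {
    "get_forecast": lambda city: f"Rain expected in {city} at 3pm",
    "reschedule": lambda meeting: f"Moved '{meeting}' to tomorrow",
}


def scripted_model(history: List[str]) -> dict:
    """Stand-in for an LLM call: picks the next step from what it has seen.

    A real agent would send `history` to a model and parse its reply.
    """
    observations = [h for h in history if h.startswith("Observation:")]
    if not observations:
        return {"thought": "Check the weather first.",
                "action": "get_forecast", "input": "Berlin"}
    if len(observations) == 1 and "Rain" in observations[0]:
        return {"thought": "Rain is coming, move the meeting.",
                "action": "reschedule", "input": "outdoor standup"}
    return {"thought": "Done.", "final": "Meeting rescheduled due to rain."}


def run_agent(task: str, model=scripted_model, max_steps: int = 5) -> str:
    history = [f"Task: {task}"]
    for _ in range(max_steps):
        step = model(history)                           # reason
        history.append(f"Thought: {step['thought']}")
        if "final" in step:
            return step["final"]
        result = TOOLS[step["action"]](step["input"])   # act
        history.append(f"Observation: {result}")        # observe
    return "Stopped: step limit reached"


print(run_agent("Keep my outdoor standup dry"))
```

Note that `history` is the only thing the model sees on each turn. That is the context problem in miniature: whatever is missing from that list is invisible to the next decision.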

Step 5: Add Memory and State Management

Agents need to remember previous interactions and maintain state across turns. Short-term memory stores the current conversation. Long-term memory might include user preferences, past decisions, or learned patterns.

Implementation varies by framework. LangGraph provides built-in state management through checkpoints. Custom implementations often use databases or vector stores. For agents handling sensitive data, careful consideration of what gets persisted and how long it's retained matters for both performance and compliance.

State management also determines durability. According to LangChain's design philosophy, production agents must handle interruptions gracefully. If the system crashes mid-task, can the agent resume where it left off? Durable execution requires persisting state at each step.
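
The resume-after-crash behavior can be illustrated with nothing more than a JSON file. This sketch is not LangGraph's checkpointer; the step names and file layout are assumptions chosen to show the idea of saving state after every step.

```python
import json
import os
import tempfile

STEPS = ["fetch_ticket", "draft_reply", "send_reply"]  # hypothetical task steps


def load_state(path: str) -> dict:
    """Return the saved state, or a fresh one if no checkpoint exists."""
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"next_step": 0, "log": []}


def save_state(path: str, state: dict) -> None:
    """Write to a temp file and rename so a crash never leaves a partial file."""
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump(state, f)
    os.replace(tmp, path)


def run(path: str, crash_before: str = "") -> dict:
    state = load_state(path)
    while state["next_step"] < len(STEPS):
        step = STEPS[state["next_step"]]
        if step == crash_before:
            raise RuntimeError(f"simulated crash before {step}")
        state["log"].append(step)       # the real work would happen here
        state["next_step"] += 1
        save_state(path, state)         # checkpoint after every step
    return state


checkpoint = os.path.join(tempfile.mkdtemp(), "agent_state.json")
try:
    run(checkpoint, crash_before="send_reply")
except RuntimeError as err:
    print(err)
print(run(checkpoint)["log"])  # resumes at send_reply; earlier steps are not redone
```

The second call picks up at the step that never completed. In a real agent, steps with side effects also need to be idempotent, since a crash can happen after the work is done but before the checkpoint is written.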

Step 6: Implement Guardrails and Safety Measures

Guardrails prevent agents from taking harmful or unintended actions. OpenAI's agent documentation recommends multiple layers: input validation, output filtering, action approval workflows, and monitoring.

Input guardrails catch malicious or nonsensical requests before the agent processes them. Output guardrails ensure generated content meets safety and quality standards. Structured outputs help here—constraining the model to specific JSON schemas reduces the risk of unexpected formats.

For high-stakes actions like financial transactions or data deletions, human-in-the-loop approval adds a safety layer. The agent proposes the action, a human reviews and approves, then the agent executes. This slows the workflow but dramatically reduces risk.
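
A minimal sketch of that propose, review, execute flow follows. The action names, the `cautious_reviewer` stand-in for a human, and the in-memory audit log are all assumptions made for illustration.

```python
from typing import Callable, List

HIGH_STAKES = {"refund_payment", "delete_record"}  # hypothetical action names
audit_log: List[dict] = []


def execute(action: str, payload: dict,
            approver: Callable[[str, dict], bool]) -> str:
    """Run low-risk actions directly; ask a human before high-stakes ones."""
    if action in HIGH_STAKES and not approver(action, payload):
        audit_log.append({"action": action, "status": "rejected"})
        return "rejected"
    # The real side effect (API call, database write) would go here.
    audit_log.append({"action": action, "status": "executed"})
    return "executed"


def cautious_reviewer(action: str, payload: dict) -> bool:
    """Stand-in for a human reviewer: approves refunds up to 100 only."""
    return action == "refund_payment" and payload.get("amount", 0) <= 100


print(execute("send_email", {"to": "user@example.com"}, cautious_reviewer))
print(execute("refund_payment", {"amount": 40}, cautious_reviewer))
print(execute("refund_payment", {"amount": 900}, cautious_reviewer))
```

In practice the approver would be a queue, a chat prompt, or a ticket rather than a function, but the shape stays the same: the agent never reaches the side effect without passing through the gate.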

The Auton Agentic AI Framework research emphasizes governance patterns for agent communities. Access control limits which agents can perform which actions. Audit trails log all decisions and actions for later review. Compliance checks ensure agents operate within regulatory constraints.

Step 7: Test Systematically

Agent testing differs from traditional software testing. Nondeterministic outputs make simple pass/fail assertions inadequate. Instead, testing focuses on reliability, safety, and task completion rates.

LangChain's agent testing guide recommends starting with a set of realistic test cases that cover common scenarios and edge cases. Run each case multiple times—language models produce different outputs on identical inputs. Track completion rates, failure modes, and execution time.
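
A small harness for repeated runs might look like the following. The `flaky_agent` function is a deterministic stand-in that fails on every fourth call, used here only so the example behaves the same each time; the test cases and checks are invented.

```python
from collections import Counter
from itertools import count

_calls = count(1)


def flaky_agent(prompt: str) -> str:
    """Stand-in for a nondeterministic agent: every 4th call goes wrong."""
    return "ERROR" if next(_calls) % 4 == 0 else f"refund approved for {prompt}"


def evaluate(agent, cases, runs_per_case=8):
    """Run each case several times and report the completion rate per case."""
    report = {}
    for name, (prompt, passes) in cases.items():
        outcomes = Counter(passes(agent(prompt)) for _ in range(runs_per_case))
        report[name] = outcomes[True] / runs_per_case
    return report


CASES = {
    # case name: (input, check applied to the output)
    "simple_refund": ("order A-1", lambda out: "refund approved" in out),
    "edge_empty_id": ("", lambda out: out != "ERROR"),
}

print(evaluate(flaky_agent, CASES))
```

Reporting a rate per case, rather than a single pass or fail, is what lets you compare prompt or model changes against each other.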

Red team the agent deliberately. Try to make it do things it shouldn't. Probe for data leakage, unauthorized actions, or nonsensical responses. These adversarial tests reveal weaknesses that normal testing misses.

According to AgentForge evaluation benchmarks conducted in January 2026, comprehensive testing across four dimensions provides the most insight: task completion accuracy, development efficiency, runtime performance, and resource utilization.

Step 8: Deploy and Monitor

Production deployment introduces new considerations. Latency becomes critical—users won't wait 30 seconds for a response. Cost per interaction matters at scale. Reliability requirements tighten dramatically.

Most frameworks offer deployment options. OpenAI's AgentKit allows embedding workflows into websites via ChatKit or running SDK code on custom infrastructure. LangChain integrates with LangSmith for monitoring and optimization. Anthropic's Agent SDK provides production deployment capabilities with tracing.

Monitoring production agents requires specialized tooling. Track completion rates, average execution time, tool usage patterns, and failure modes. LangSmith and similar platforms provide dashboards for this. Set up alerts for unusual patterns—sudden drops in completion rate or spikes in tool failures often indicate problems.
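
If you are not yet using a monitoring platform, a completion-rate alert can be approximated with a rolling window. The window size and threshold below are arbitrary illustrative values, and `CompletionMonitor` is a made-up class, not part of LangSmith or any other product.

```python
from collections import deque


class CompletionMonitor:
    """Tracks recent task outcomes and flags a drop in completion rate."""

    def __init__(self, window: int = 20, min_rate: float = 0.8):
        self.outcomes = deque(maxlen=window)  # keeps only the latest results
        self.min_rate = min_rate

    def record(self, completed: bool) -> bool:
        """Store one outcome; return True when an alert should fire."""
        self.outcomes.append(completed)
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.rate() < self.min_rate

    def rate(self) -> float:
        return sum(self.outcomes) / len(self.outcomes)


monitor = CompletionMonitor(window=5, min_rate=0.8)
for ok in [True, True, True, True, True, False, False]:
    if monitor.record(ok):
        print(f"ALERT: completion rate fell to {monitor.rate():.0%}")
```

Waiting until the window is full avoids alerting on the first failure after a deploy, at the cost of reacting a few tasks later.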

The NIST AI Agent Standards Initiative, announced February 17, 2026, aims to ensure agent interoperability and security. As standards emerge, compliance may become a deployment consideration, particularly for regulated industries.

Common Frameworks and Platforms Compared

Choosing the right framework accelerates development. Each option offers different tradeoffs between flexibility, ease of use, and production readiness.

| Framework | Best For | Strengths | Considerations |
|---|---|---|---|
| LangChain/LangGraph | Production agents with complex workflows | Mature ecosystem, flexible, strong community support | Steeper learning curve than no-code options |
| OpenAI AgentKit | Teams already using OpenAI models | Visual builder, integrated deployment, official SDK | Tighter coupling to OpenAI ecosystem |
| Anthropic Agent SDK | Claude-based implementations | Code-as-library approach, production-focused | Newer than alternatives, evolving rapidly |
| n8n | No-code automation and simple agents | Visual interface, extensive integrations, accessible | Less suitable for complex reasoning flows |
| Google Vertex AI Agent Builder | Enterprise deployments on Google Cloud | Multi-agent orchestration, enterprise features, scalability | Requires Google Cloud infrastructure |
| Custom implementation | Specialized requirements or maximum control | Complete flexibility, no framework constraints | Requires building infrastructure from scratch |

LangChain and LangGraph

LangChain was first released in late 2022 and has evolved based on production use at scale. Companies like LinkedIn, Uber, and Klarna have deployed agents built on the framework. The team deliberately chose minimal abstraction after learning that heavy abstractions fail unpredictably in production.

LangGraph, the underlying runtime, treats agent logic as a directed graph. Developers define nodes (computation steps) and edges (transitions between steps). This makes execution flow explicit rather than hidden inside framework magic. Debugging becomes straightforward—trace which path the agent took through the graph.
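
The nodes-and-edges idea can be shown without the library. The sketch below is plain Python and deliberately does not use LangGraph's API; the node names and routing rule are invented to illustrate how an explicit graph makes the execution path traceable.

```python
from typing import Callable, Dict

State = Dict[str, object]


# Nodes are functions that take the state and return an updated copy.
def classify(state: State) -> State:
    urgent = "outage" in str(state["message"]).lower()
    return {**state, "urgent": urgent}


def auto_reply(state: State) -> State:
    return {**state, "result": "sent canned reply"}


def escalate(state: State) -> State:
    return {**state, "result": "paged on-call engineer"}


NODES: Dict[str, Callable[[State], State]] = {
    "classify": classify, "auto_reply": auto_reply, "escalate": escalate,
}

# Edges map a node to a function choosing the next node (None ends the run).
EDGES: Dict[str, Callable[[State], object]] = {
    "classify": lambda s: "escalate" if s["urgent"] else "auto_reply",
    "auto_reply": lambda s: None,
    "escalate": lambda s: None,
}


def run_graph(start: str, state: State):
    """Walk the graph from `start`, returning the final state and the path."""
    path, current = [], start
    while current is not None:
        path.append(current)
        state = NODES[current](state)
        current = EDGES[current](state)
    return state, path


final, path = run_graph("classify", {"message": "Checkout outage in EU"})
print(path, final["result"])
```

The returned `path` is the debugging aid: it records exactly which nodes ran and in what order.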

The framework is open source with standard integrations for any model or tool. This neutrality matters. Switching from OpenAI to Anthropic or adding a new database doesn't require rewriting the agent.

OpenAI AgentKit and Agent Builder

OpenAI provides AgentKit as a complete toolkit for agent creation. Agent Builder provides a visual canvas where developers drag and drop nodes to compose workflows. Start from templates, configure inputs and outputs, preview runs with live data.

When ready to deploy, embed the workflow into a website using ChatKit or download the SDK code to run it independently. The official documentation and GitHub repositories (Python and TypeScript) provide implementation details.

The tight integration with OpenAI's ecosystem is both a strength and limitation. Teams already standardized on OpenAI models benefit from seamless integration. Those wanting model flexibility may find the coupling constraining.

Anthropic Agent SDK

Anthropic's offering treats agent code as a library rather than a framework. This philosophy aligns with their "code as interface" approach across products. Developers write standard Python or TypeScript with agent capabilities imported as modules.

The SDK emphasizes production concerns: error handling, retries, streaming, and tracing. Less focus on visual builders or no-code approaches. This suits teams comfortable with code who want production-grade tooling without framework overhead.

No-Code Platforms

Based on community feedback, n8n comes up most often in discussions of no-code agent building. The platform's workflow automation capabilities extend naturally to agent-like behavior. A typical flow runs: trigger, LLM reasoning step, tool execution, conditional logic, output.

Google's Vertex AI Agent Builder targets enterprise use cases with features for multi-agent orchestration, governance, and scale. The platform requires Google Cloud infrastructure but provides enterprise-grade capabilities for teams already in that ecosystem.

These platforms work best when workflows are relatively linear and tool integrations are straightforward. Complex reasoning loops or custom logic often hit platform limitations, requiring migration to code-based frameworks.

Advanced Patterns and Considerations

Multi-Agent Systems

Some tasks benefit from multiple specialized agents rather than one generalist. A customer service system might employ separate agents for billing questions, technical support, and account management. Each agent has focused expertise and tools relevant to its domain.

Research on architecting agentic communities emphasizes governance and control patterns for multi-agent systems. Coordination mechanisms determine how agents communicate. Handoff protocols specify when one agent transfers a task to another. Access control limits which agents can perform which operations.
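
Handoff and access control can be sketched with two lookup tables. Everything here is illustrative: the three specialist agents are canned functions, the keyword router stands in for a model-based classifier, and the permission names are made up.

```python
from typing import Callable, Dict, Set

# Each specialist is a function; names and replies are illustrative.
AGENTS: Dict[str, Callable[[str], str]] = {
    "billing": lambda q: "billing: invoice resent",
    "technical": lambda q: "technical: restart the sync service",
    "account": lambda q: "account: password reset link sent",
}

# Access control: which operations each agent may perform.
PERMISSIONS: Dict[str, Set[str]] = {
    "billing": {"read_invoice", "resend_invoice"},
    "technical": {"read_logs"},
    "account": {"reset_password"},
}

KEYWORDS = {"invoice": "billing", "charge": "billing",
            "error": "technical", "crash": "technical",
            "password": "account", "login": "account"}


def handoff(question: str) -> str:
    """Pick the specialist for a question; default to the account agent."""
    for word, agent in KEYWORDS.items():
        if word in question.lower():
            return agent
    return "account"


def authorized(agent: str, operation: str) -> bool:
    return operation in PERMISSIONS.get(agent, set())


target = handoff("I was charged twice on my invoice")
print(target, "->", AGENTS[target]("I was charged twice on my invoice"))
print(authorized("technical", "reset_password"))
```

Keeping permissions in a table separate from the agents themselves means a routing mistake cannot grant an agent operations it was never meant to have.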

The Auton Agentic AI Framework provides a declarative architecture for multi-agent systems. According to research from Snap, the field is transitioning from Generative AI (probabilistic content creation) to Agentic AI (autonomous systems executing actions in external environments). This shift requires new architectural patterns.

Retrieval-Augmented Generation for Agents

RAG extends agent capabilities by connecting them to knowledge bases. When an agent needs information beyond its training data, it queries a vector database or document store, retrieves relevant content, and incorporates that context into its reasoning.

LangChain's RAG agent tutorial demonstrates implementation. The agent receives a question, determines whether external knowledge is needed, formulates a search query, retrieves relevant documents, synthesizes information, and generates a response. This pattern is particularly powerful for enterprise agents that need access to internal documentation, product catalogs, or customer data.
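
The retrieval step can be illustrated without a vector database. In this sketch a bag-of-words counter stands in for an embedding model and a three-entry dictionary stands in for the document store; both are simplifications made for the example, not how LangChain implements it.

```python
import math
from collections import Counter

DOCS = {  # toy knowledge base; a real agent would query a vector store
    "returns": "Items can be returned within 30 days with a receipt.",
    "shipping": "Standard shipping takes five business days.",
    "warranty": "Hardware carries a two year limited warranty.",
}


def embed(text: str) -> Counter:
    """Bag-of-words stand-in for an embedding model."""
    return Counter(word.strip(".,?!").lower() for word in text.split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 1) -> list:
    """Return the ids of the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(DOCS, key=lambda d: cosine(q, embed(DOCS[d])), reverse=True)
    return ranked[:k]


def build_prompt(question: str) -> str:
    """Combine the retrieved text and the question into one model prompt."""
    context = "\n".join(DOCS[d] for d in retrieve(question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


print(retrieve("How many days does shipping take?"))
```

Swapping `embed` for a real embedding model and `DOCS` for a vector store changes the quality of retrieval but not the shape of the pipeline.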

Workflow vs. Agent Tradeoffs

Not everything needs full agent autonomy. LangChain's framework philosophy makes an important distinction: workflows provide predictable, deterministic execution paths. Agents make dynamic decisions about what to do next.

Many successful implementations blend both approaches. The overall structure follows a workflow—steps execute in a defined sequence. Within certain steps, an agent makes decisions about tool usage or content generation. This hybrid approach balances reliability (workflows are predictable) with flexibility (agents adapt to context).

According to LangChain's guidance, most production systems exist on a spectrum between pure workflow and pure agent. Teams often start with more workflow structure and gradually introduce agent autonomy as they build confidence in reliability.

Production Deployment Checklist

Before deploying an agent to production, several critical considerations deserve attention beyond basic functionality.

Cost Management

Language model API costs scale with token usage. Each agent interaction consumes tokens for the prompt (instructions, context, conversation history) and completion (the model's response). Complex agents with many tool calls and reasoning steps can consume thousands of tokens per task.

Monitor token usage closely during testing. Set budget alerts through API providers. Consider caching strategies for repeated queries. Some teams use smaller models for simple tasks and route only complex cases to expensive models.
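
A back-of-the-envelope estimate helps before the first invoice arrives. The per-token prices below are made-up placeholders, not any provider's actual rates, and `cached_answer` returns a canned string where a real API call would go.

```python
from functools import lru_cache

# Hypothetical prices in USD per 1M tokens; check your provider's pricing page.
PRICE_IN_PER_M = 2.00
PRICE_OUT_PER_M = 8.00


def task_cost(prompt_tokens: int, completion_tokens: int, llm_calls: int = 1) -> float:
    """Estimate the cost of one task that makes `llm_calls` similar model calls."""
    per_call = (prompt_tokens * PRICE_IN_PER_M
                + completion_tokens * PRICE_OUT_PER_M) / 1_000_000
    return per_call * llm_calls


def monthly_cost(tasks_per_day: int, cost_per_task: float, days: int = 30) -> float:
    return tasks_per_day * cost_per_task * days


@lru_cache(maxsize=1024)
def cached_answer(question: str) -> str:
    """Repeated identical questions hit the cache instead of the model."""
    return f"(model answer to: {question})"  # placeholder for a real API call


per_task = task_cost(prompt_tokens=3000, completion_tokens=500, llm_calls=4)
print(f"per task: ${per_task:.4f}")
print(f"per month at 2,000 tasks/day: ${monthly_cost(2000, per_task):,.2f}")
```

With these assumed numbers, a four-call task costs about $0.04, which adds up to roughly $2,400 per month at 2,000 tasks per day. The multiplier from tool calls and reasoning steps is usually what surprises teams, not the per-token price.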

Security Considerations

Agents that interact with external systems need careful security design. Never expose API keys or credentials in prompts. Use secure credential management. Implement least-privilege access—agents should have permission only for actions they genuinely need.
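
These rules translate into a few lines of defensive code. The environment variable name, the operation strings, and the stubbed `call_tool` function are assumptions for illustration; a real deployment would pull the key from a secret manager and make an actual HTTPS request.

```python
import os

# Hypothetical variable name; use whatever your secret manager injects.
API_KEY_ENV = "TICKETING_API_KEY"

# Least privilege: the only operations this agent's credentials should cover.
ALLOWED_OPERATIONS = {"tickets:read", "tickets:create"}


def get_api_key() -> str:
    """Read the key from the environment so it never appears in code or prompts."""
    key = os.environ.get(API_KEY_ENV)
    if not key:
        raise RuntimeError(f"{API_KEY_ENV} is not set")
    return key


def build_prompt(user_message: str) -> str:
    """Prompts carry the task only; credentials stay on the tool side."""
    return f"You are a support agent. User says: {user_message}"


def call_tool(operation: str, payload: dict) -> dict:
    """Check the allowlist, then attach the credential outside the model's view."""
    if operation not in ALLOWED_OPERATIONS:
        raise PermissionError(f"operation not permitted: {operation}")
    headers = {"Authorization": f"Bearer {get_api_key()}"}
    # A real implementation would send `payload` with `headers` over HTTPS.
    return {"operation": operation, "authenticated": bool(headers)}
```

The key point is where the credential lives: it is attached inside `call_tool`, after the model has chosen an action, so it can never be echoed back in a model response.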

The NIST AI Agent Standards Initiative emphasizes security as a core concern. According to their February 2026 announcement, ensuring agents function securely on behalf of users is critical for adoption confidence and ecosystem interoperability.

Compliance and Privacy

Agents processing personal data must comply with privacy regulations like GDPR or CCPA. Document what data the agent collects, how long it's retained, and how it's used. Provide mechanisms for users to access or delete their data.

IEEE has published standards covering responsible AI licensing (IEEE 2840-2024) and autonomous system governance. While these don't always directly apply to enterprise agents, they provide useful frameworks for thinking about responsible deployment.

Real-World Use Cases and Examples

Seeing concrete examples helps solidify understanding. Several patterns emerge across successful agent implementations.

Customer Support Automation

Customer support agents handle common questions, route complex issues to humans, and maintain consistent quality. A typical implementation might include tools for searching knowledge bases, accessing customer account data, creating support tickets, and escalating to human agents.

The agent first attempts to answer questions using documentation. If that fails, it checks whether account-specific data might help. For complex technical issues beyond its capability, it creates a detailed ticket for human review including conversation history and attempted solutions.

Data Analysis and Reporting

Data analysis agents query databases, perform calculations, generate visualizations, and produce reports. Tools typically include SQL execution, Python code interpreters, and charting libraries.

An example workflow: the user asks for quarterly sales trends. The agent formulates appropriate SQL queries, executes them against the database, analyzes results using Python, generates charts, and composes a summary report with key insights and visualizations.

Email and Communication Management

Email agents draft responses, prioritize messages, extract action items, and route requests. LangChain's email agent guide walks through building this use case from idea to production deployment.

The agent monitors incoming email, classifies urgency and topic, drafts appropriate responses for routine requests, and flags complex messages for human attention. Over time, it learns patterns about which responses work well and which types of messages require human judgment.

Troubleshooting Common Issues

Building agents involves debugging unique problems. Several issues appear frequently enough to warrant specific attention.

Inconsistent Tool Selection

Sometimes agents choose the wrong tool for a task. This usually indicates unclear tool descriptions or overlapping capabilities. Solution: make tool descriptions more distinct and include examples of when to use each tool. Reduce the number of available tools if possible.

Context Overflow

Long conversations or complex tasks can exceed model context windows. The agent loses track of earlier information or fails entirely. Solution: implement context summarization, store detailed history in external memory, and provide only relevant context for each step.
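
One simple version of "provide only relevant context" is to keep the system message plus the most recent turns that fit a token budget. The sketch below assumes the first message is the system message and uses a rough four-characters-per-token estimate; a real implementation would use the model's tokenizer and replace the placeholder note with a generated summary.

```python
from typing import Dict, List

Message = Dict[str, str]


def estimate_tokens(text: str) -> int:
    """Rough heuristic (about 4 characters per token); use a real tokenizer in practice."""
    return max(1, len(text) // 4)


def fit_context(messages: List[Message], budget: int) -> List[Message]:
    """Keep the system message plus the newest messages that fit the budget.

    Dropped turns are replaced by a placeholder note; a fuller version would
    insert a model-written summary there instead.
    """
    system, rest = messages[0], messages[1:]
    used = estimate_tokens(system["content"])
    kept: List[Message] = []
    for msg in reversed(rest):                 # newest first
        cost = estimate_tokens(msg["content"])
        if used + cost > budget:
            break
        kept.append(msg)
        used += cost
    kept.reverse()
    dropped = len(rest) - len(kept)
    note: List[Message] = []
    if dropped:
        note = [{"role": "system", "content": f"[{dropped} earlier messages omitted]"}]
    return [system] + note + kept


history = [{"role": "system", "content": "You are a support agent."}]
history += [{"role": "user", "content": f"message {i} " + "x" * 80} for i in range(10)]
trimmed = fit_context(history, budget=80)
print(len(history), "->", len(trimmed))
```

The full history should still be written to external storage before trimming, so that dropped turns can be retrieved if a later step needs them.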

Infinite Loops

Agents occasionally get stuck repeating the same action. This happens when the agent doesn't recognize that an approach isn't working. Solution: implement maximum iteration limits, track whether progress is being made, and include explicit failure conditions that trigger different strategies.
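
Both safeguards, a step limit and a repeated-action check, fit in one small wrapper. The two agent functions here are toy stand-ins for a model-driven policy, and the default limits are arbitrary.

```python
from typing import Callable, List, Tuple

Action = Tuple[str, str]  # (tool name, argument)


def run_with_guards(next_action: Callable[[List[Action]], Action],
                    max_steps: int = 10, max_repeats: int = 2) -> str:
    """Stop when the agent finishes, repeats itself, or runs out of steps."""
    history: List[Action] = []
    for _ in range(max_steps):
        action = next_action(history)
        if action[0] == "finish":
            return "completed"
        # Abort if the last `max_repeats` actions were identical to this one.
        if history[-max_repeats:] == [action] * max_repeats:
            return f"aborted: repeated {action[0]} without progress"
        history.append(action)
    return "aborted: step limit reached"


def stuck_agent(history: List[Action]) -> Action:
    """Stand-in policy that keeps retrying the same failing search."""
    return ("search", "order 404")


def healthy_agent(history: List[Action]) -> Action:
    """Stand-in policy that searches two pages and then finishes."""
    return ("finish", "") if len(history) >= 2 else ("search", f"page {len(history)}")


print(run_with_guards(stuck_agent))
print(run_with_guards(healthy_agent))
```

An abort message that names the repeated action is more useful than a bare timeout, because it can be fed back to the model as a prompt to try a different strategy.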

Slow Execution

Multi-step reasoning takes time. Each LLM call adds latency. Solution: reduce unnecessary steps, use streaming where possible, consider parallel tool execution for independent operations, and manage user expectations about response time.
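
Parallel tool execution is often the cheapest latency win when calls are independent and I/O-bound. The sketch below uses a thread pool from the standard library; `slow_tool` simulates a network call with a sleep, and the tool names are invented.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def slow_tool(name: str, delay: float = 0.2) -> str:
    """Stand-in for an I/O-bound tool call such as an HTTP request."""
    time.sleep(delay)
    return f"{name}: ok"


def run_parallel(tool_names):
    """Run independent tool calls concurrently, preserving input order."""
    with ThreadPoolExecutor(max_workers=len(tool_names)) as pool:
        return list(pool.map(slow_tool, tool_names))


start = time.perf_counter()
results = run_parallel(["crm_lookup", "order_history", "shipping_status"])
elapsed = time.perf_counter() - start
print(results)
print(f"elapsed: {elapsed:.2f}s (three sequential calls would take about 0.6s)")
```

This only helps when the calls do not depend on each other's results. If one tool needs another's output, those two still have to run in sequence.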

The Future of Agent Development

Agent capabilities are advancing rapidly. Several trends are worth tracking as they'll impact development approaches.

In the GAIA (General AI Assistants) benchmark paper (2023), human respondents scored 92% while GPT-4 equipped with plugins scored 15% on real-world questions requiring reasoning and tool use. This gap is narrowing as models improve and agent frameworks evolve.

The NIST AI Agent Standards Initiative, launched in February 2026, will likely influence how agents are built, deployed, and certified. Interoperability standards may enable agents built on different frameworks to work together seamlessly. Security standards could become requirements for regulated industries.

Research into agent architecture continues. The AgentForge framework formalized skill composition as DAGs and showed that they can represent arbitrary sequential and parallel workflows. The Auton framework provides declarative specifications for governance and runtime execution. These academic advances filter into production frameworks over time.

Multi-agent systems are gaining attention. Rather than building one omniscient agent, teams are deploying specialized agent communities that collaborate on complex tasks. This mirrors how human organizations work and may prove more scalable than monolithic agents.

Frequently Asked Questions

What programming skills are needed to create an AI agent?

Basic programming knowledge is helpful but not always required. No-code platforms like n8n, OpenAI GPTs, or agent builders make it possible to create simple agents without writing code. For production-ready agents with custom workflows, Python or TypeScript is usually the most useful skill set.

How much does it cost to build and run an AI agent?

Costs depend on complexity, tooling, and usage volume. Development can be nearly free with open-source frameworks or free tiers, while runtime costs usually come from model API usage. Small tests may cost only a few dollars, while production agents can cost hundreds or thousands per month.

Can AI agents work offline or do they always need internet connectivity?

Most AI agents need internet access because they rely on hosted language models, external APIs, and online data sources. Some can run locally with offline models, but that usually requires powerful hardware and may reduce performance compared to cloud-based systems.

How do I ensure my AI agent doesn't make mistakes or take wrong actions?

You cannot eliminate mistakes entirely, but you can reduce risk with guardrails, structured outputs, validation, human approval for sensitive actions, thorough testing, and close monitoring. It is best to begin with low-risk workflows and expand only after proving reliability.

Which framework should I use for my first AI agent project?

For simple experiments, no-code tools like OpenAI GPTs or n8n are often the easiest starting point. For custom or production workflows, LangChain is a popular option because it offers flexibility and a mature ecosystem. The right choice depends on your technical skills and project goals.

How long does it take to build a working AI agent?

A basic agent can be built in a few hours using no-code tools. A more reliable production-ready agent with custom logic, testing, and deployment usually takes several weeks. More advanced multi-agent systems can take months depending on scope and complexity.

What's the difference between an AI agent and a chatbot?

A chatbot mainly answers questions in conversation, while an AI agent can take actions, use tools, and complete tasks autonomously. For example, a chatbot might tell you the weather, while an agent could check the forecast and automatically reschedule an outdoor meeting if rain is expected.

Taking the Next Steps

Creating an AI agent is more accessible now than ever before. The ecosystem has matured dramatically. Frameworks provide proven patterns. Documentation and examples are abundant. The technology works well enough for real production use.

Start simple. Pick one specific task the agent should accomplish. Choose a framework that matches technical skill level and requirements. Build a basic version quickly. Test it thoroughly. Deploy cautiously. Monitor closely. Iterate based on what actually breaks rather than theoretical concerns.

The gap between prototype and production involves addressing reliability, security, cost, and scale. But these challenges are manageable with systematic approaches and the right tooling. Companies are successfully deploying agents for customer support, data analysis, content generation, and process automation.

According to NIST's AI Agent Standards Initiative, agent adoption is accelerating rapidly. Organizations that build competency in agent development now will be well-positioned as the technology becomes standard infrastructure. The tools exist. The patterns are established. The time to build is now.

Ready to create something? Pick a framework, define a narrow task, and start building. The first working prototype often arrives faster than expected—and reveals exactly what to focus on next.