How to Create AI Agents: Complete 2026 Guide

Quick Summary: Creating AI agents involves selecting the right foundation model (like GPT-4 or Claude), choosing a development framework (such as LangChain or AutoGPT), defining clear tasks and goals, integrating external tools and data sources, and iteratively testing and refining agent behavior. Both no-code platforms and code-based approaches are viable depending on technical expertise.

AI agents represent a fundamental shift from simple chatbots to autonomous systems capable of reasoning, planning, and executing complex tasks with minimal human oversight. Unlike traditional AI that waits for prompts, agents take initiative, break down objectives into steps, and orchestrate multiple tools to accomplish goals.

The landscape has matured significantly. Foundation models now power agents that handle everything from customer service to software development. But building effective agents requires more than plugging in an API.

This guide cuts through the noise. Whether starting with no-code platforms or diving into custom development, these strategies provide a practical roadmap for creating agents that actually work.

Understanding AI Agent Architecture

Before building anything, it helps to understand what distinguishes an agent from a standard language model implementation. The difference lies in autonomy and tool use.

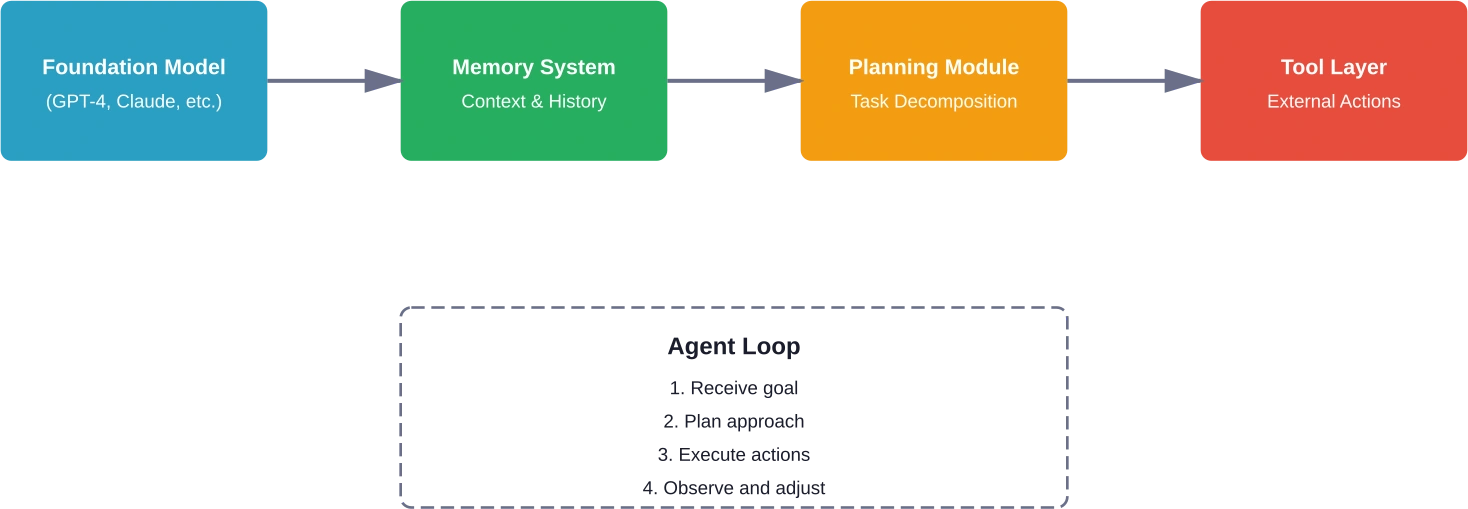

An AI agent combines several core components that work together. At the center sits a foundation model—typically a large language model that provides reasoning capabilities. According to research published on arXiv in the Agent Design Pattern Catalogue (Liu et al., May 2024), these foundation model-based agents leverage distinguished reasoning and language processing capabilities to take proactive, autonomous actions.

Agents need memory systems to track conversation history and maintain context across interactions. They require planning modules that decompose complex objectives into manageable subtasks. And critically, they need tool integration layers that allow interaction with external systems, databases, and APIs.

The architectural pattern typically follows this flow: receive goal, plan approach, execute actions using available tools, observe results, adjust strategy, repeat until objective achieved.
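The flow above can be sketched as a small loop. This is a minimal illustration, not a production design: `plan_next_step` is a hypothetical stand-in for the foundation model, and the `add` tool and its argument format are invented for the example.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tool registry: the tool name and behaviour are illustrative only.
TOOLS: Dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
}

def plan_next_step(goal: str, observations: List[str]) -> Optional[Tuple[str, str]]:
    """Stand-in for the model: return (tool, argument), or None when done."""
    if not observations:
        return ("add", goal)
    return None  # one observation is enough for this toy goal

def run_agent(goal: str, max_steps: int = 5) -> List[str]:
    observations: List[str] = []
    for _ in range(max_steps):                      # hard cap so the loop always ends
        step = plan_next_step(goal, observations)   # plan
        if step is None:                            # objective achieved
            break
        tool_name, argument = step
        result = TOOLS[tool_name](argument)         # execute
        observations.append(result)                 # observe, then loop to adjust
    return observations

print(run_agent("2+3"))  # ['5']
```

The `max_steps` cap is a design choice worth keeping in any real loop: it bounds cost when the planner never decides it is finished.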

Key Architectural Components

Memory comes in two flavors: short-term (conversation buffer) and long-term (vector database storage). Short-term memory keeps recent exchanges accessible. Long-term memory enables retrieval of relevant past interactions or domain knowledge.

Planning mechanisms vary from simple chain-of-thought prompting to sophisticated tree-search algorithms. The agent must reason about which tools to invoke, in what sequence, with which parameters.

Tool interfaces define how agents interact with the outside world. These might include search engines, calculators, code interpreters, database queries, or custom API endpoints. The OpenAI API now provides structured tool-calling capabilities that make this integration more reliable.

Choosing the Right Foundation Model

Model selection significantly impacts agent capabilities and cost structure. Different models excel at different tasks, and the choice depends on specific use case requirements.

GPT-4 and its variants offer strong reasoning capabilities and broad tool-use competence. Claude models from Anthropic provide excellent instruction-following and tend to produce more conservative, safety-oriented outputs. Open-source alternatives like Llama and Mistral offer cost advantages but may require more prompt engineering.

Consider these factors when selecting a model: reasoning depth needed, response latency requirements, context window size, cost per token, tool-calling reliability, and domain-specific performance.

For agents handling complex multi-step reasoning, models with stronger planning capabilities justify higher costs. For high-volume simple tasks, smaller efficient models make economic sense.

Model Performance Characteristics

Context window matters more for agents than chatbots. Agents accumulate tool outputs, intermediate reasoning steps, and memory retrievals. A 32k token context fills quickly. Models with 128k+ windows provide more operational headroom.
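A rough back-of-the-envelope calculation shows how quickly a window fills. The figures below (2,000 tokens of fixed system prompt and tool definitions, 1,500 tokens added per agent step) are assumptions chosen for illustration, not measurements.

```python
def steps_until_full(window_tokens: int, fixed_tokens: int, tokens_per_step: int) -> int:
    """Number of whole agent steps that fit before the context window is exhausted."""
    if tokens_per_step <= 0:
        raise ValueError("tokens_per_step must be positive")
    return max(0, (window_tokens - fixed_tokens) // tokens_per_step)

# Assumed overheads; substitute numbers measured from real traces.
for window in (32_000, 128_000):
    print(window, steps_until_full(window, fixed_tokens=2_000, tokens_per_step=1_500))
```

Under these assumed figures, a 32k window holds 20 steps and a 128k window holds 84.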

Tool-calling accuracy varies significantly across models. Some reliably format function calls and handle multi-tool scenarios. Others struggle with complex parameter passing or sequential tool use. Testing with representative workflows reveals these differences quickly.

Latency affects agent viability for real-time applications. In DeepMind's multi-agent research on Quake III Arena Capture the Flag, agents trained with an artificial reaction latency of 267 milliseconds, added to approximate human reaction times, still exceeded human-level performance. For customer-facing agents, response times under two seconds typically provide an acceptable user experience.

No-Code Platforms for Agent Creation

Not everyone needs to write code to build effective agents. Several platforms now provide visual interfaces for agent development, making the technology accessible to non-technical teams.

These tools handle the underlying infrastructure, model integration, and tool orchestration through drag-and-drop interfaces. Community discussions frequently mention these platforms as entry points for agent development.

Popular No-Code Options

n8n provides workflow automation with AI agent capabilities built in. The platform connects to hundreds of services and allows chaining together API calls, data transformations, and model invocations visually. The free tier supports basic agent workflows, with paid plans starting at $20/month for more advanced features.

OpenAI's GPT builder offers the simplest starting point for basic personal assistants. These custom GPTs handle straightforward tasks well but offer more limited multi-step reasoning and external tool integration than full agent frameworks.

Google Cloud's Vertex AI Agent Builder provides enterprise-grade agent development without code. The platform integrates with Google's ecosystem and offers built-in compliance features relevant for regulated industries.

Salesforce has integrated agent-building capabilities directly into their platform, allowing businesses to create AI agents that interact with CRM data and execute business processes.

| Platform | Best For | Technical Level | Key Strength |
|---|---|---|---|
| n8n | Workflow automation | Low to Medium | Extensive integrations |
| OpenAI GPT Builder | Simple assistants | None required | Fastest setup |
| Vertex AI Agent Builder | Enterprise deployments | Medium | Google ecosystem integration |
| Zapier (with AI) | Business process automation | Low | User-friendly interface |

The trade-off with no-code platforms involves flexibility. These tools excel at common patterns but struggle with highly custom logic or novel architectural requirements. For standard use cases—customer support, data retrieval, simple task automation—they provide rapid deployment paths.

Code-Based Agent Frameworks

Developers seeking maximum control and customization turn to code-based frameworks. These libraries provide building blocks for agent construction while leaving architectural decisions to the implementer.

LangChain emerged as an early leader in this space. The framework provides abstractions for chains (sequential operations), agents (autonomous decision-makers), and memory management. It supports multiple model providers and includes pre-built integrations for common tools.

AutoGPT pioneered the autonomous agent pattern, where the system pursues goals with minimal guidance. While the original project faced reliability challenges, it demonstrated the potential for highly autonomous systems and influenced subsequent frameworks.

Framework Comparison

LangChain offers comprehensive documentation and a large community. The framework's modular design allows mixing components as needed. However, some developers find the abstractions heavy for simple use cases.

LlamaIndex specializes in retrieval-augmented generation patterns. For agents that need to work with large document collections or knowledge bases, LlamaIndex provides optimized indexing and query capabilities.

Semantic Kernel from Microsoft integrates tightly with Azure services and provides enterprise-focused features like governance and audit trails. Research on agentic communities emphasizes the importance of such governance patterns for production deployments.

Haystack focuses on production-ready NLP applications with strong agent support. The framework emphasizes pipeline clarity and debugging tools.

| Framework | Primary Use Case | Language | Learning Curve |
|---|---|---|---|
| LangChain | General-purpose agents | Python, JavaScript | Medium |
| LlamaIndex | Knowledge retrieval | Python | Low to Medium |
| Semantic Kernel | Enterprise integration | Python, C# | Medium to High |
| AutoGPT | Autonomous exploration | Python | Medium |
| Haystack | Production NLP pipelines | Python | Medium |

Step-by-Step Agent Creation Process

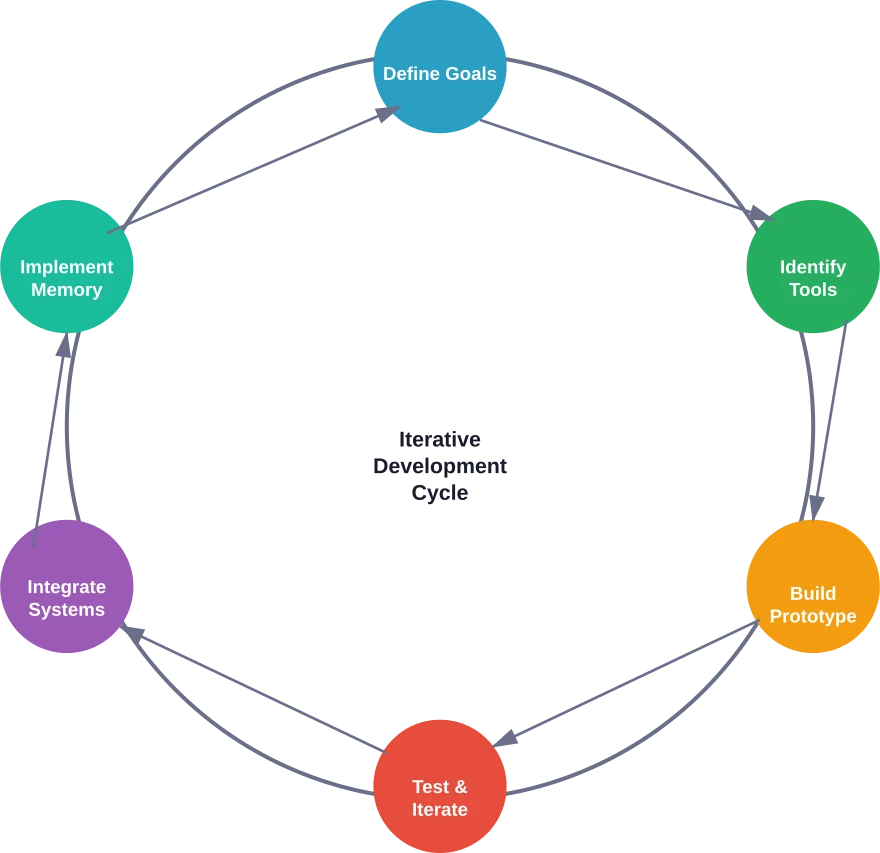

Regardless of the chosen platform or framework, creating effective agents follows a consistent pattern. These steps apply whether building with code or using visual tools.

Define Clear Objectives

Start by articulating exactly what the agent should accomplish. Vague goals produce unreliable agents. Specific, measurable objectives enable focused development and meaningful evaluation.

Bad objective: "Help users with questions." Good objective: "Answer product documentation questions using the knowledge base, escalating to human support when confidence is below 85% or when refund requests are mentioned."
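An objective this specific can be written down as a check. A minimal sketch, assuming the agent exposes a confidence score between 0 and 1 (how that score is produced is left open here) and that a plain keyword match stands in for refund detection:

```python
def should_escalate(confidence: float, message: str, threshold: float = 0.85) -> bool:
    """Escalate when confidence is below the threshold or a refund is mentioned."""
    mentions_refund = "refund" in message.lower()   # naive keyword match (assumption)
    return confidence < threshold or mentions_refund

print(should_escalate(0.92, "How do I export a report?"))  # False
print(should_escalate(0.60, "How do I export a report?"))  # True
print(should_escalate(0.95, "I would like a Refund"))      # True
```

Writing the rule as code also makes the success criteria testable from day one.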

Document success criteria upfront. What does good performance look like? How will the agent be measured? What failure modes are unacceptable?

Identify Required Tools and Data Sources

Map out which external capabilities the agent needs. Does it need to search the web? Query databases? Execute code? Call internal APIs? Send emails?

Each tool integration adds complexity and potential failure points. Start with the minimum viable set. Additional tools can be added iteratively as needs become clear.

Data sources deserve special attention. What information does the agent need access to? How will that data be kept current? What permissions and security constraints apply?

Build Initial Prototype

Start simple. Create a minimal version that demonstrates the core workflow without all the bells and whistles. This prototype validates assumptions and reveals unexpected challenges early.

For no-code builders, this means setting up the basic workflow with placeholder logic. For code-based development, implement the agent loop and one or two tool integrations.

Test with realistic scenarios immediately. Theoretical planning only goes so far. Real interactions expose issues that seem obvious in hindsight but are invisible during design.

Implement Memory and Context Management

Agents need to remember previous interactions and maintain context across multi-turn exchanges. How much history should be retained? When should context be cleared?

Short-term memory typically stores the recent conversation in the prompt. Long-term memory often uses vector databases like Pinecone, Weaviate, or Chroma for semantic retrieval of relevant past interactions.
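To make the retrieval idea concrete, here is a toy long-term memory. It deliberately avoids any vector database client: the word-count "embedding" is a stand-in for a real embedding model, and the class and method names are invented for this sketch.

```python
import math
from collections import Counter
from typing import List, Tuple

def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class LongTermMemory:
    def __init__(self) -> None:
        self._items: List[Tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> List[str]:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]

memory = LongTermMemory()
memory.add("customer prefers email contact")
memory.add("order 1001 shipped on monday")
print(memory.search("how does the customer want contact"))
# ['customer prefers email contact']
```

Only the top `k` results are placed in the prompt, which is how long-term memory keeps token usage bounded.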

Memory implementation significantly affects costs and performance. More context improves quality but increases token usage and latency. Finding the right balance requires experimentation with actual use cases.

Integrate Tools and APIs

Tool integration is where agents provide real business value. The agent can now act on behalf of users, not just provide information.

Structured tool definitions improve reliability. OpenAI's function calling feature, for example, allows defining tools with typed parameters and descriptions. The model then generates properly formatted calls that can be executed programmatically.
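As a sketch of what a typed tool definition and its programmatic execution can look like: the dictionary below follows the JSON Schema style used for function tools in OpenAI's Chat Completions API, but the exact request shape should be checked against current provider documentation. The `get_order_status` tool, its parameter, and the registry are hypothetical, and no API call is made here.

```python
import json
from typing import Callable, Dict

# Tool description the model would see (hypothetical tool, assumed schema shape).
ORDER_STATUS_TOOL = {
    "type": "function",
    "function": {
        "name": "get_order_status",
        "description": "Look up the shipping status of an order.",
        "parameters": {
            "type": "object",
            "properties": {
                "order_id": {"type": "string", "description": "Order identifier"},
            },
            "required": ["order_id"],
        },
    },
}

def get_order_status(order_id: str) -> str:
    return f"Order {order_id}: shipped"   # placeholder for a real lookup

REGISTRY: Dict[str, Callable[..., str]] = {"get_order_status": get_order_status}

def execute_tool_call(name: str, arguments_json: str) -> str:
    """Run a model-generated call: the arguments arrive as a JSON string."""
    arguments = json.loads(arguments_json)
    return REGISTRY[name](**arguments)

print(execute_tool_call("get_order_status", '{"order_id": "A17"}'))
# Order A17: shipped
```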

Error handling matters immensely here. APIs fail. Databases become unavailable. Network requests time out. Robust agents anticipate these failures and respond appropriately: retrying when sensible, falling back to alternatives when available, or clearly communicating limitations when necessary.

Test and Iterate

Agent behavior emerges from the interaction between model, prompts, tools, and data. Small changes can have outsized effects. Systematic testing reveals these dynamics.

Create a test suite covering common scenarios, edge cases, and known failure modes. Run this suite after each modification to catch regressions.
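A regression suite for an agent can start as a list of input and expected-outcome pairs. In this sketch, `toy_agent` is a placeholder so the harness runs on its own, and the `ANSWER`/`ESCALATE` labels are assumptions about how outcomes might be categorized.

```python
from typing import Callable, List, Tuple

def toy_agent(message: str) -> str:
    """Placeholder agent; swap in a call to the real agent."""
    if "refund" in message.lower():
        return "ESCALATE"
    return "ANSWER"

# Common scenario, known failure mode, and an edge case (empty input).
CASES: List[Tuple[str, str]] = [
    ("How do I export a report?", "ANSWER"),
    ("I want a refund", "ESCALATE"),
    ("", "ANSWER"),
]

def run_suite(agent: Callable[[str], str], cases: List[Tuple[str, str]]) -> List[str]:
    """Return a description of every failing case; an empty list means all passed."""
    failures = []
    for message, expected in cases:
        actual = agent(message)
        if actual != expected:
            failures.append(f"{message!r}: expected {expected}, got {actual}")
    return failures

print(run_suite(toy_agent, CASES))  # []
```

Because model outputs vary, real suites usually assert on categories or structured fields like these rather than on exact response text.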

Real-world testing provides different insights than synthetic tests. Beta users discover novel interaction patterns and surface assumptions baked into the design. Plan for multiple iteration cycles based on actual usage feedback.

Design Patterns from Research

Academic research on agent architectures has identified recurring design patterns that solve common challenges. The Agent Design Pattern Catalogue (Liu et al., arXiv, May 2024) offers architectural guidance for foundation model-based agents: the authors present a catalogue of 17 architectural patterns that address key design challenges.

The Reflection pattern involves having the agent critique its own outputs before finalizing responses. This self-evaluation step catches logical errors and improves output quality, though it increases token usage and latency.
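One way to picture the pattern is a draft, critique, revise loop. This is a simplified sketch rather than the catalogue's formal definition: the three callables are stand-ins for model calls, and the currency check is invented for the example.

```python
from typing import Callable, Optional

def reflect(
    draft_fn: Callable[[str], str],
    critique_fn: Callable[[str], Optional[str]],
    revise_fn: Callable[[str, str], str],
    task: str,
    max_rounds: int = 2,
) -> str:
    """Draft an answer, then critique and revise it until no problem is found."""
    answer = draft_fn(task)
    for _ in range(max_rounds):          # each round costs extra tokens and latency
        problem = critique_fn(answer)
        if problem is None:
            break
        answer = revise_fn(answer, problem)
    return answer

# Stand-ins for model calls (assumptions for illustration).
def draft(task: str) -> str:
    return "Total: 12"

def critique(answer: str) -> Optional[str]:
    return None if answer.endswith("USD") else "missing currency"

def revise(answer: str, problem: str) -> str:
    return answer + " USD"

print(reflect(draft, critique, revise, "sum the invoice"))  # Total: 12 USD
```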

The Tool Selection pattern addresses how agents choose among multiple available tools. Approaches range from simple rule-based selection to learned policies that predict which tool will most likely advance toward the goal.

The Memory Retrieval pattern determines how agents surface relevant past information. Semantic search over vector embeddings provides one approach. Structured database queries offer another. Hybrid methods combine both.

Multi-Agent Patterns

Some problems benefit from multiple specialized agents collaborating. Research on architecting agentic communities using design patterns suggests governance structures for such systems.

The Supervisor pattern designates one agent as coordinator, delegating subtasks to specialist agents and integrating results. This mirrors human team structures and scales better than having a single agent handle everything.
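A stripped-down sketch of the coordinator role follows. The specialist names and the fixed list of subtasks are assumptions; in a real system the supervisor would typically use a model to produce the subtasks and to integrate the results.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical specialist agents, each reduced to a function for illustration.
SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "research": lambda task: f"notes on {task}",
    "writing": lambda task: f"draft about {task}",
}

def supervisor(subtasks: List[Tuple[str, str]]) -> str:
    """Delegate each (role, task) pair to a specialist and combine the results."""
    results = []
    for role, task in subtasks:
        worker = SPECIALISTS.get(role)
        if worker is None:
            results.append(f"[no specialist for {role}]")
            continue
        results.append(worker(task))
    return "; ".join(results)

print(supervisor([("research", "pricing"), ("writing", "pricing")]))
# notes on pricing; draft about pricing
```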

The Debate pattern has multiple agents propose solutions, critique each other's proposals, and converge toward consensus. This adversarial approach can surface flaws in reasoning that single-agent systems miss.

According to NIST's AI Agent Standards Initiative announced on February 17, 2026, establishing standards for agent interoperability and security represents a critical priority as these systems become more prevalent.

Practical Implementation Tips

Real-world agent development surfaces challenges that aren't obvious from tutorials or documentation. These practices help navigate common pitfalls.

Start with Narrow Scope

Resist the temptation to build an agent that does everything. Focused agents that excel at specific tasks outperform generalists attempting to handle all scenarios. Scope can expand after core functionality proves reliable.

Invest in Observability

Agents make decisions autonomously. Understanding why an agent took a particular action becomes crucial for debugging and improvement. Log reasoning steps, tool calls, and decision points. This telemetry proves invaluable during troubleshooting.
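A lightweight way to capture this telemetry is to wrap every tool in a tracing decorator. The sketch below records events in an in-memory list for simplicity; the `traced` decorator, the `TRACE` list, and the `lookup_price` tool are all hypothetical names, and a real deployment would ship these events to a logging or tracing backend.

```python
import functools
import json
import time
from typing import Any, Callable, Dict, List

TRACE: List[Dict[str, Any]] = []   # in-memory event store (assumption for the sketch)

def traced(tool_name: str) -> Callable:
    """Decorator that records arguments, outcome, and duration of each tool call."""
    def wrap(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def inner(*args: Any, **kwargs: Any) -> Any:
            start = time.perf_counter()
            event: Dict[str, Any] = {"tool": tool_name, "args": list(args), "kwargs": kwargs}
            try:
                result = fn(*args, **kwargs)
                event["status"] = "ok"
                return result
            except Exception as exc:
                event["status"] = "error"
                event["error"] = repr(exc)
                raise
            finally:
                event["duration_s"] = round(time.perf_counter() - start, 4)
                TRACE.append(event)
        return inner
    return wrap

@traced("lookup_price")
def lookup_price(sku: str) -> float:
    prices = {"A1": 9.5}
    return prices[sku]

lookup_price("A1")
print(json.dumps(TRACE[-1]))
```

Failed calls are recorded too, since the `finally` clause runs before the exception propagates.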

Implement Guardrails

Autonomous systems need constraints. Define what actions are never acceptable. Implement checks that prevent harmful behaviors. According to NIST's AI Risk Management Framework, establishing trust in AI technologies requires proactive risk mitigation.

Content filtering prevents generating harmful outputs. Rate limiting prevents runaway tool usage. Budget caps prevent unexpected costs. Human approval requirements for high-stakes actions provide safety nets.
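Several of these guardrails reduce to a check performed before each action. A minimal sketch, with invented class and action names and arbitrary limits, covering a tool-call limit, a budget cap, and human approval for high-stakes actions:

```python
from typing import Set

class GuardrailError(Exception):
    """Raised when an action would violate a configured limit."""

class Guardrails:
    def __init__(self, max_tool_calls: int, budget_usd: float, needs_approval: Set[str]) -> None:
        self.max_tool_calls = max_tool_calls
        self.budget_usd = budget_usd
        self.needs_approval = needs_approval
        self.calls = 0
        self.spent_usd = 0.0

    def authorize(self, action: str, cost_usd: float, approved: bool = False) -> None:
        """Raise GuardrailError if the action is not allowed; otherwise record it."""
        if self.calls >= self.max_tool_calls:
            raise GuardrailError("tool-call limit reached")
        if self.spent_usd + cost_usd > self.budget_usd:
            raise GuardrailError("budget cap exceeded")
        if action in self.needs_approval and not approved:
            raise GuardrailError("human approval required")
        self.calls += 1
        self.spent_usd += cost_usd

rails = Guardrails(max_tool_calls=3, budget_usd=1.0, needs_approval={"issue_refund"})
rails.authorize("search_docs", cost_usd=0.25)
try:
    rails.authorize("issue_refund", cost_usd=0.25)
except GuardrailError as err:
    print(err)  # human approval required
```

Keeping these checks outside the model, in ordinary code, means they hold even when the prompt is ignored or overridden.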

Handle Errors Gracefully

Everything fails eventually. Networks become unavailable. APIs return errors. Models produce malformed outputs. Robust agents anticipate these failures and respond appropriately rather than crashing or failing silently.

Clear error messages help users understand what went wrong and what they might do differently. Automatic retries with exponential backoff handle transient failures. Fallback strategies provide degraded functionality when preferred approaches fail.
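Retries with exponential backoff fit in a few lines. In this sketch the choice of `ConnectionError` as the transient failure, the default delays, and the injectable `sleep` parameter (which keeps the example fast and testable) are all assumptions; production code often adds jitter and a maximum delay as well.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")

def retry_with_backoff(
    call: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry on ConnectionError, doubling the wait after each failed attempt."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts - 1):
        try:
            return call()
        except ConnectionError:
            sleep(base_delay * 2 ** attempt)   # 0.5s, 1s, 2s, ...
    return call()  # final attempt: let any exception propagate to the caller

# Simulated flaky dependency that succeeds on the third call.
calls = {"n": 0}

def flaky() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("temporary outage")
    return "ok"

delays: List[float] = []
print(retry_with_backoff(flaky, sleep=delays.append), delays)  # ok [0.5, 1.0]
```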

Optimize for Cost and Latency

Production agents process thousands or millions of interactions. Token costs accumulate quickly. Latency impacts user experience significantly.

Prompt engineering reduces unnecessary verbosity. Caching frequently-used results avoids redundant processing. Smaller models handle routine tasks, reserving expensive models for complex reasoning. Streaming responses improve perceived responsiveness.
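Caching and model routing can be combined in a thin layer in front of the model call. The sketch below is illustrative only: the keyword heuristic is deliberately crude, the model names are placeholders, and the returned string stands in for a real model response.

```python
from functools import lru_cache

def choose_model(prompt: str, long_prompt_chars: int = 400) -> str:
    """Route to a larger model only when the prompt looks like it needs reasoning."""
    needs_reasoning = any(word in prompt.lower() for word in ("plan", "analyze", "compare"))
    if needs_reasoning or len(prompt) > long_prompt_chars:
        return "large-model"    # placeholder name
    return "small-model"        # placeholder name

CALLS = {"count": 0}   # counts uncached invocations, for demonstration

@lru_cache(maxsize=1024)
def cached_answer(prompt: str) -> str:
    CALLS["count"] += 1
    # A real implementation would call the chosen model here.
    return f"[{choose_model(prompt)}] answer to: {prompt}"

cached_answer("What are your opening hours?")
cached_answer("What are your opening hours?")   # served from the cache
print(CALLS["count"])  # 1
```

Exact-match caching only helps with repeated identical prompts; whether that is common enough to matter depends on the workload.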

Move From AI Agent Idea To Working Implementation

Most guides explain how to create an AI agent, but skip what makes it usable in a real product. Defining how it fits into user flows, structuring backend logic, and making it reliable under real conditions is where things usually get difficult.

OSKI Solutions works with companies developing AI-based functionality within their products or internal systems. This includes backend development, API structure, and integrating AI-driven services (C#, Python) into existing stacks like .NET or Node.js, with deployment across Azure or AWS. The focus is on making AI agents stable, maintainable, and aligned with how the product actually works.

If you're building an AI agent and need it to function properly in a real product or workflow, contact OSKI Solutions and discuss your setup.


Monitoring and Maintenance

Deployment isn't the finish line. Agents require ongoing monitoring and maintenance to remain effective as usage patterns evolve and external systems change.

Track key metrics: success rate, average latency, token usage, error frequency, user satisfaction scores. Establish baselines and set up alerts for significant deviations.
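As an illustration of baselines and alerts, the sketch below aggregates a batch of interactions and flags metrics that drift more than a tolerance from a baseline. The field names, the 20% tolerance, and the baseline values are assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List

@dataclass
class Interaction:
    success: bool
    latency_s: float
    tokens: int

def summarize(interactions: List[Interaction]) -> Dict[str, float]:
    return {
        "success_rate": mean(1.0 if i.success else 0.0 for i in interactions),
        "avg_latency_s": mean(i.latency_s for i in interactions),
        "avg_tokens": mean(i.tokens for i in interactions),
    }

def find_alerts(current: Dict[str, float], baseline: Dict[str, float],
                tolerance: float = 0.2) -> List[str]:
    """Flag metrics that moved in the wrong direction by more than the tolerance."""
    alerts = []
    if current["success_rate"] < baseline["success_rate"] * (1 - tolerance):
        alerts.append("success_rate dropped")
    for key in ("avg_latency_s", "avg_tokens"):
        if current[key] > baseline[key] * (1 + tolerance):
            alerts.append(f"{key} increased")
    return alerts

baseline = {"success_rate": 0.95, "avg_latency_s": 1.0, "avg_tokens": 800}
today = summarize([Interaction(True, 1.0, 700), Interaction(False, 2.0, 900)])
print(find_alerts(today, baseline))
# ['success_rate dropped', 'avg_latency_s increased']
```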

Usage patterns shift over time. Queries that were rare initially might become common. New edge cases emerge. Regular review of interaction logs surfaces these trends and informs improvement priorities.

Model updates from providers can change behavior in subtle ways. After updating to a new model version, regression testing ensures critical functionality remains intact.

Common Challenges and Solutions

Several challenges appear consistently across agent implementations. Recognizing these patterns helps address them efficiently.

Context Window Limitations

Even large context windows fill up during complex multi-step tasks. The agent accumulates tool outputs, intermediate reasoning, and conversation history until the context limit is reached.

Solutions include summarizing older context periodically, storing details in external memory and retrieving only relevant portions, and breaking large tasks into smaller sub-tasks with fresh context for each.
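The first of these solutions, periodic summarization, can be sketched as follows. Word count is used as a crude token estimate and the `summarize` callable is a stand-in for a model call; both are assumptions made to keep the example self-contained.

```python
from typing import Callable, List

def estimate_tokens(text: str) -> int:
    """Crude proxy: one token per whitespace-separated word."""
    return len(text.split())

def compact_history(
    messages: List[str],
    max_tokens: int,
    summarize: Callable[[List[str]], str],
    keep_recent: int = 2,
) -> List[str]:
    """Replace older messages with a summary once the history exceeds the budget."""
    total = sum(estimate_tokens(m) for m in messages)
    split = max(0, len(messages) - keep_recent)
    older, recent = messages[:split], messages[split:]
    if total <= max_tokens or not older:
        return list(messages)
    return [summarize(older)] + recent

history = ["a b c", "d e f", "g h", "i j"]
compacted = compact_history(
    history,
    max_tokens=5,
    summarize=lambda older: f"summary of {len(older)} earlier messages",
)
print(compacted)  # ['summary of 2 earlier messages', 'g h', 'i j']
```

This sketch does not verify that the summary itself fits the budget; a real implementation would need to bound its length.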

Tool Calling Reliability

Models sometimes generate malformed tool calls with incorrect parameters or non-existent function names. This happens more frequently with smaller models or when tool descriptions are unclear.

Improving tool descriptions with explicit examples reduces errors. Input validation catches malformed calls before execution. Providing error feedback to the agent allows self-correction attempts.
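Input validation and error feedback can share one function: validate the call, and if anything is wrong, return messages that can be passed back to the model for another attempt. The schema format here (parameter name mapped to a Python type) and the `search` tool are simplifications invented for the sketch.

```python
from typing import Any, Dict, List

# Hypothetical schemas: tool name -> {parameter name: expected Python type}.
TOOL_SCHEMAS: Dict[str, Dict[str, type]] = {
    "search": {"query": str, "limit": int},
}

def validate_call(name: str, args: Dict[str, Any],
                  schemas: Dict[str, Dict[str, type]]) -> List[str]:
    """Return a list of problems; an empty list means the call is safe to execute."""
    if name not in schemas:
        return [f"unknown tool '{name}'"]
    spec = schemas[name]
    errors = []
    for param, expected in spec.items():
        if param not in args:
            errors.append(f"missing parameter '{param}'")
        elif not isinstance(args[param], expected):
            errors.append(
                f"parameter '{param}' should be {expected.__name__}, "
                f"got {type(args[param]).__name__}"
            )
    for param in args:
        if param not in spec:
            errors.append(f"unexpected parameter '{param}'")
    return errors

print(validate_call("search", {"query": "pricing", "limit": "3"}, TOOL_SCHEMAS))
# ["parameter 'limit' should be int, got str"]
```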

Hallucination in Tool Outputs

Agents occasionally fabricate tool results rather than actually calling tools. The model "imagines" what a search result might contain instead of executing the search.

Strictly enforcing the tool-calling protocol helps. The system should refuse to proceed without actual tool execution. Clear prompting that distinguishes planning from execution reduces confusion.

Excessive Tool Usage

Some agents develop patterns of calling tools unnecessarily, driving up costs and latency. The agent might search repeatedly for information already in context or try every tool sequentially.

Three measures improve efficiency: requiring explicit reasoning steps before tool calls, prompting the agent to consider the information already available before seeking more, and setting usage budgets that force prioritization as limits approach.

Security and Compliance Considerations

Agents interact with external systems and data, raising security implications that require careful attention. Research on agentic communities emphasizes governance patterns including access control, audit trails, and compliance monitoring.

Principle of least privilege applies: grant agents only the minimum permissions needed for their tasks. An agent booking meeting rooms doesn't need access to financial systems.

Input validation prevents prompt injection attacks where users attempt to override system instructions or access unauthorized data. Treating user inputs as untrusted data and sanitizing appropriately provides defense.

Audit logging tracks what actions agents take on whose behalf. This traceability proves essential for compliance requirements and incident investigation.
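Least privilege and audit logging pair naturally: every permission check can also write an audit record. In the sketch below the agent name, the permission strings, and the in-memory log are hypothetical; a real system would use its existing identity and logging infrastructure.

```python
from typing import Dict, List, Set

# Hypothetical allowlist: each agent gets only the permissions its task needs.
AGENT_PERMISSIONS: Dict[str, Set[str]] = {
    "room_booker": {"calendar.read", "rooms.book"},
}
AUDIT_LOG: List[Dict[str, str]] = []

def perform(agent: str, user: str, permission: str) -> bool:
    """Check the allowlist and record the decision, whether allowed or denied."""
    allowed = permission in AGENT_PERMISSIONS.get(agent, set())
    AUDIT_LOG.append({
        "agent": agent,
        "on_behalf_of": user,
        "permission": permission,
        "decision": "allowed" if allowed else "denied",
    })
    return allowed

print(perform("room_booker", "alice", "rooms.book"))     # True
print(perform("room_booker", "alice", "finance.read"))   # False
print(AUDIT_LOG[-1]["decision"])                         # denied
```

Logging denied attempts as well as allowed ones is what makes the trail useful for incident investigation.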

Data handling follows regulations like GDPR or HIPAA depending on the domain. Agents must not expose sensitive information inappropriately or retain data longer than permitted.

Future Considerations

The agent landscape continues evolving rapidly. Several trends shape where the technology heads next.

Multimodal agents that handle images, audio, and video alongside text expand use cases significantly. Agents that can watch screen recordings to debug issues or analyze product images to suggest solutions unlock new capabilities.

Longer-running agents that pursue goals over hours or days rather than single conversational turns enable more ambitious applications. These systems require more sophisticated planning and error recovery mechanisms.

Standards for agent interoperability would allow agents from different vendors to collaborate seamlessly. NIST's AI Agent Standards Initiative works toward this vision, though widespread adoption remains years away.

Improved reasoning capabilities in foundation models will reduce the engineering effort needed for complex agent behaviors. As models get better at planning and tool use, less scaffolding code is required.

FAQ

Do I need coding skills to create AI agents?

Not necessarily. No-code platforms like n8n, OpenAI GPT builder, and Google Vertex AI Agent Builder allow you to create agents visually. However, coding provides more flexibility for complex solutions.

What's the difference between an AI agent and a chatbot?

Chatbots respond to user input but remain passive. AI agents act autonomously, break tasks into steps, use tools, and adapt strategies to achieve goals with minimal human input.

How much does it cost to run an AI agent?

Costs depend on model usage, complexity, and integrations. API usage typically dominates costs, ranging from very small amounts per request to higher costs for complex reasoning. Infrastructure adds to the total.

Which framework should I choose for building agents?

LangChain is widely used for general-purpose agents. LlamaIndex is strong for knowledge retrieval, Semantic Kernel fits Microsoft ecosystems, and AutoGPT is suited for experimental autonomous workflows.

How do I prevent my agent from taking unwanted actions?

Use safeguards like clear instructions, input validation, limited permissions, approval workflows, rate limiting, and logging. Thorough testing helps identify risks before deployment.

Can agents work with proprietary company data?

Yes. Using retrieval-augmented generation (RAG), agents can securely access indexed company data while respecting access controls and permissions.

How long does it take to build a functional AI agent?

Simple agents can be built in hours using no-code tools. More advanced solutions typically take weeks, while enterprise systems may require months depending on complexity and integrations.

Conclusion

Creating effective AI agents combines foundation models, thoughtful architecture, appropriate tooling, and iterative refinement. The technology has matured beyond experimentation into production viability for many use cases.

Success comes from starting focused, testing with real scenarios early, and building toward complexity gradually. Both no-code platforms and programming frameworks provide viable paths depending on requirements and available expertise.

The agent ecosystem continues to evolve rapidly. Standards are emerging, capabilities are expanding, and costs are declining. Now is an opportune moment to begin building.

Start small. Define a specific problem where autonomous action provides clear value. Build a minimal prototype. Test with real users. Iterate based on feedback. Expand scope as core functionality proves reliable.

The shift from passive AI assistants to proactive agents changes what's possible. Organizations that master agent development gain substantial competitive advantages. The question isn't whether to build agents, but which problems to tackle first.