How Do AI Agents Work? The Complete Guide (2026)

Quick Summary: AI agents are autonomous software systems that use artificial intelligence to perceive their environment, make decisions, and complete tasks with minimal human intervention. Unlike chatbots that respond to prompts, AI agents can plan multi-step workflows, use tools, learn from experience, and pursue goals independently—making them capable of handling complex business processes from customer service to data analysis.

The shift from generative AI to agentic AI represents one of the most significant leaps in artificial intelligence development. Where large language models respond to prompts and generate content, AI agents actually do things.

They don't just answer questions about scheduling. They look at your calendar, check availability across multiple attendees, book the conference room, and send meeting invites.

But how do these systems actually work? What's happening under the hood when an AI agent autonomously completes a complex task?

This guide breaks down the architecture, reasoning paradigms, and operational mechanisms that power modern AI agents.

What Makes AI Agents Different from Traditional AI

AI agents represent a fundamental shift in how artificial intelligence systems operate. Traditional AI tools—even sophisticated ones like ChatGPT—are reactive. You ask, they answer. The interaction ends.

According to MIT Sloan research, AI agents are semi- or fully autonomous systems able to perceive, reason, and act on their own. They're proactive rather than reactive.

Here's what sets them apart:

- Autonomy: Agents can pursue goals without constant human guidance. Once given an objective, they determine how to achieve it.

- Environmental awareness: They perceive and interact with their environment—whether that's a database, web browser, or physical space through robotics.

- Goal-oriented behavior: Instead of responding to individual queries, agents work toward defined objectives that may require multiple steps.

- Tool use: They can access and manipulate external tools, APIs, databases, and software systems to complete tasks.

The National Institute of Standards and Technology (NIST) launched the AI Agent Standards Initiative in February 2026 to establish frameworks for interoperable and secure agentic systems, recognizing their growing importance in the AI ecosystem.

| Capability | Traditional AI/Chatbots | AI Agents |
|---|---|---|
| Interaction Model | Reactive (responds to prompts) | Proactive (pursues goals) |
| Task Scope | Single-turn or conversation | Multi-step workflows |
| Tool Access | Limited or none | Extensive (APIs, databases, software) |
| Decision Making | Response generation | Planning and reasoning |
| Memory | Conversation context only | Long-term memory across sessions |
| Autonomy | None (waits for input) | High (acts independently) |

The Core Architecture: How AI Agents Actually Work

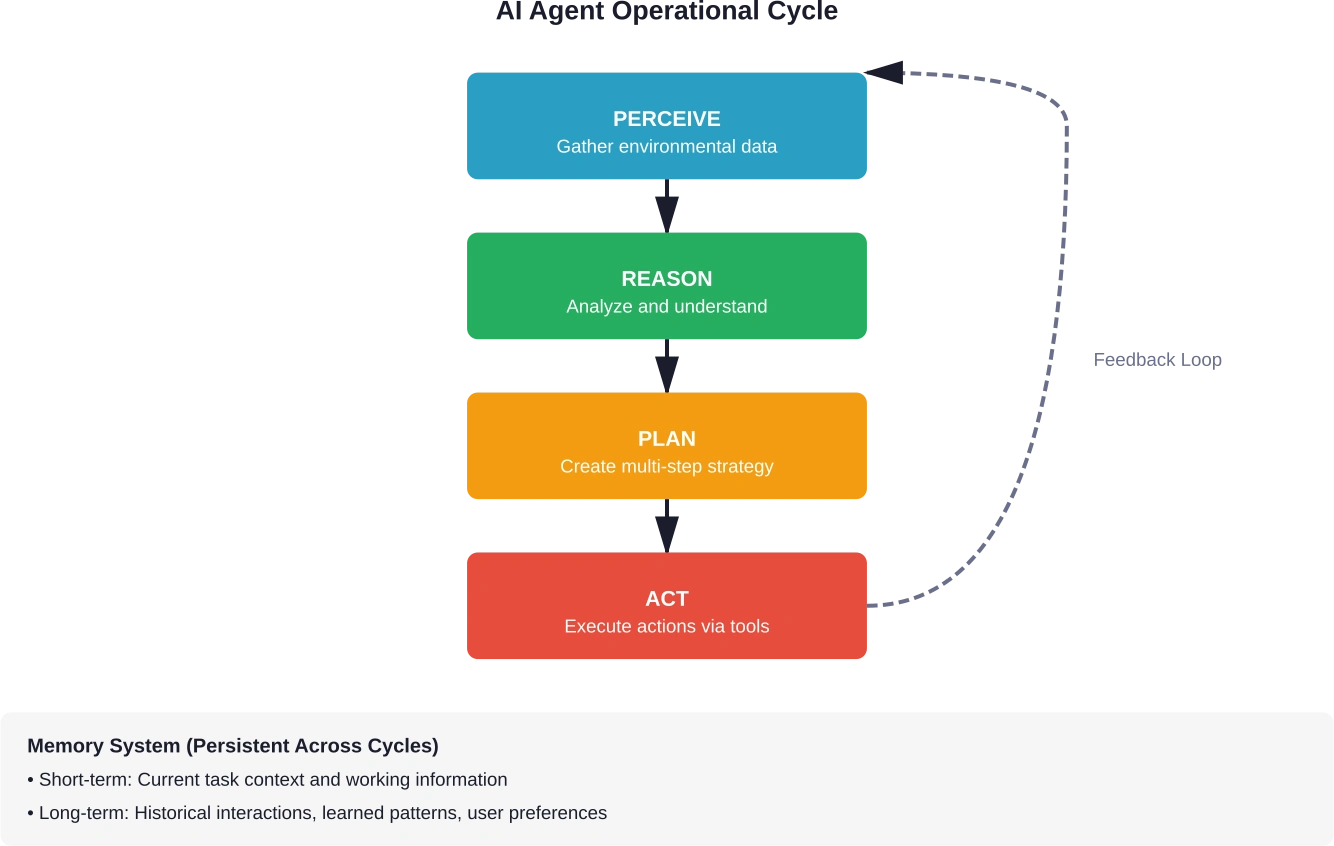

AI agents operate through a sophisticated architecture that combines perception, reasoning, planning, and action. Understanding this architecture reveals how these systems achieve autonomous behavior.

Perception: Gathering Information from the Environment

The first step in any agent's operation is perception. Agents must understand their environment before they can act on it.

Modern AI agents use foundation models—particularly multimodal ones—to process diverse inputs. According to Google DeepMind's Gemini Robotics 1.5 announcement from September 2025, advanced agents can now perceive, plan, think, use tools, and act to solve complex, multi-step tasks.

Perception mechanisms include:

- Natural language processing: Understanding text commands, documents, emails, and conversations

- Vision capabilities: Analyzing images, screenshots, UI elements, and physical environments

- Data parsing: Reading structured data from APIs, databases, and file systems

- Sensor input: For robotic agents, processing camera feeds, lidar, and other sensors

The multimodal capacity of modern foundation models enables agents to process information across formats simultaneously—reading a document while analyzing accompanying charts, for example.

Reasoning: Making Sense of Information

Once an agent perceives its environment, it must reason about what that information means and what actions might be appropriate.

Reasoning in AI agents typically involves:

- Contextual understanding: Interpreting information within the broader context of the goal and environment.

- Inference: Drawing conclusions from available data, filling gaps through logical deduction.

- Constraint recognition: Identifying limitations, rules, and boundaries that govern possible actions.

Research published on arXiv on January 27, 2026 (arXiv:2601.19752) describes agentic design patterns using system-theoretic frameworks, highlighting how agents structure reasoning processes to maintain coherence across complex decision chains.

The reasoning component determines whether the agent has enough information to proceed, what additional data it needs, and which potential actions align with its goals.

Planning: Breaking Down Complex Goals

Planning is where agents demonstrate their sophistication. Instead of executing single actions, they create multi-step workflows to achieve objectives.

Agent planning involves:

- Goal decomposition: Breaking high-level objectives into manageable subtasks

- Sequencing: Determining the optimal order for executing steps

- Resource allocation: Deciding which tools and systems to use for each step

- Contingency planning: Preparing alternative approaches if initial plans fail

Agents don't merely consult large language models for answers; they use LLMs as reasoning engines to generate and evaluate plans dynamically.

Planning isn't static. Agents continuously reassess their plans based on feedback from the environment, adjusting strategies when circumstances change.
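
Goal decomposition and sequencing can be sketched as a small dependency graph. The example below is a minimal illustration, not how any particular agent framework plans: the subtask names are hypothetical, and in a real agent the graph would typically come from a model call rather than being written by hand. It uses Python's standard `graphlib` module to produce an execution order that respects dependencies.

```python
from graphlib import TopologicalSorter

# Hypothetical subtasks for the goal "schedule a team meeting".
# Each key maps to the set of subtasks that must finish first.
subtasks = {
    "read_calendars": set(),
    "find_common_slot": {"read_calendars"},
    "book_room": {"find_common_slot"},
    "send_invites": {"find_common_slot", "book_room"},
}


def sequence(plan: dict[str, set[str]]) -> list[str]:
    """Return one execution order that respects every dependency."""
    return list(TopologicalSorter(plan).static_order())


order = sequence(subtasks)
print(order)
```

Replanning then amounts to editing the graph (adding, removing, or rewiring subtasks) and sequencing again.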

Memory: Learning and Context Retention

Memory systems distinguish sophisticated agents from simple automation. Agents maintain both short-term and long-term memory to improve performance over time.

Short-term memory holds working information for the current task—the context needed to complete ongoing workflows. This might include intermediate results, tool outputs, or conversation history relevant to the immediate goal.

Long-term memory persists across sessions. It stores learned patterns, user preferences, successful strategies, and historical interactions. This enables agents to improve through experience.

According to the Agent Design Pattern Catalogue published on arXiv (May 2024), memory architectures often include vector databases that enable semantic search across past experiences, allowing agents to retrieve relevant historical information when facing similar situations.
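
To make the retrieval idea concrete, here is a toy long-term memory that ranks stored notes by cosine similarity to a query. It is a sketch under heavy assumptions: the `embed` function is a bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and the class and method names are invented for illustration.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts (a stand-in for a real embedding model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class LongTermMemory:
    def __init__(self) -> None:
        self._items: list[tuple[Counter, str]] = []

    def remember(self, text: str) -> None:
        self._items.append((embed(text), text))

    def recall(self, query: str, k: int = 1) -> list[str]:
        """Return the k stored notes most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


memory = LongTermMemory()
memory.remember("user prefers meetings after 10am")
memory.remember("refund requests over $500 need manager approval")
memory.remember("weekly report is due every friday")

print(memory.recall("when does the user like meetings"))
```

A word-count embedding only matches exact words; a learned embedding is what lets real systems retrieve notes that are related in meaning rather than in spelling.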

Action: Executing Tasks Through Tool Use

The final component is action—where agents actually do things in the world.

Actions are executed through tool use. Modern agents can interact with:

- APIs and web services

- Database systems for reading and writing data

- Software applications through UI automation

- Code execution environments

- Communication platforms (email, messaging, notifications)

- Physical actuators in robotic systems

Google DeepMind's Project Mariner, a research prototype exploring human-agent interaction, demonstrates how agents can automate multiple tasks simultaneously in browsers—handling research, planning, and data entry through natural language instructions.

Tool use isn't hardcoded. Agents receive tool descriptions (often in natural language or structured formats) and choose which ones to invoke based on their reasoning about which tools will help achieve current goals.
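
A minimal sketch of that idea: each tool carries a description for the model to read and a function for the runtime to execute. Everything here is illustrative. The tool names, the stubbed functions, and the `{"tool": ..., "args": ...}` call format are assumptions, not the interface of any specific framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    name: str
    description: str         # what the model reads when choosing a tool
    run: Callable[..., str]  # what the runtime executes


def get_weather(city: str) -> str:
    return f"Sunny in {city}"  # stub; a real tool would call an API


def send_email(to: str, body: str) -> str:
    return f"Email queued for {to}"  # stub


TOOLS = {
    t.name: t
    for t in [
        Tool("get_weather", "Look up the current weather for a city.", get_weather),
        Tool("send_email", "Send an email to one recipient.", send_email),
    ]
}


def describe_tools() -> str:
    """Text placed in the model's prompt so it can pick a tool by name."""
    return "\n".join(f"- {t.name}: {t.description}" for t in TOOLS.values())


def dispatch(call: dict) -> str:
    """Execute a model-produced call such as {'tool': ..., 'args': {...}}."""
    tool = TOOLS.get(call["tool"])
    if tool is None:
        return f"Unknown tool: {call['tool']}"
    return tool.run(**call["args"])


print(describe_tools())
print(dispatch({"tool": "get_weather", "args": {"city": "Oslo"}}))
```

Adding a capability means registering another `Tool`; the dispatch logic stays the same, which is the sense in which tool use is not hardcoded.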

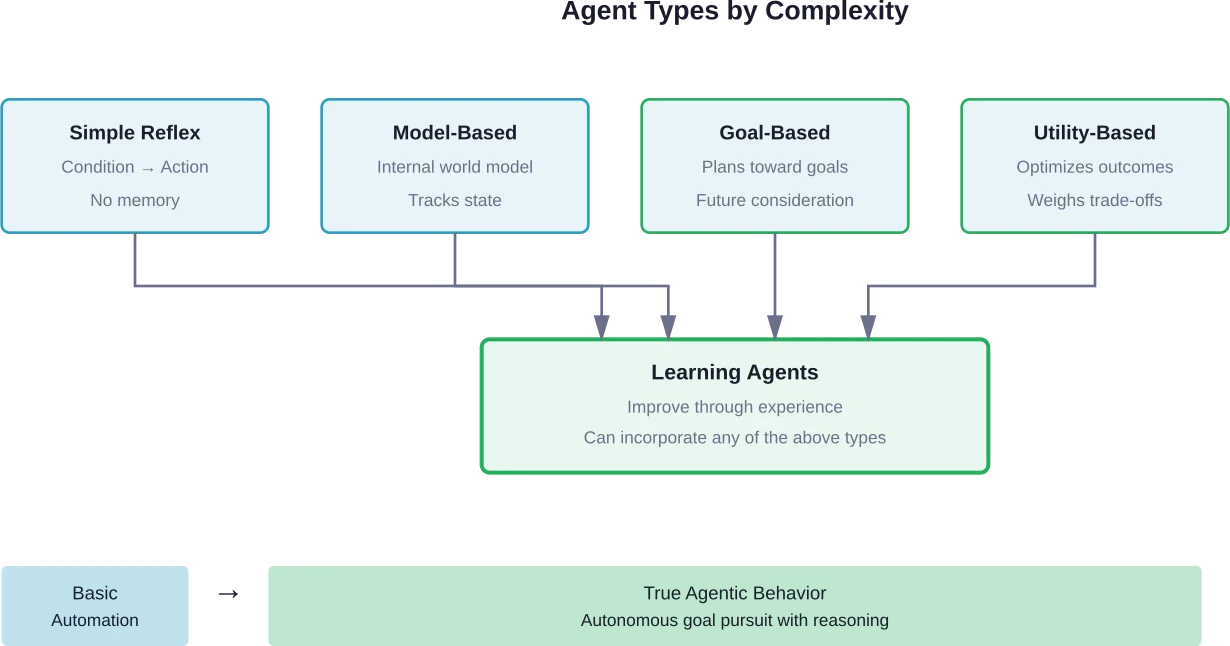

Types of AI Agents and Their Operational Differences

Not all agents work the same way. Different agent types employ distinct operational approaches based on their design and purpose.

Simple Reflex Agents

These basic agents operate on condition-action rules. They perceive the current state and select actions based on matching rules, without considering history or future consequences.

Simple reflex agents work well in fully observable environments where the correct action depends only on current perception. Think of a thermostat that turns heating on when temperature drops below a threshold.
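
The thermostat case fits in a few lines. This sketch assumes a single temperature reading and a hypothetical default threshold of 20 °C; the action names are made up.

```python
def thermostat(temperature_c: float, threshold_c: float = 20.0) -> str:
    """Condition-action rule: act on the current reading only, with no history."""
    if temperature_c < threshold_c:
        return "heat_on"
    return "heat_off"


for reading in (17.5, 20.0, 22.3):
    print(reading, thermostat(reading))
```

Note that the function keeps no state between calls, which is exactly what limits reflex agents to environments where the current reading is enough.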

Model-Based Agents

Model-based agents maintain an internal model of how their environment works. This allows them to handle partially observable environments where current perception doesn't reveal everything.

They update their internal model based on actions taken and new perceptions, enabling more sophisticated decision-making even when information is incomplete.

Goal-Based Agents

Goal-based agents explicitly represent desired outcomes and use planning to determine action sequences that achieve those goals. They can consider future consequences of actions.

These agents answer the question: "Will this action help me reach my goal?" They're more flexible than reflex agents because they can adapt to new goals without reprogramming their rules.

Utility-Based Agents

Utility-based agents don't just achieve goals—they optimize for the best way to achieve them. They assign utility values to different states and choose actions that maximize expected utility.

When multiple action sequences could achieve a goal, utility-based agents select the most efficient, cost-effective, or otherwise optimal approach.
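
Choosing by expected utility can be illustrated with a small table of options. The delivery scenario, probabilities, and utility values below are all invented for the example; the only point is the selection rule, which weights each outcome's utility by its probability and picks the highest total.

```python
# Hypothetical delivery options. Each action lists possible outcomes
# as (probability, utility) pairs. All numbers are made up.
actions = {
    "courier": [(0.9, 8.0), (0.1, -5.0)],
    "drone": [(0.6, 10.0), (0.4, -4.0)],
    "standard_mail": [(1.0, 5.0)],
}


def expected_utility(outcomes: list[tuple[float, float]]) -> float:
    """Probability-weighted sum of outcome utilities."""
    return sum(p * u for p, u in outcomes)


def choose(options: dict[str, list[tuple[float, float]]]) -> str:
    """Pick the action with the highest expected utility."""
    return max(options, key=lambda name: expected_utility(options[name]))


for name, outcomes in actions.items():
    print(name, round(expected_utility(outcomes), 2))
print("chosen:", choose(actions))
```

All three options reach the goal of delivering the package; the utility function is what separates them. Here the drone has the best single outcome but the courier wins on expectation.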

Learning Agents

Learning agents improve their performance through experience. According to IEEE research on autonomous agents using reinforcement learning, these systems use feedback mechanisms to adjust their behavior based on outcomes.

They combine a learning element (which improves performance), a performance element (which selects actions), a critic (which provides feedback), and a problem generator (which suggests exploratory actions to improve learning).

Foundation Models: The Engine Behind Modern AI Agents

The recent explosion in capable AI agents owes much to foundation models—particularly large language models trained on vast datasets.

Foundation models provide agents with several critical capabilities:

- Natural language understanding: Agents can interpret complex instructions, understand nuanced requests, and process unstructured text data.

- Reasoning capabilities: LLMs can perform logical deduction, break down complex problems, and generate step-by-step solutions.

- Code generation: Many agents can write and execute code to solve computational problems or automate tasks.

- Contextual learning: Foundation models understand context across long documents and conversations, maintaining coherence in complex interactions.

The multimodal capacity of modern foundation models matters tremendously. According to research from MIT Sloan, in areas involving substantial option evaluation—like startup funding, college admissions, or B2B procurement—agents deliver value by reading reviews, analyzing metrics, and comparing attributes across options.

This requires processing text, numbers, images, and structured data simultaneously, which multimodal foundation models enable.

How Agents Use Foundation Models Differently Than Chatbots

Chatbots use foundation models to generate responses. Agents use them as reasoning engines.

When an agent needs to decide which tool to use, it might query the foundation model: "Given goal X and available tools Y, which tool should I use next?" The model generates reasoning about tool selection rather than content for a user.

Agents also use foundation models for:

- Generating plans by describing step-by-step approaches

- Evaluating outcomes by analyzing results against goals

- Adapting strategies when initial approaches fail

- Extracting information from unstructured sources

The foundation model becomes infrastructure rather than interface—a cognitive engine that powers decision-making rather than the end product users interact with.

Reasoning Paradigms That Power Agent Decisions

Different reasoning approaches enable agents to tackle different types of problems. Understanding these paradigms reveals how agents adapt their thinking to various challenges.

Chain-of-Thought Reasoning

Chain-of-thought reasoning breaks problems into sequential steps. Instead of jumping directly to an answer, the agent explicitly works through intermediate steps.

This approach improves performance on complex reasoning tasks by making the problem-solving process transparent and verifiable. If reasoning goes wrong, examining the chain reveals where errors occurred.

Tree-of-Thought Reasoning

Tree-of-thought extends chain-of-thought by exploring multiple reasoning paths simultaneously. Instead of following a single chain, the agent branches into different approaches and evaluates which paths look most promising.

This enables agents to backtrack from dead ends and explore alternative strategies without committing prematurely to a single approach.
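
One simple way to approximate this behaviour is a beam search over partial solutions. The sketch below uses a toy arithmetic puzzle (reach a target number using "+3" and "×2" steps) so that it runs without a model; in an actual agent, `expand` and `score` would be model calls that propose and rate next thoughts. Both function names and the puzzle are assumptions for illustration.

```python
from typing import Optional


def expand(path: list[int]) -> list[list[int]]:
    """Propose next thoughts: here, add 3 or double the current value."""
    value = path[-1]
    return [path + [value + 3], path + [value * 2]]


def score(path: list[int], target: int) -> int:
    """Rate a partial path: closer to the target is better."""
    return -abs(target - path[-1])


def tree_of_thought(
    start: int, target: int, beam_width: int = 2, max_depth: int = 5
) -> Optional[list[int]]:
    frontier = [[start]]
    for _ in range(max_depth):
        candidates = [child for path in frontier for child in expand(path)]
        for path in candidates:
            if path[-1] == target:
                return path
        # Keep only the most promising branches and drop the rest.
        candidates.sort(key=lambda p: score(p, target), reverse=True)
        frontier = candidates[:beam_width]
    return None


print(tree_of_thought(start=1, target=14))
```

In this run the branch that looks best after two steps (ending in 8) does not reach 14 on the next step, while the runner-up (ending in 7) does. Keeping more than one branch alive is what lets the search recover without having committed to the first promising path.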

Reflection and Self-Critique

Advanced agents can critique their own outputs. After generating a plan or taking actions, they evaluate the quality of their work and identify potential improvements.

According to Google DeepMind research on collective reasoning from November 2025, alignment between AI reasoning and human social reasoning becomes critical as agents increasingly augment collective decision-making.

Reflection mechanisms help agents catch errors, identify edge cases they haven't considered, and refine strategies before execution.
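
A reflection loop can be sketched as critique, then revise, repeated until the critic has nothing left to flag. In this illustration both roles are simple stubs: the critic checks a hypothetical list of required steps, and the reviser appends whatever is missing. In a real agent each would usually be a separate model call.

```python
def critique(plan: list[str]) -> list[str]:
    """Stub critic: flag required steps that are missing from the plan."""
    required = ["check availability", "book room", "send invites"]
    return [step for step in required if step not in plan]


def revise(plan: list[str], issues: list[str]) -> list[str]:
    """Stub reviser: append whatever the critic flagged."""
    return plan + issues


def reflect(plan: list[str], max_rounds: int = 3) -> list[str]:
    """Alternate critique and revision until no issues remain or rounds run out."""
    for _ in range(max_rounds):
        issues = critique(plan)
        if not issues:
            break
        plan = revise(plan, issues)
    return plan


draft = ["check availability", "book room"]
print(reflect(draft))
```

The `max_rounds` cap matters in practice: without it, a critic that always finds something to object to would keep the agent revising forever.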

Multi-Agent Reasoning

Some systems employ multiple agents that reason collaboratively. One agent might generate proposals while another critiques them. Or specialized agents handle different aspects of a complex task.

Multi-agent architectures can improve robustness by introducing diverse perspectives into the reasoning process, similar to how human teams benefit from varied expertise.

Real-World Agent Architectures and Design Patterns

Practical AI agents implement specific architectural patterns that have proven effective across applications.

ReAct: Reasoning and Acting in Interleaved Steps

The ReAct pattern alternates between reasoning and action. The agent reasons about what to do next, takes an action, observes the result, reasons about that result, and continues.

This tight coupling between thought and action allows agents to adapt dynamically based on real feedback rather than executing entire plans before observing outcomes.
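
The loop itself is short. The sketch below replaces the language model with a scripted `fake_model` so that it runs offline; the order-lookup tool, the order ID, and the step format (`thought`, `action`, `input`, `final`) are all assumptions made for the example rather than part of any published ReAct implementation.

```python
def fake_model(history: list[str]) -> dict:
    """Stand-in for an LLM call: picks the next step from what has been observed."""
    observations = [h for h in history if h.startswith("Observation:")]
    if not observations:
        return {
            "thought": "I need the order status first.",
            "action": "lookup_order",
            "input": "A123",
        }
    return {
        "thought": "The order is delayed, so I can answer.",
        "final": "Order A123 is delayed; a new delivery date will follow.",
    }


def lookup_order(order_id: str) -> str:
    return f"{order_id}: delayed"  # stub tool


TOOLS = {"lookup_order": lookup_order}


def react(question: str, max_steps: int = 5) -> str:
    history = [f"Question: {question}"]
    for _ in range(max_steps):
        step = fake_model(history)                     # reason
        history.append(f"Thought: {step['thought']}")
        if "final" in step:
            return step["final"]
        result = TOOLS[step["action"]](step["input"])  # act
        history.append(f"Observation: {result}")       # observe
    return "Stopped: step limit reached."


print(react("Where is order A123?"))
```

Each pass through the loop feeds the latest observation back into the next reasoning step, which is the interleaving the pattern is named for.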

Plan-and-Execute Architecture

Plan-and-execute agents separate planning from execution. They first generate a complete plan, then execute it step by step.

This architecture works well when planning benefits from considering the entire task upfront, though it's less adaptive than ReAct when unexpected situations arise during execution.

Agentic RAG (Retrieval-Augmented Generation)

Agentic RAG extends traditional retrieval-augmented generation by giving agents control over when and what to retrieve. Instead of retrieving once at query time, agents can iteratively search for information as needs emerge during task execution.

This proves particularly valuable for research-intensive tasks where information needs become clear only through exploration.
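
The difference from single-shot retrieval can be shown with a toy knowledge base in which one document points to another. This is a sketch under stated assumptions: `DOCS`, its contents, and the `next_topic` heuristic are invented, and `next_topic` stands in for the model's decision about what to look up next.

```python
from typing import Optional

# Hypothetical two-document knowledge base.
DOCS = {
    "pricing": "The Pro plan costs $40 per seat. Discounts are described in the discounts policy.",
    "discounts": "Teams with more than 10 seats get 15% off.",
}


def retrieve(topic: str) -> str:
    return DOCS.get(topic, "")


def next_topic(notes: dict[str, str]) -> Optional[str]:
    """Stub decision: follow any known topic that is mentioned but not yet read."""
    for text in notes.values():
        for topic in DOCS:
            if topic in text.lower() and topic not in notes:
                return topic
    return None


def agentic_rag(first_topic: str, max_hops: int = 3) -> dict[str, str]:
    """Retrieve, inspect what came back, and retrieve again if a gap appears."""
    notes: dict[str, str] = {}
    topic: Optional[str] = first_topic
    for _ in range(max_hops):
        if topic is None:
            break
        notes[topic] = retrieve(topic)
        topic = next_topic(notes)
    return notes


print(list(agentic_rag("pricing")))
```

A single retrieval for "pricing" would miss the discount rule; the second hop happens only because the first result revealed that it was needed.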

Control Plane as a Tool

According to arXiv research on 'Control Plane as a Tool: A Scalable Design Pattern for Agentic AI Systems', the control plane pattern treats orchestration infrastructure itself as a tool available to agents. Agents can invoke deployment systems, monitoring platforms, or configuration management as part of their workflow.

This pattern enables agents to operate at infrastructure scale, managing complex distributed systems autonomously.

| Design Pattern | Best For | Key Advantage | Limitation |
|---|---|---|---|
| ReAct | Dynamic environments | Highly adaptive | Can be inefficient |
| Plan-and-Execute | Predictable workflows | Efficient execution | Less flexible |
| Agentic RAG | Research-heavy tasks | Comprehensive information | Slower response |
| Multi-agent | Complex domains | Specialized expertise | Coordination overhead |
| Control Plane | Infrastructure management | System-level automation | Requires robust tools |

Challenges and Limitations of Current AI Agents

Despite impressive capabilities, AI agents face significant challenges that constrain their reliability and applicability.

Reliability and Error Propagation

Multi-step agent workflows compound error rates. If each step has a 95% success rate, a ten-step workflow drops to roughly 60% reliability.
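
The arithmetic behind that figure is a product of per-step probabilities. The short check below assumes steps succeed or fail independently, which is a simplification; the function name is illustrative.

```python
def workflow_success(step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return step_success ** steps


for n in (1, 5, 10, 20):
    print(n, round(workflow_success(0.95, n), 3))
# 1 0.95
# 5 0.774
# 10 0.599
# 20 0.358
```

Doubling the workflow from ten to twenty steps takes the success rate from about 60% to about 36%, which is why long agent workflows usually need checkpoints or retries.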

Errors in early steps propagate through subsequent actions, potentially causing cascading failures. An agent that misreads data in step one might base all subsequent reasoning on faulty information.

Context Window Limitations

Foundation models have finite context windows. While these have grown substantially—some models now handle 100,000+ tokens—complex agent workflows can still exceed available context.

When agents exhaust context windows, they lose information about earlier steps, potentially forgetting critical details needed for later decisions.
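
One common mitigation is to trim history to a token budget while pinning the original goal. The sketch below is a simplified illustration: `count_tokens` counts whitespace-separated words instead of using a real tokenizer, the message contents are invented, and the list of messages is assumed to be non-empty with the goal first.

```python
def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def trim_history(messages: list[str], budget: int) -> list[str]:
    """Keep the first message (the goal) plus as many recent messages as fit."""
    goal, rest = messages[0], messages[1:]
    remaining = budget - count_tokens(goal)
    kept: list[str] = []
    for message in reversed(rest):  # walk from newest to oldest
        cost = count_tokens(message)
        if cost > remaining:
            break
        kept.append(message)
        remaining -= cost
    return [goal] + kept[::-1]      # restore chronological order


history = [
    "goal: book a meeting room",
    "step 1 read calendars done",
    "step 2 found slot tuesday 3pm",
    "step 3 room b is free",
]
print(trim_history(history, budget=18))
```

With a budget of 18 tokens the oldest step is dropped, which is the forgetting described above. Summarizing dropped steps instead of discarding them is a frequent refinement.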

Cost and Latency

Agents make numerous foundation model calls during complex workflows. Each reasoning step, tool selection decision, and output evaluation requires model inference.

This creates both cost concerns (API calls add up quickly) and latency issues (multi-step workflows take time). Real-time applications struggle with these constraints.
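
A back-of-the-envelope estimate makes the scaling visible. Every number below is a placeholder rather than a real price or measured latency, and the estimate assumes model calls run one after another.

```python
def estimate_run(
    calls: int,
    tokens_per_call: int,
    price_per_1k_tokens: float,
    seconds_per_call: float,
) -> tuple[float, float]:
    """Return (cost, latency in seconds) for one workflow run."""
    cost = calls * tokens_per_call / 1000 * price_per_1k_tokens
    latency = calls * seconds_per_call  # assumes sequential calls
    return cost, latency


# Placeholder inputs: 25 model calls, 4,000 tokens each,
# a made-up price of 0.01 per 1,000 tokens, 2 seconds per call.
cost, latency = estimate_run(
    calls=25, tokens_per_call=4000, price_per_1k_tokens=0.01, seconds_per_call=2.0
)
print(f"{cost:.2f} per run, about {latency:.0f}s end to end")
```

Both figures grow linearly with the number of calls, so trimming unnecessary reasoning steps reduces cost and latency together.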

Security and Safety

Autonomous agents with tool access create security surfaces. An agent that can send emails or modify databases could cause significant damage if compromised or misaligned.
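
A common first line of defence is a gate between the agent's decision and the tool's execution. The sketch below shows one possible policy, with hypothetical tool names: read-only tools run freely, tools with side effects wait for human approval, and anything unlisted is refused.

```python
SAFE_TOOLS = {"search_docs", "read_order"}       # run without review
NEEDS_APPROVAL = {"send_email", "issue_refund"}  # require a human yes


def authorize(tool: str, approved_by_human: bool = False) -> str:
    """Decide whether a requested tool call may run."""
    if tool in SAFE_TOOLS:
        return "allow"
    if tool in NEEDS_APPROVAL:
        return "allow" if approved_by_human else "hold_for_approval"
    return "deny"  # anything not listed is refused by default


for request in ("read_order", "issue_refund", "drop_database"):
    print(request, authorize(request))
```

Denying by default means a compromised or confused agent cannot reach a tool that nobody explicitly decided to expose.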

Google DeepMind's CodeMender research (October 6, 2025) addresses security specifically, developing agents that automatically fix software vulnerabilities. In the six months leading up to that announcement, CodeMender upstreamed 72 security fixes to open source projects. The effort also illustrates that security remains a primary concern in agent deployment.

The NIST AI Agent Standards Initiative directly addresses these concerns, working to establish frameworks for secure, trustworthy agentic systems.

Evaluation Difficulty

Measuring agent performance proves challenging. Classification tasks have clear accuracy metrics; agent success, by contrast, often depends on a subjective judgment of whether a goal was achieved in a complex environment.

Did the agent complete the task correctly? Did it use resources efficiently? Would a human have taken the same approach? These questions lack straightforward answers.

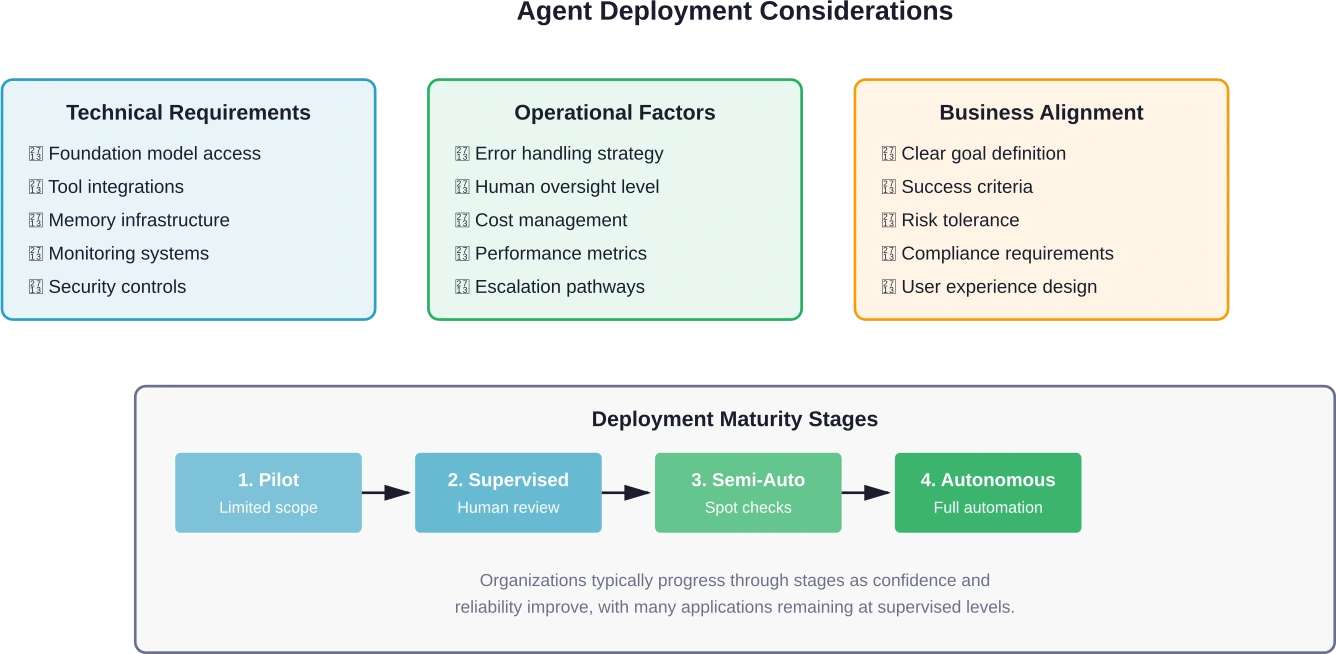

From Theory to Practice: How Businesses Deploy AI Agents

Real-world agent deployments provide insights into how these systems move from concept to production.

Customer Service Agents

Customer service represents one of the most common agent applications. These agents don't just answer questions—they resolve issues by accessing order systems, processing refunds, updating accounts, and escalating to humans when necessary.

They perceive customer problems through natural language, reason about appropriate solutions, plan resolution steps, and act through integration with business systems.

Data Analysis Agents

Data analysis agents autonomously explore datasets, generate insights, and create visualizations. Given a high-level question, they determine relevant data sources, write queries, analyze results, and present findings.

These agents use code generation capabilities extensively, writing SQL queries or Python scripts to manipulate data programmatically.

Software Development Agents

Development agents assist with coding tasks—writing functions, debugging issues, refactoring code, and writing tests. Some handle end-to-end feature implementation from specification to deployment.

They interact with version control systems, CI/CD pipelines, testing frameworks, and documentation as tools to complete development workflows.

Research and Analysis Agents

Research agents gather information across sources, synthesize findings, identify patterns, and generate reports. They're valuable for market research, competitive analysis, and literature reviews.

MIT Sloan research notes these agents excel when evaluating numerous options against multiple criteria—scenarios where human effort would be prohibitively time-consuming.

Infrastructure Management Agents

Infrastructure agents monitor systems, respond to alerts, optimize resource allocation, and implement remediation actions. They pursue goals like "maintain 99.9% uptime" or "optimize cloud costs" autonomously.

These agents demonstrate how agentic AI extends beyond knowledge work into operational domains.

Turn AI Agent Logic Into Practical Implementation

Understanding how AI agents work is one thing. Applying that logic inside existing systems, with real data and integrations, is where most projects slow down.

OSKI Solutions focuses on building and integrating AI solutions as part of custom software development. They work with .NET, Node.js, and Python to connect AI with systems like CRM, ERP, and other business tools through APIs, while also supporting cloud environments on Azure and AWS. Their work often involves extending existing applications and updating legacy systems to support new functionality.

If you’re planning to implement AI based on these concepts, contact OSKI Solutions to review how it can be applied in your setup.

The Future: Where AI Agent Technology Is Heading

Agent capabilities are advancing rapidly. Several trends point toward what's coming next.

Physical World Integration

Agents are moving beyond digital environments. Google DeepMind's Gemini Robotics 1.5, announced in September 2025, brings AI agents into physical reality—enabling robots to perceive, plan, and act in the physical world to solve complex tasks.

This represents a significant leap from software agents to embodied intelligence that can manipulate objects, navigate spaces, and interact with physical environments.

Improved Multi-Agent Collaboration

Future systems will feature better coordination among multiple specialized agents. Instead of monolithic agents trying to handle everything, teams of focused agents will collaborate on complex tasks.

This mirrors human organizational structures—different specialists working together toward shared goals.

Enhanced Reasoning Capabilities

As foundation models improve, agent reasoning will become more sophisticated. Better logical deduction, causal reasoning, and common-sense understanding will enable agents to handle increasingly complex scenarios.

DeepMind research from December 2016 on 'Interaction Networks for Learning about Objects, Relations and Physics' presaged current capabilities; future advances will likely build on similar principles at greater scale.

Standardization and Interoperability

The NIST AI Agent Standards Initiative, announced in February 2026, aims to ensure the next generation of AI functions securely, gains user trust, and interoperates smoothly across the digital ecosystem.

Standardization will enable agents from different vendors to work together, share tools, and coordinate actions—creating an ecosystem rather than isolated implementations.

Better Human-Agent Interfaces

The interaction paradigm between humans and agents continues evolving. Project Mariner explores natural language task assignment where agents handle time-consuming work while humans provide high-level direction.

Future interfaces will likely offer better visibility into agent reasoning, more intuitive control mechanisms, and clearer ways to specify goals and constraints.

Frequently Asked Questions

What's the difference between an AI agent and a chatbot?

Chatbots react to prompts and generate responses. AI agents act autonomously toward goals—planning, using tools, and executing multi-step workflows without constant human input.

Do AI agents actually understand what they're doing?

AI agents do not have true human understanding or consciousness. They operate using statistical models, but they can demonstrate functional understanding by reasoning, adapting, and completing tasks effectively.

Can AI agents learn from their mistakes?

Some agents improve through feedback and reinforcement mechanisms. However, many systems only adapt within a session unless supported by long-term memory or retraining processes.

What prevents AI agents from making dangerous mistakes?

Safety measures include limited tool access, human approval loops, sandboxed environments, monitoring systems, and carefully defined task boundaries.

How much do AI agents cost to run?

Costs depend on complexity and usage. Simple tasks may cost cents, while multi-step workflows involving many model calls can cost dollars per execution.

Can small businesses use AI agents or are they only for enterprises?

AI agents are accessible to businesses of all sizes through cloud platforms and no-code tools. However, successful use still requires clear goals and proper setup.

Will AI agents replace human jobs?

AI agents automate tasks rather than entire roles. They free humans to focus on strategy, creativity, and complex decision-making instead of repetitive work.

Conclusion: Understanding the Mechanics Behind Autonomous AI

AI agents represent a fundamental evolution in artificial intelligence—from systems that respond to prompts to ones that autonomously pursue goals. They work through a sophisticated architecture combining perception, reasoning, planning, memory, and action execution.

Foundation models provide the cognitive engine that powers agent reasoning and decision-making. Different agent types employ distinct operational approaches, from simple reflex behavior to sophisticated learning systems. Design patterns like ReAct, plan-and-execute, and agentic RAG structure how agents approach complex tasks.

Real-world deployments in customer service, data analysis, software development, and infrastructure management demonstrate agents' practical value. But significant challenges remain around reliability, security, cost, and evaluation.

The technology is maturing rapidly. Physical world integration, improved reasoning, better multi-agent collaboration, and emerging standards point toward increasingly capable systems.

Understanding how agents work—their architecture, reasoning processes, and operational patterns—prepares organizations and individuals to effectively deploy and work alongside these systems as they become integral to business operations and daily work.

The agentic AI era isn't coming. It's here. The question now is how effectively we'll harness these autonomous systems to augment human capabilities.