AI Agents Enterprise News 2026: Standards and Deployment

Quick Summary: AI agents are transforming enterprise operations in 2026, with major developments including NVIDIA's open Agent Toolkit, NIST's new security standards initiative, and widespread production deployments across finance, coding, and knowledge work. While 85% of companies experiment with generative AI, only a small fraction run agents in production, and security challenges around agent identity and interoperability remain critical barriers to scaling these autonomous systems.

The enterprise AI landscape shifted dramatically in early 2026. Autonomous agents moved from experimental prototypes to production systems handling real business workflows.

But here's the thing—this transition hasn't been smooth.

While companies rush to deploy AI agents across everything from financial analysis to code generation, critical gaps in security standards, interoperability, and evaluation frameworks are becoming painfully obvious. The gap between proof-of-concept success and production readiness remains substantial.

NVIDIA Launches Open Agent Development Platform

On March 16, 2026, NVIDIA announced the Agent Toolkit, positioning it as the foundation for what they're calling "the next industrial revolution in knowledge work."

The toolkit includes NVIDIA OpenShell, an open source runtime specifically designed for building self-evolving agents with enhanced safety and security features. Built with LangChain, the platform introduces the AI-Q Blueprint—a hybrid architecture that uses frontier models for orchestration while leveraging NVIDIA Nemotron open models for research tasks.

The cost implications are significant. According to NVIDIA's announcement, the AI-Q hybrid architecture can cut query costs by more than 50% while providing world-class accuracy. The system includes built-in evaluation mechanisms that explain how each AI answer is produced, addressing one of the transparency concerns that has plagued earlier agentic implementations.

Real talk: This matters because many enterprise AI projects stall not over accuracy, but over unpredictable costs and a lack of explainability. NVIDIA's approach aims to tackle both problems.
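To see how a hybrid split could plausibly clear the 50% mark, here is a back-of-the-envelope sketch. The unit costs and the routing share are made-up inputs, not NVIDIA figures, and the function names are hypothetical. The only idea taken from the announcement is that part of the work moves from a frontier model to a cheaper open model.

```python
def blended_cost(frontier_cost: float, open_cost: float, open_share: float) -> float:
    """Average cost per query when a share of the work runs on the cheaper model."""
    if not 0.0 <= open_share <= 1.0:
        raise ValueError("open_share must be between 0 and 1")
    return (1.0 - open_share) * frontier_cost + open_share * open_cost


def savings_fraction(frontier_cost: float, open_cost: float, open_share: float) -> float:
    """Fraction saved relative to running all of the work on the frontier model."""
    return 1.0 - blended_cost(frontier_cost, open_cost, open_share) / frontier_cost


# Hypothetical inputs: frontier work costs 1.00 unit, open-model work costs 0.20,
# and 70% of the work is routed to the open model.
print(f"{savings_fraction(1.00, 0.20, 0.70):.0%}")
```

Under these assumed numbers the saving comes out a little above half. The real figure depends entirely on actual pricing and on how much of the workload can be routed away from the frontier model without hurting accuracy.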

NIST Establishes AI Agent Standards Initiative

The federal government entered the AI agent conversation with authority on February 17, 2026, when the National Institute of Standards and Technology (NIST) announced its AI Agent Standards Initiative.

The initiative focuses on three critical pillars: trust, interoperability, and security across what NIST calls the "agentic frontier." According to the official announcement, the goal is ensuring that the next generation of AI can function securely on behalf of users and interoperate smoothly across the digital ecosystem.

This isn't just bureaucratic posturing. Without standardized protocols, enterprise agents built on different frameworks can't communicate effectively. That creates vendor lock-in and limits the autonomous collaboration that makes agentic AI powerful in the first place.

The timing is deliberate. As agents gain access to sensitive systems and make autonomous decisions affecting business operations, regulatory frameworks need to catch up with technical capabilities.

Enterprise Adoption Reality Check

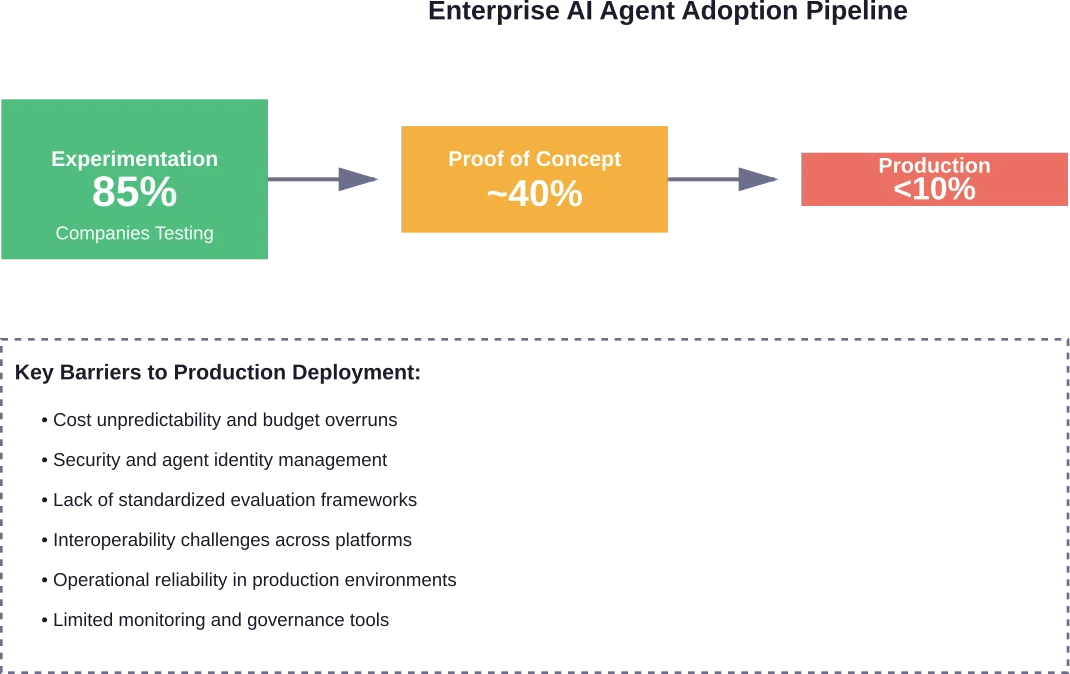

The deployment numbers tell a complex story. Research published on arXiv reports that while 85% of companies experiment with generative AI, only a small fraction successfully deploy agents in production. Most projects are abandoned after the proof-of-concept stage.

Why the disconnect?

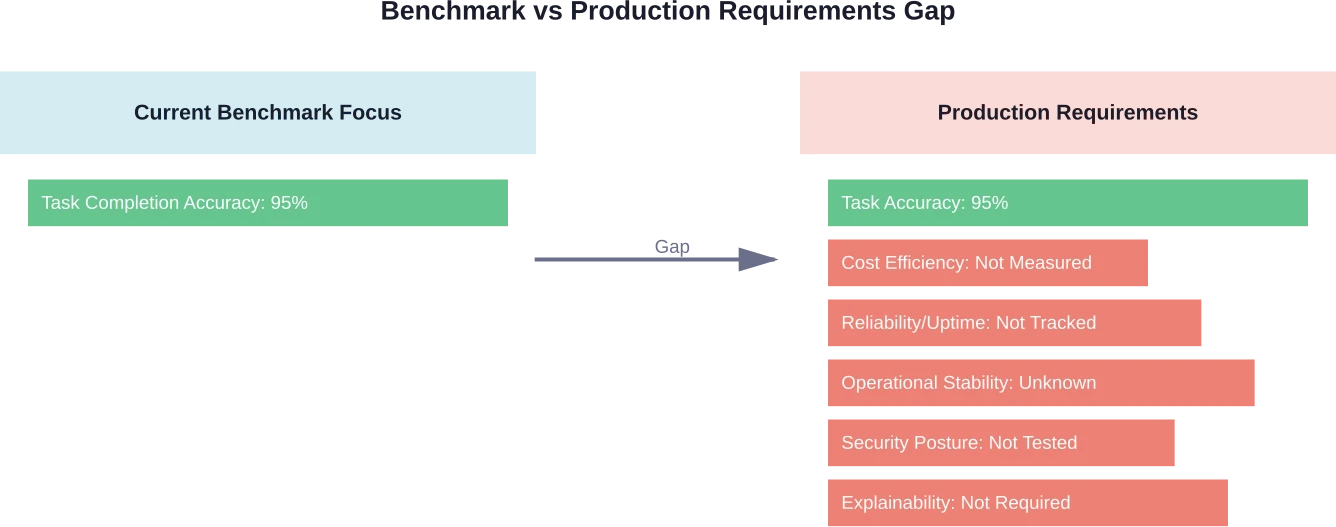

Systematic analysis across enterprise deployments identified three fundamental limitations in current approaches. First, benchmark evaluations focus almost exclusively on task completion accuracy while ignoring critical requirements like cost-efficiency, reliability, and operational stability.

Second, the gap between controlled testing environments and messy production realities proves larger than anticipated. Agents that perform well on standardized benchmarks often struggle with the variability and edge cases inherent in real business workflows.

Third, enterprises lack frameworks for continuous monitoring and evaluation once agents are deployed. Unlike traditional software where behavior is predictable, autonomous agents can evolve in unexpected ways.

Agent Identity Emerges as Critical Security Priority

As autonomous systems proliferate across enterprises, agent identity has become the next major security battleground.

The challenge is straightforward: AI agents need access to sensitive systems and data to perform their functions. But how do you authenticate, authorize, and audit actions taken by non-human actors that can spawn sub-agents, delegate tasks, and operate across multiple systems simultaneously?

Traditional identity and access management (IAM) frameworks weren't designed for this scenario. They assume relatively static permissions for human users who log in, perform tasks, and log out. Agents operate continuously, adapt their behavior, and interact with other agents in ways that create complex chains of authorization.

The security implications are substantial. An agent with excessive permissions could become a vector for data exfiltration or unauthorized system modifications. An agent without sufficient permissions can't do its job. And tracking which agent did what becomes exponentially more complex as agent ecosystems grow.
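One way to picture the problem is a delegation model in which a sub-agent can only receive a subset of its parent's permissions, and every authorization check records the full chain of agents behind it. The sketch below is illustrative only: `AgentIdentity`, `AuditLog`, and the scope names are hypothetical, and it does not represent any particular IAM product or standard.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List, Optional, Set


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    scopes: FrozenSet[str]
    parent: Optional["AgentIdentity"] = None

    def delegate(self, child_id: str, requested: Set[str]) -> "AgentIdentity":
        """Spawn a sub-agent whose scopes must be a subset of this agent's scopes."""
        extra = set(requested) - self.scopes
        if extra:
            raise PermissionError(f"{self.agent_id} cannot grant {sorted(extra)}")
        return AgentIdentity(child_id, frozenset(requested), parent=self)

    def chain(self) -> List[str]:
        """Return the delegation chain from the root agent down to this one."""
        node, ids = self, []
        while node is not None:
            ids.append(node.agent_id)
            node = node.parent
        return list(reversed(ids))


@dataclass
class AuditLog:
    entries: List[dict] = field(default_factory=list)

    def authorize(self, agent: AgentIdentity, scope: str) -> bool:
        """Check a scope and record who asked, through which chain, and the outcome."""
        allowed = scope in agent.scopes
        self.entries.append({"chain": agent.chain(), "scope": scope, "allowed": allowed})
        return allowed


# Hypothetical agents and scopes, for illustration only.
root = AgentIdentity("finance-orchestrator", frozenset({"ledger:read", "report:write"}))
worker = root.delegate("report-subagent", {"ledger:read"})
log = AuditLog()
log.authorize(worker, "ledger:read")   # allowed: the scope was delegated
log.authorize(worker, "report:write")  # denied: the scope was never delegated
```

The design choice worth noting is that permissions can only narrow as they pass down the chain, so a sub-agent never ends up with more access than the agent that spawned it, and the log answers "which agent did what, on whose behalf" without extra bookkeeping.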

Production Deployments Span Diverse Domains

Despite challenges, agents are already operating in production across multiple industries. Research tracking real-world implementations confirms deployments extending far beyond the initial focus on coding assistance.

Financial services have been particularly aggressive adopters. Agents handle credit risk assessment, fraud detection, and financial crime monitoring. The appeal is obvious—finance involves processing enormous volumes of structured and unstructured data against complex rule sets, exactly where autonomous agents excel.

Stanford researchers developed an AI analyst that made 30 years of stock picks using only public information. The results were stunning. Between 1990 and 2020, human fund managers had generated $2.8 million of alpha, or benchmark-adjusted returns, every quarter. When the researchers' AI analyst readjusted those portfolios, it generated $17.1 million per quarter on top of the actual returns.

But financial applications also highlight the stakes. Autonomous agents making investment decisions or approving loans need robust explainability and audit trails. A "black box" agent that can't justify its reasoning won't pass regulatory scrutiny, regardless of performance.

Workforce Impact Analysis Shows Widespread Exposure

Research from Stanford examined how AI agents might reshape the U.S. workforce, and related estimates suggest massive potential disruption. One widely cited estimate, from researchers at OpenAI and the University of Pennsylvania, is that around 80% of U.S. workers may see LLMs affect at least 10% of their tasks, with 19% potentially seeing over half impacted.

That said, "impact" doesn't automatically mean "replacement." The research distinguishes between automation (agents replacing human tasks entirely) and augmentation (agents enhancing human productivity). Many applications fall into the augmentation category, where agents handle routine subtasks while humans focus on higher-level decision-making.

The distinction matters for workforce planning. Organizations need strategies not just for which tasks agents can automate, but for how to restructure roles when agents handle portions of existing jobs.

Software Architecture Faces Fundamental Rethinking

The rise of agentic AI is forcing enterprise software architecture into uncharted territory. CIOs face huge challenges managing AI as it seeps deeper into the systems that fuel business operations.

Some industry observers have suggested that traditional SaaS might be "dying" as agents replace point solutions. The reality is more nuanced. SaaS isn't dying any more than on-premises software did, but software is definitely in for a user experience overhaul.

Instead of humans navigating software interfaces to complete tasks, agents increasingly handle those interactions programmatically. This shifts the value proposition from "intuitive UI" to "robust API with clear documentation." Software vendors that built their moats around user experience suddenly need to compete on programmatic accessibility.

One enterprise found this shift transformative. Rather than optimize an existing forecasting solution that relied heavily on spreadsheets and enterprise systems, they rebuilt it using AI agents. The resulting solution cut forecasting errors by 50%.

Look, that's the kind of performance improvement that justifies architectural disruption.

Evaluation Frameworks Still Playing Catch-Up

Current agentic AI benchmarks predominantly evaluate task completion accuracy while overlooking critical enterprise requirements. This creates a dangerous disconnect between impressive demo performance and production viability.

A comprehensive framework analysis (the CLEAR framework) identified what's missing: cost-consciousness, reliability metrics, and operational stability measures. The research found that agents can show 50x cost variations at similar precision levels. An agent that completes tasks with 95% accuracy but costs significantly more than budgeted isn't production-ready.

IBM's work deploying their Generalist Agent (CUGA) in enterprise production illustrates the challenge. In preliminary tests within simulated enterprise workflows, the agent approached the accuracy of hand-crafted agents while pointing to potentially substantial reductions in development time (up to 90%) and cost (up to 50%). But moving from simulation to production required extensive additional work on monitoring, error handling, and integration with existing systems.
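The point about accuracy alone being misleading can be made concrete with a small gate that checks cost alongside accuracy. This is a minimal sketch, not the CLEAR framework itself: the `EvalResult` fields, the thresholds, and the two sample agents are all hypothetical, with the numbers chosen only to mirror a 50x cost spread at equal accuracy.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    name: str
    tasks: int
    successes: int
    total_cost: float  # in whatever currency unit the budget uses

    @property
    def accuracy(self) -> float:
        return self.successes / self.tasks

    @property
    def cost_per_success(self) -> float:
        # An agent that never succeeds has unbounded cost per useful result.
        return self.total_cost / self.successes if self.successes else float("inf")


def production_ready(r: EvalResult, min_accuracy: float, max_cost_per_success: float) -> bool:
    """Pass only if the agent is both accurate enough and within budget."""
    return r.accuracy >= min_accuracy and r.cost_per_success <= max_cost_per_success


# Hypothetical results: identical accuracy, very different spend.
lean = EvalResult("lean-agent", tasks=100, successes=95, total_cost=19.0)
heavy = EvalResult("heavy-agent", tasks=100, successes=95, total_cost=950.0)

for result in (lean, heavy):
    verdict = production_ready(result, min_accuracy=0.90, max_cost_per_success=1.0)
    print(result.name, f"{result.accuracy:.0%}", result.cost_per_success, verdict)
```

Both agents would look identical on an accuracy-only leaderboard. Only the cost check separates them, which is the gap the evaluation research is pointing at.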

Open Source vs Proprietary Agent Frameworks

The agent development landscape is splitting between open source and proprietary approaches, each with distinct advantages.

NVIDIA's decision to release OpenShell as open source signals a bet that standardization and community development will accelerate adoption faster than closed ecosystems. LangChain's involvement brings established open source credibility and an existing developer community.

Proprietary frameworks from major cloud providers offer tighter integration with their ecosystems and, theoretically, better support. But they also create vendor lock-in risks that enterprises are increasingly wary of.

The sweet spot for many organizations appears to be hybrid approaches—open source runtimes and frameworks for portability, integrated with proprietary services for specific capabilities like advanced language models or specialized tooling.

What Enterprise Leaders Should Focus On Now

So what's the practical takeaway for organizations navigating this rapidly evolving landscape?

First, start with clear use case identification. Don't deploy agents because it's trendy. Identify specific workflows where autonomous operation provides measurable value—high-volume repetitive tasks, complex data analysis requiring synthesis across multiple sources, or scenarios requiring 24/7 availability.

Second, invest in agent identity and access management infrastructure now. The security challenges won't get easier as agent deployments scale. Establish governance frameworks, audit capabilities, and permission models designed for non-human actors before you have dozens of agents operating across your systems.

Third, demand comprehensive evaluation frameworks from vendors and open source projects. Task completion accuracy isn't enough. Require metrics on cost per operation, failure rates, recovery behavior, and explainability of agent decisions.

Fourth, plan for workforce transition alongside technical deployment. Agent augmentation works best when humans understand what agents can and can't do reliably. That requires training, role redefinition, and often cultural shifts.
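For the third point, the metrics worth asking vendors for can be computed from plain run logs. The sketch below assumes a hypothetical `RunRecord` shape with one entry per agent run; the field names are illustrative rather than any vendor's schema.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class RunRecord:
    cost: float
    failed: bool         # the run hit an error at some point
    recovered: bool      # the run still finished correctly after the error
    has_rationale: bool  # the agent attached an explanation of its decision


def summarize(runs: Iterable[RunRecord]) -> Dict[str, float]:
    """Aggregate cost, failure, recovery, and explainability metrics over runs."""
    runs = list(runs)
    if not runs:
        raise ValueError("no runs to summarize")
    failures = [r for r in runs if r.failed]
    return {
        "cost_per_operation": sum(r.cost for r in runs) / len(runs),
        "failure_rate": len(failures) / len(runs),
        # With no failures there is nothing to recover from, so report 1.0.
        "recovery_rate": sum(r.recovered for r in failures) / len(failures) if failures else 1.0,
        "explained_share": sum(r.has_rationale for r in runs) / len(runs),
    }


# Hypothetical log of four runs.
sample = [
    RunRecord(cost=0.10, failed=False, recovered=False, has_rationale=True),
    RunRecord(cost=0.30, failed=True, recovered=True, has_rationale=True),
    RunRecord(cost=0.20, failed=True, recovered=False, has_rationale=False),
    RunRecord(cost=0.20, failed=False, recovered=False, has_rationale=True),
]
print(summarize(sample))
```

Tracking recovery separately from failure matters because an agent that fails often but recovers cleanly is a different operational risk from one that fails rarely but never recovers.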

| Implementation Phase | Primary Focus | Key Success Metrics |
|---|---|---|
| Pilot (0-3 months) | Use case validation and cost modeling | Task completion rate, cost per operation, user feedback |
| Limited Production (3-6 months) | Security framework and monitoring | Agent identity compliance, incident response time, audit coverage |
| Scale (6-12 months) | Interoperability and workforce integration | Cross-platform functionality, employee productivity gains, error rates |
| Optimization (12+ months) | Cost efficiency and continuous improvement | ROI, operational stability, agent evolution tracking |

Looking Forward: The Agentic Enterprise

The trajectory is clear even if the timeline remains uncertain. Enterprises are moving toward architectures where AI agents handle increasing portions of operational workflows.

The winners won't be organizations that deploy the most agents fastest. They'll be the ones that thoughtfully integrate autonomous systems with appropriate governance, security, and evaluation frameworks.

Standards initiatives like NIST's effort will shape interoperability and accelerate adoption by reducing integration complexity. Open platforms like NVIDIA's toolkit will democratize access to sophisticated agent capabilities.

But the hard work—defining what agents should do, how to measure their performance, and how to manage the security and ethical implications—that stays with individual organizations.

The agentic enterprise isn't a distant future concept anymore. It's happening now, messily and unevenly, with successes and failures that are teaching the industry what actually works beyond the hype.

Align AI Integrations With Your Existing Systems

Enterprise AI discussions often focus on standards and trends, but the real work starts when those solutions need to connect with existing systems and data.

OSKI Solutions focuses on AI integrations as part of custom software development. They work with .NET, Node.js, and Python to integrate AI into CRM, ERP, and other business systems through APIs, while also supporting cloud environments on Azure and AWS. Their projects often involve modernizing legacy systems and extending existing applications with new functionality.

If you’re planning to implement AI in an enterprise setup, it’s worth reviewing with OSKI Solutions how it will integrate with your current systems before moving forward.

Frequently Asked Questions

What are AI agents in enterprise context?

AI agents are autonomous systems that perceive data, make decisions, and take actions to achieve goals. In enterprises, they automate workflows, analyze data, support customers, and monitor systems while adapting to changing conditions.

How much do enterprise AI agent platforms cost?

Costs vary depending on scale, provider, and usage. Most platforms use consumption-based pricing tied to API calls, compute usage, or completed tasks. Pricing models evolve frequently, so checking vendor documentation is essential.

What's the difference between agentic AI and traditional AI automation?

Traditional automation follows fixed rules, while agentic AI systems can plan, reason, and adapt their approach dynamically based on outcomes and changing conditions.

Are AI agents secure enough for enterprise deployment?

Security is still evolving. Enterprises must implement strong identity management, access control, auditing, and monitoring. Many organizations start with low-risk use cases before scaling.

Which industries are adopting AI agents fastest?

Finance, software development, and knowledge-based industries lead adoption. Use cases include fraud detection, coding automation, and advanced data analysis.

What skills do teams need to build and manage AI agents?

Teams need skills in software engineering, AI/ML concepts, prompt design, API integration, orchestration, monitoring, and governance of autonomous systems.

Can AI agents from different vendors work together?

Interoperability is still limited. While some platforms support integration through APIs, cross-vendor compatibility remains a challenge. Emerging standards aim to improve this over time.

Conclusion

The enterprise AI agent landscape in 2026 reflects both remarkable progress and substantial growing pains. Production deployments are happening across industries, major technology providers are releasing sophisticated development platforms, and government agencies are establishing necessary standards.

Yet the gap between experimentation and successful scaling remains wide. Security concerns around agent identity, lack of comprehensive evaluation frameworks, and interoperability challenges continue limiting broader adoption.

Organizations that approach AI agents with clear use cases, robust governance structures, and realistic expectations about current capabilities will be best positioned to capture value from this technology. Those chasing hype without addressing fundamental security, cost, and reliability requirements will likely join the majority of abandoned proof-of-concept projects.

The agentic enterprise is emerging—just more gradually and carefully than the most optimistic predictions suggested. And given the stakes, that measured pace might be exactly what's needed.