AI Agent Orchestration: The 2026 Guide to Multi-Agent Systems

Quick Summary: AI agent orchestration is the process of coordinating multiple specialized AI agents within a unified system to achieve complex goals that single agents cannot handle alone. According to research from arXiv, orchestrated multi-agent systems represent the next stage in AI evolution, using hierarchical frameworks where a central planner coordinates specialized sub-agents. Orchestration patterns include sequential, concurrent, group chat, and handoff approaches, each suited to different task requirements.

The promise of AI agents solving real business problems has finally arrived. But here's the thing—single agents hit their limits fast.

A customer service agent can handle inquiries. A data analysis agent can crunch numbers. But what happens when you need both working together, passing context back and forth, while a third agent manages the workflow? That's where orchestration comes in.

AI agent orchestration coordinates multiple specialized agents within a unified system to efficiently achieve shared objectives. Rather than building one massive agent that tries to do everything, orchestration lets specialized agents work together—each handling what it does best.

According to research published on arXiv, including 'The Orchestration of Multi-Agent Systems' (arXiv:2601.13671), orchestrated multi-agent systems represent the next stage in AI evolution. The AgentOrchestra framework demonstrates how a central planner can orchestrate specialized sub-agents for web navigation, data analysis, and file operations. It also supports continual adaptation by dynamically instantiating, retrieving, and refining tools online during execution.

Why Agent Orchestration Matters Now

Single-agent systems work fine for straightforward tasks. Classification? Summarization? A direct model call with a well-crafted prompt handles it.

Complex workflows break that model completely.

Real-world business processes involve multiple steps, different data sources, and decision points that change based on context. According to MIT Sloan Management Review research from November 2025, digital agents are rapidly becoming crucial workforce components. The research notes that AI agents currently approve low-risk invoices and facilitate overnight customer onboarding.

According to the MIT Sloan survey, traditional AI adoption has climbed to 72% over the past eight years, while generative AI achieved 70% adoption in just three years. Agentic AI adoption is growing rapidly even though most organizations lack a comprehensive strategy for it.

Here's the key insight: orchestration isn't about making agents smarter. It's about making them work together better.

How Multi-Agent Orchestration Actually Works

At its core, orchestration manages three critical elements: task decomposition, agent coordination, and state management.

Task decomposition breaks complex goals into smaller, manageable pieces that individual agents can handle. A manager agent analyzes the overall objective and creates a task graph—essentially a roadmap of what needs to happen and in what order.

Agent coordination determines how agents communicate and pass information. Does Agent A need to finish before Agent B starts? Can they work simultaneously? What happens if Agent C encounters an error?

State management tracks where everything stands at any moment. Which tasks are complete? What data has been generated? Where are the bottlenecks?
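As a rough illustration of how these three elements fit together, the sketch below models a task graph with Python's standard `graphlib`, runs each task in dependency order, and records per-task state along the way. The task names and the `run_task` stub are hypothetical placeholders, not part of any particular framework.

```python
from graphlib import TopologicalSorter

# Hypothetical task graph: each task maps to the set of tasks it depends on.
TASK_GRAPH = {
    "extract": set(),
    "classify": {"extract"},
    "summarize": {"extract"},
    "report": {"classify", "summarize"},
}


def run_task(name: str, inputs: dict) -> str:
    """Stand-in for an agent call; a real system would invoke a model here."""
    return f"{name}({', '.join(sorted(inputs))})"


def orchestrate(graph: dict) -> dict:
    """Run tasks in dependency order and track per-task state."""
    state = {name: {"status": "pending", "output": None} for name in graph}
    for name in TopologicalSorter(graph).static_order():
        # Coordination: a task only sees the outputs of its declared dependencies.
        inputs = {dep: state[dep]["output"] for dep in graph[name]}
        state[name]["output"] = run_task(name, inputs)
        state[name]["status"] = "done"
    return state
```

Calling `orchestrate(TASK_GRAPH)` returns a dictionary in which every task is marked `done` and `report` has received the outputs of both `classify` and `summarize`. `TopologicalSorter` raises `CycleError` if the graph contains a circular dependency, which is a convenient early check on the decomposition itself.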

Research on the Autonomous Manager Agent describes workflow management as a Partially Observable Stochastic Game and identifies four foundational challenges: compositional reasoning for hierarchical decomposition, multi-objective optimization under shifting preferences, coordination and planning in ad hoc teams, and governance and compliance by design.

Core Orchestration Patterns That Actually Get Used

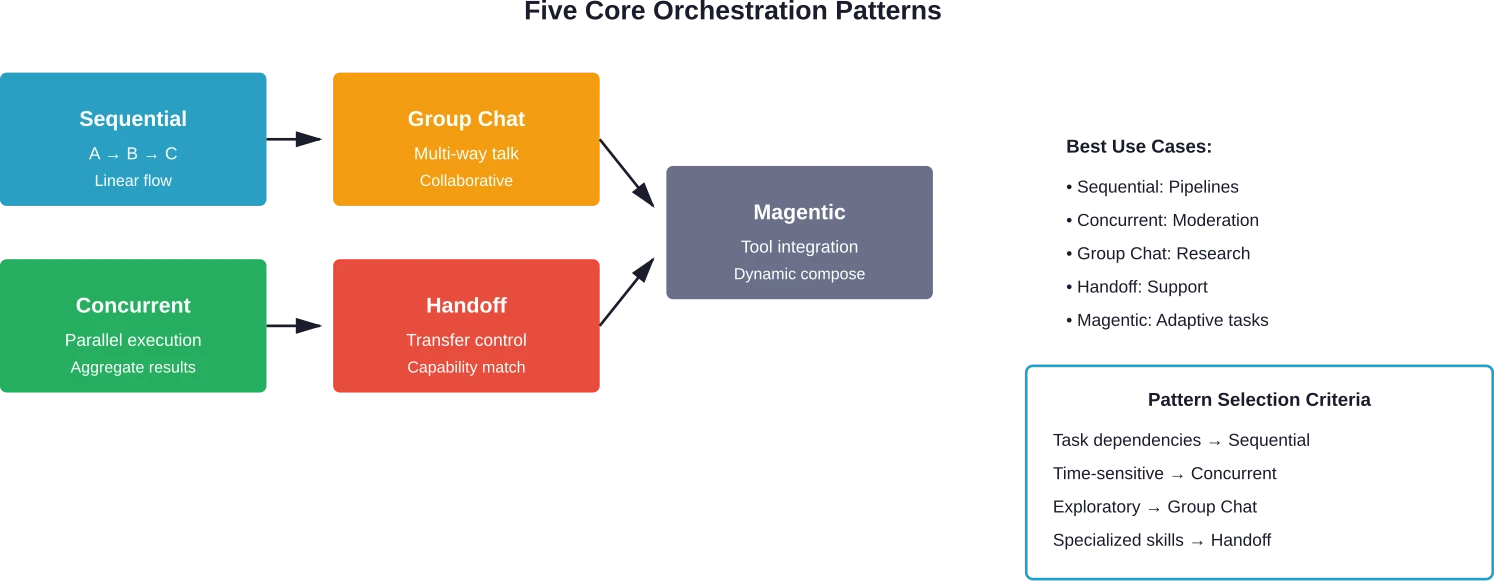

The architecture patterns for agent orchestration fall into five main categories. Each solves different problems and fits different use cases.

Sequential Orchestration

Sequential orchestration runs agents one after another in a defined order. Agent A completes its task, passes the output to Agent B, which processes it and sends results to Agent C.

This pattern works when tasks have clear dependencies. Document processing pipelines use it constantly—one agent extracts text, another classifies content, a third summarizes key points.

The downside? Sequential orchestration takes time. If Agent B waits for Agent A, and Agent C waits for Agent B, latency stacks up fast.
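A minimal sketch of the document pipeline described above, assuming each agent can be treated as a function from text to text. The three agent functions are hypothetical stand-ins for model calls.

```python
from typing import Callable

Agent = Callable[[str], str]


def extract_text(doc: str) -> str:
    """Hypothetical extraction agent: normalizes whitespace."""
    return " ".join(doc.split())


def classify(text: str) -> str:
    """Hypothetical classifier: tags the text with a crude label."""
    label = "invoice" if "invoice" in text.lower() else "other"
    return f"[{label}] {text}"


def summarize(text: str) -> str:
    """Hypothetical summarizer: keeps the first 40 characters."""
    return text[:40]


def run_sequential(agents: list[Agent], payload: str) -> str:
    """Pass the payload through each agent in order."""
    for agent in agents:
        payload = agent(payload)
    return payload
```

For example, `run_sequential([extract_text, classify, summarize], "Invoice   #123\n due  Friday")` returns `"[invoice] Invoice #123 due Friday"`. Because each call blocks on the previous one, total latency is the sum of the individual agent latencies.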

Concurrent Orchestration

Concurrent orchestration runs multiple agents simultaneously. All agents receive the same input and process it in parallel, then a coordinator agent aggregates the results.

This pattern shines for tasks where multiple perspectives add value. Content moderation systems often use concurrent orchestration—several agents analyze the same content for different policy violations, and a coordinator decides the final action based on all their assessments.

The challenge is managing conflicts when agents disagree.
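The content moderation example might look roughly like this with `asyncio`. The three agents and the "strictest verdict wins" conflict policy are assumptions chosen for illustration; a real coordinator could weigh confidence scores or escalate to a human instead.

```python
import asyncio

# Hypothetical severity ranking used to resolve disagreements.
SEVERITY = {"allow": 0, "flag": 1, "block": 2}


async def spam_agent(content: str) -> str:
    return "flag" if "buy now" in content.lower() else "allow"


async def abuse_agent(content: str) -> str:
    return "block" if "idiot" in content.lower() else "allow"


async def link_agent(content: str) -> str:
    return "flag" if "http://" in content else "allow"


async def moderate(content: str) -> str:
    """Fan the same input out to every agent, then aggregate the verdicts."""
    verdicts = await asyncio.gather(
        spam_agent(content), abuse_agent(content), link_agent(content)
    )
    # Conflict policy (an assumption): the strictest verdict wins.
    return max(verdicts, key=SEVERITY.__getitem__)
```

With real model calls, `asyncio.gather` lets the three requests overlap, so latency is closer to that of the slowest agent than to the sum of all three.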

Group Chat Orchestration

Group chat orchestration lets agents communicate directly with each other in a shared context. Instead of a rigid coordinator, agents participate in a conversation, proposing ideas, asking questions, and building on each other's contributions.

This pattern handles exploratory tasks well. When the solution path isn't clear upfront, group chat lets agents discover it collaboratively. Brainstorming agents, research assistants, and creative writing systems benefit from this flexibility.

The risk is conversation sprawl—without guardrails, agents can lose focus or get stuck in unproductive loops.
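One way to picture the guardrails is a round-robin loop over a shared transcript with a hard turn cap and an early exit when no agent has anything to add. The two agents below are hypothetical rule-based stubs; in practice each would be a model call that reads the transcript.

```python
from typing import Callable, Optional

# An agent reads the shared transcript and returns a message, or None to pass.
ChatAgent = Callable[[list[str]], Optional[str]]


def proposer(transcript: list[str]) -> Optional[str]:
    """Hypothetical agent: makes one proposal right after the topic is posted."""
    return "proposal: cache results" if len(transcript) == 1 else None


def critic(transcript: list[str]) -> Optional[str]:
    """Hypothetical agent: responds only to a fresh proposal."""
    if transcript[-1].startswith("proposal:"):
        return "critique: define a staleness limit"
    return None


def run_group_chat(agents: list[ChatAgent], topic: str, max_rounds: int = 10) -> list[str]:
    """Round-robin chat over a shared transcript with two guardrails."""
    transcript = [f"topic: {topic}"]
    for _ in range(max_rounds):  # Guardrail 1: hard cap on rounds.
        spoke = False
        for agent in agents:
            message = agent(transcript)
            if message is not None:
                transcript.append(message)
                spoke = True
        if not spoke:  # Guardrail 2: stop once nobody has anything to add.
            break
    return transcript
```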

Handoff Orchestration

Handoff orchestration transfers control from one agent to another based on capability matching. When an agent realizes it's not equipped to handle the next step, it hands it off to a specialist.

Customer service systems use this constantly. A general-purpose agent handles initial triage, but when the conversation requires technical expertise or account access, it hands off to an agent with those capabilities.

The key is detecting handoff triggers accurately. Hand off too early and the first agent underperforms. Hand off too late and customer frustration builds.
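Capability matching can be sketched as a lookup against a registry of specialists. The agent names, capability keywords, and the keyword-overlap trigger below are all illustrative assumptions; production systems more often let the model itself decide when to hand off.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Specialist:
    name: str
    capabilities: frozenset


# Hypothetical agent registry; capability keywords are illustrative.
SPECIALISTS = [
    Specialist("billing_agent", frozenset({"refund", "invoice"})),
    Specialist("tech_agent", frozenset({"error", "crash", "install"})),
]


def route(message: str, default: str = "triage_agent") -> str:
    """Return the agent that should own the next turn."""
    words = set(message.lower().split())
    best, best_hits = default, 0
    for agent in SPECIALISTS:
        hits = len(words & agent.capabilities)
        if hits > best_hits:  # Hand off only when a specialist clearly matches.
            best, best_hits = agent.name, hits
    return best
```

A message with no matching keywords stays with the triage agent, which is the conservative default when the trigger is uncertain.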

Magentic Orchestration

Magentic orchestration (the name comes from Microsoft Research's Magentic-One multi-agent system) focuses on tool integration and dynamic composition. Agents don't just process information. They actively select and chain together tools based on task requirements.

This pattern adapts to changing needs. An agent analyzing sales data might realize it needs web scraping tools, API calls, and database queries—and orchestrate all three without a predetermined workflow.

Implementation complexity is higher, but flexibility makes it worth considering for unpredictable environments.

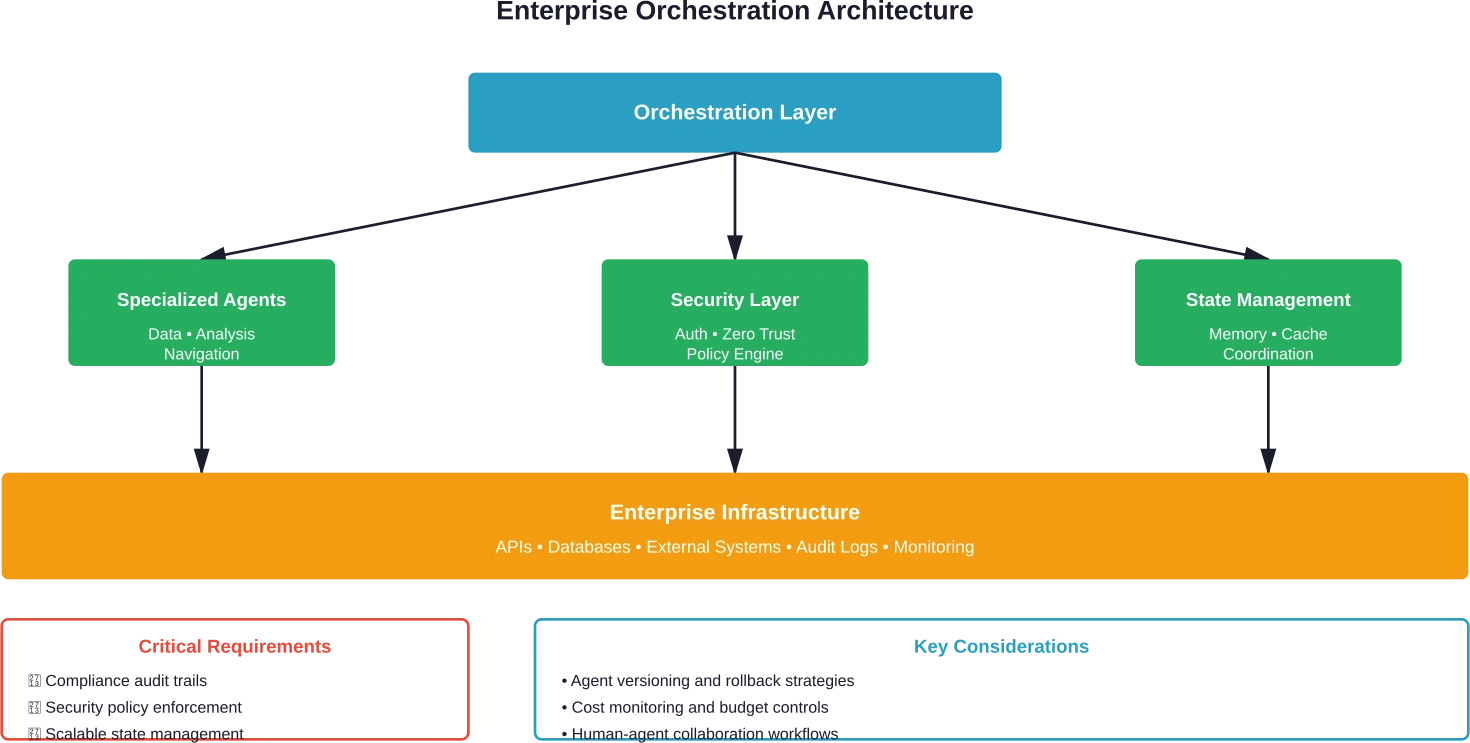

Building Blocks of Production Orchestration Systems

Production orchestration requires more than just connecting agents. Teams need infrastructure that handles state, memory, and coordination at scale.

State management tracks what's happening across the system. When Agent A finishes a task, that state change needs to propagate instantly. Redis provides primitives that serve as building blocks for reliable multi-agent coordination. According to Redis documentation, Redis 8 delivers up to 87% faster command execution, up to 2x throughput improvement, and up to 35% memory savings compared to previous versions.

Memory systems let agents recall past interactions. Without memory, agents treat every task as brand new. With it, they build context over time and improve their coordination.

Tool integration connects agents to external systems. APIs, databases, web scrapers—agents need reliable ways to interact with the world beyond their model.

Error handling determines what happens when things go wrong. Agents fail. Networks time out. APIs return unexpected data. Robust orchestration systems handle failures gracefully without losing critical state.

Major Orchestration Platforms Compared

The orchestration platform landscape evolved rapidly through 2025 and into early 2026. Different platforms make different tradeoffs.

| Platform | Best For | Key Strength | Limitation |
|---|---|---|---|
| LangGraph | Custom workflows | Graph-based flexibility | Steeper learning curve |
| CrewAI | Role-based teams | Intuitive agent roles | Less customization |
| AWS Bedrock | Enterprise deployments | AWS integration | Vendor lock-in |
| OpenAI Agents SDK | Rapid prototyping | Quick setup | Limited patterns |
| AutoGen | Conversational agents | Group chat native | Resource intensive |
| Azure AI Studio | Microsoft ecosystem | Enterprise support | Azure dependency |

LangGraph excels at complex, custom workflows where fine-grained control matters. Developers define agents as graph nodes and orchestration logic as edges, creating sophisticated coordination patterns.

CrewAI simplifies orchestration by modeling agents as crew members with defined roles. A manager agent delegates to specialist agents, mimicking human team structures. This intuitive model speeds development but constrains flexibility.

AWS Bedrock provides enterprise-grade infrastructure with native AWS service integration. Organizations already invested in AWS find the path of least resistance here, though migration becomes harder later.

The OpenAI Agents SDK prioritizes rapid prototyping and developer experience. Teams can spin up orchestrated agents quickly, but hit limitations when requirements grow complex.

Real Implementation Challenges Nobody Talks About

The marketing materials make orchestration look straightforward. The reality is messier.

Context Window Limitations

Agents accumulate context as they work. Conversation history, task results, intermediate data—it all consumes tokens. Long-running workflows hit context limits, forcing difficult decisions about what to keep and what to discard.

Some teams implement summarization agents that periodically compress context. Others use external memory systems. Both approaches add complexity and potential failure points.
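The periodic compression approach could look something like the sketch below. The word-count token estimate and the `summarize` placeholder are assumptions; a real system would use the model's tokenizer and an actual summarization call.

```python
def summarize(messages: list[str]) -> str:
    """Placeholder summarizer; a real system would call a model here."""
    return f"[summary of {len(messages)} earlier messages]"


def compact(history: list[str], max_tokens: int, keep_recent: int = 2) -> list[str]:
    """Collapse older messages into one summary when the budget is exceeded."""
    # Crude token estimate (an assumption): one token per whitespace-separated word.
    used = sum(len(message.split()) for message in history)
    if used <= max_tokens or len(history) <= keep_recent:
        return history
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    # Recent turns stay verbatim; everything before them becomes one summary line.
    return [summarize(older)] + recent
```

The trade-off is visible in the code: whatever detail `summarize` drops is gone for every downstream agent, which is one of the failure points mentioned above.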

Cost Explosion at Scale

Multi-agent systems multiply API costs fast. Each agent call costs money. Coordination overhead adds more calls. Complex workflows can burn through budgets quickly.

Optimization requires careful monitoring and smart caching. When does an agent really need to call the model versus using a cached response? The answer depends on context sensitivity and acceptable staleness.
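Acceptable staleness maps naturally onto a time-to-live. The sketch below caches responses per prompt and only calls the model again once an entry is older than `ttl` seconds. The class name, the `call_model` parameter, and the injectable clock are illustrative choices, not a specific library's API.

```python
import time
from typing import Callable


class TTLCache:
    """Cache agent responses and treat entries older than `ttl` seconds as stale."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl
        self.clock = clock  # Injectable so tests can control time.
        self._store: dict[str, tuple[float, str]] = {}
        self.model_calls = 0

    def get(self, prompt: str, call_model: Callable[[str], str]) -> str:
        now = self.clock()
        hit = self._store.get(prompt)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]  # Fresh enough: skip the paid model call.
        self.model_calls += 1
        answer = call_model(prompt)
        self._store[prompt] = (now, answer)
        return answer
```

Exact-match keys only help when prompts repeat verbatim, so context-sensitive agents may see few hits. Tracking `model_calls` against total requests shows whether the cache is earning its complexity.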

Debugging Multi-Agent Interactions

When a single agent fails, debugging is relatively straightforward. When five agents coordinate and the workflow produces wrong results, finding the root cause becomes detective work.

Observability tools help, but the fundamental challenge remains—agent interactions create emergent behavior that's hard to predict and diagnose.

Ensuring Consistency Across Agents

Different agents might use different models, prompts, or tool versions. Keeping them aligned requires governance that balances consistency with flexibility.

Version control for agents is still evolving. When an agent gets updated, how does that affect downstream agents that depend on its output format?

Orchestration in Enterprise Environments

Enterprise adoption brings additional requirements beyond technical implementation.

According to research from the USC Institute for Creative Technologies Human-inspired Adaptive Teaming Systems (HATS) Lab published in September 2025, the evolution of AI has reached a critical juncture. While raw computational power continues to impress, application to truly complex, multi-faceted problems reveals limitations that orchestration addresses.

Security becomes paramount when agents access sensitive data and systems. Research on zero-trust multi-agent frameworks describes what this requires: end-to-end agent authentication and authorization, dynamic trust assessment and revocation, declarative policy languages that encode security rules, and autoscaling policies that spin up extra authentication nodes under load.

Compliance requirements affect what agents can do and how their decisions get audited. Healthcare applications need HIPAA compliance. Financial services need SOX compliance. Orchestration systems must maintain audit trails that explain agent decisions.

Change management challenges emerge as digital agents become workforce components. Teams must rethink how work gets done and how systems are designed. Who manages agent performance? How do human workers collaborate with agent colleagues? What happens when an agent makes a mistake?

Task-Adaptive Orchestration: The Next Evolution

Research from February 2026 introduces AdaptOrch, a task-adaptive approach to multi-agent orchestration that addresses a critical shift: as large language models from diverse providers converge toward comparable benchmark performance, the traditional paradigm of selecting a single "best" model is becoming less useful.

Instead of fixed orchestration patterns, adaptive systems analyze task characteristics and dynamically select coordination strategies. Simple classification tasks might use direct model calls. Complex reasoning tasks might require hierarchical orchestration with multiple specialized agents.

This adaptive approach reduces unnecessary complexity. Not every task needs multi-agent coordination. Adaptive orchestration applies the right level of coordination for each specific task.
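The selection step can be pictured as a small router. The rule-based version below is a hypothetical stand-in and not AdaptOrch's actual method; the `Task` fields and strategy names are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    subtasks: int            # Number of separable pieces of work.
    has_dependencies: bool   # Whether those pieces must run in order.


def choose_strategy(task: Task) -> str:
    """Pick the lightest coordination strategy that fits the task."""
    if task.subtasks <= 1:
        return "direct_call"   # No orchestration overhead for simple tasks.
    if task.has_dependencies:
        return "sequential"
    return "concurrent"
```

The ordering of the checks encodes the principle from the text: start from the cheapest option and escalate only when the task's structure demands it.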

Measuring Orchestration Success

How do organizations know if their orchestration works? Metrics matter.

Task completion rate measures what percentage of workflows finish successfully. But completion alone doesn't tell the full story.

Time to completion reveals efficiency. Orchestration should speed things up by parallelizing work and coordinating specialists. If orchestrated workflows take longer than single-agent approaches, something's wrong.

Resource utilization tracks costs and infrastructure usage. Efficient orchestration minimizes redundant API calls and maximizes agent reuse.

Error rates and failure modes show reliability. Which coordination patterns fail most often? Where do agents get stuck or produce incorrect results?
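These four metrics can be computed from simple per-run records. The `Run` fields below are assumed for illustration; most tracing tools export something comparable. The function expects at least one run.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Run:
    succeeded: bool
    seconds: float
    model_calls: int
    failed_step: Optional[str] = None


def summarize_runs(runs: list[Run]) -> dict:
    """Aggregate completion, latency, cost proxy, and failure modes."""
    done = [r for r in runs if r.succeeded]
    failures = Counter(r.failed_step for r in runs if not r.succeeded)
    return {
        "completion_rate": len(done) / len(runs),
        # Latency is averaged over successful runs only.
        "mean_seconds": mean(r.seconds for r in done) if done else None,
        # Model calls per run serve as a rough proxy for API cost.
        "calls_per_run": mean(r.model_calls for r in runs),
        "failures_by_step": dict(failures),
    }
```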

According to research using the MA-Gym evaluation framework, GPT-5-based Manager Agents evaluated across 20 workflows struggle to jointly optimize for goal completion, constraint adherence, and workflow runtime—underscoring workflow management as a difficult open problem.

Common Anti-Patterns to Avoid

Experience reveals what doesn't work.

Over-Orchestration

Teams sometimes add orchestration complexity unnecessarily. A simple task handled by one agent gets split across three agents with coordination overhead that adds latency and cost without meaningful benefit.

Start simple. Add orchestration when tasks genuinely require specialization or parallelization.

Unclear Agent Responsibilities

When agent roles overlap or boundaries blur, coordination becomes chaotic. Two agents might duplicate work or produce conflicting outputs.

Define clear responsibilities upfront. Each agent should have a well-scoped purpose.

Ignoring Failure Scenarios

Orchestration workflows fail in ways single agents don't. What happens when Agent B needs Agent A's output but Agent A fails? Without explicit failure handling, workflows hang or cascade errors.

Design for failure from the start. Every coordination point needs a failure path.
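A failure path at a single coordination point might be sketched as retries with backoff followed by a fallback agent. The function name and parameters are hypothetical, and catching bare `Exception` is a simplification; real code would usually retry only on errors known to be transient.

```python
import time
from typing import Callable


def call_with_fallback(
    primary: Callable[[str], str],
    fallback: Callable[[str], str],
    payload: str,
    retries: int = 2,
    delay: float = 0.0,
) -> str:
    """Try the primary agent, retry with backoff, then use the fallback."""
    for attempt in range(retries + 1):
        try:
            return primary(payload)
        except Exception:
            if attempt < retries:
                # Exponential backoff between attempts: delay, 2*delay, 4*delay...
                time.sleep(delay * (2 ** attempt))
    try:
        return fallback(payload)
    except Exception as exc:
        # Surface one clear error instead of letting the workflow hang.
        raise RuntimeError("primary and fallback agents both failed") from exc
```

With the defaults, a primary agent that always fails is attempted three times before the fallback runs, so downstream agents either get an output or a single explicit error.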

Premature Optimization

Optimizing orchestration before understanding workload patterns wastes time. Teams build elaborate caching systems for workflows that run once per day, or optimize latency for batch processes where throughput matters more.

Profile first, optimize second.

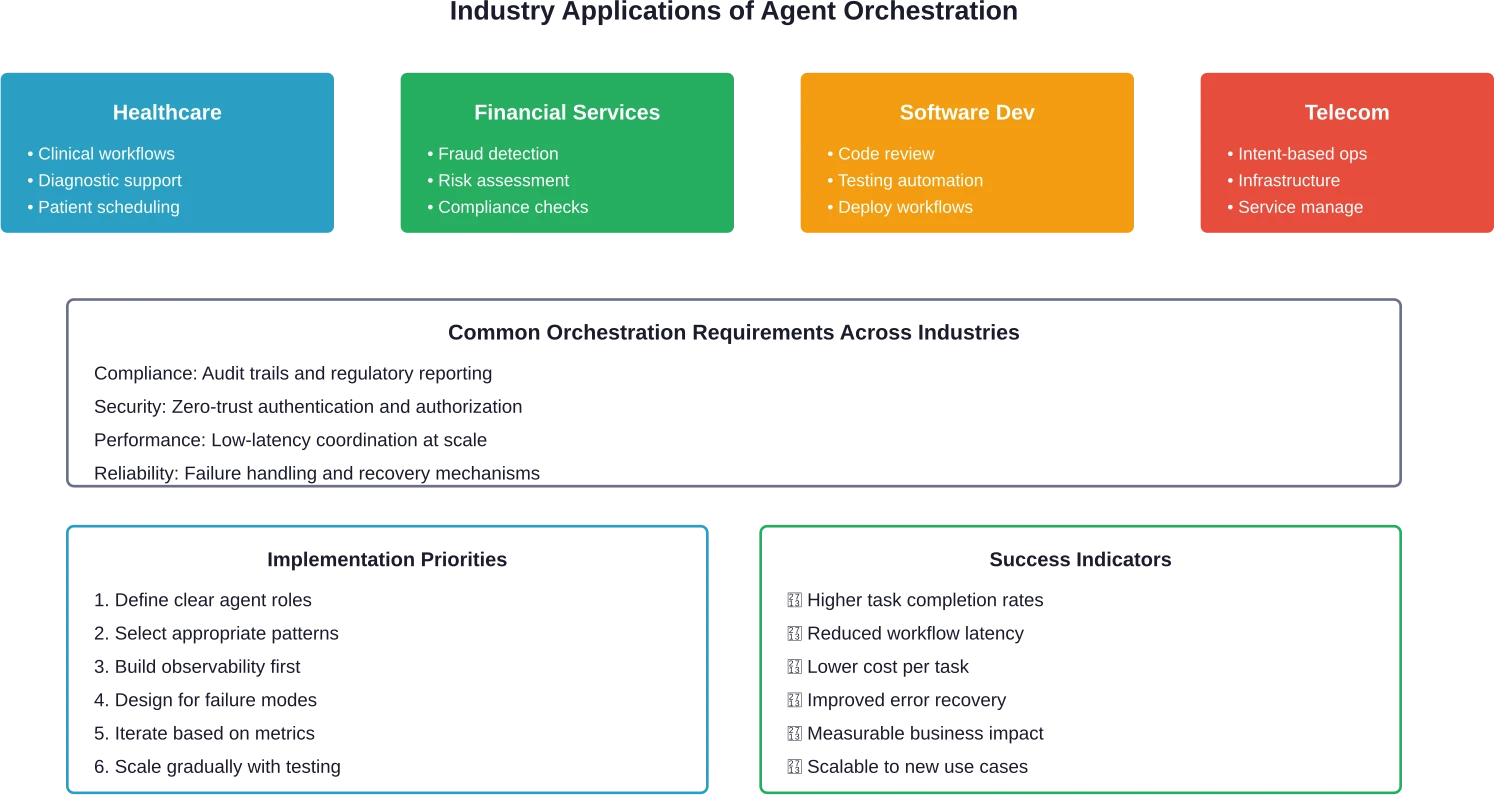

Getting Started: A Practical Path Forward

Organizations exploring orchestration should start with the right level of complexity.

Begin with a single use case that genuinely benefits from multi-agent coordination. Document processing, customer service routing, or data analysis pipelines often make good candidates.

Choose a pattern that fits the use case. Sequential for linear workflows. Concurrent for tasks with multiple independent components. Don't force a pattern because it seems sophisticated.

Pick a platform that matches current capabilities. Teams with strong Python skills might prefer LangGraph. Organizations heavily invested in AWS should consider Bedrock. Small teams needing quick wins might start with OpenAI's SDK.

Instrument thoroughly from day one. Without observability, debugging multi-agent systems becomes nearly impossible. Track agent calls, token usage, latency, and intermediate outputs.
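A lightweight starting point is a decorator that records every agent call. The in-memory `TRACE` list and the `traced` name are illustrative; a production setup would more likely send these records to a tracing backend and add token counts from the model response.

```python
import functools
import time

# In-memory trace log (an assumption for the sketch).
TRACE: list[dict] = []


def traced(agent_name: str):
    """Record status and latency for each call to the wrapped agent."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "error"
            try:
                result = fn(*args, **kwargs)
                status = "ok"
                return result
            finally:
                # Runs on success and on exceptions, so failures are logged too.
                TRACE.append({
                    "agent": agent_name,
                    "status": status,
                    "seconds": time.perf_counter() - start,
                })
        return wrapper
    return decorator


@traced("summarizer")
def summarize(text: str) -> str:
    """Hypothetical agent used to demonstrate the decorator."""
    return text[:5]
```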

Iterate based on real usage. Orchestration requirements become clear through practice. The first implementation will need adjustment.

| Stage | Focus | Key Activities | Success Metric |
|---|---|---|---|
| Pilot | Proof of concept | Single use case, simple pattern | Task completion |
| Expansion | Additional workflows | Multiple patterns, more agents | Cost efficiency |
| Production | Scale and reliability | Error handling, monitoring | System uptime |
| Optimization | Performance tuning | Caching, parallelization | Response time |

Build Multi-Agent Systems That Actually Work

Multi-agent setups fail when agents can’t share data, access the right systems, or stay in sync. Orchestration isn’t just about logic – it’s about integrating agents with your backend, APIs, and tools like CRM or ERP.

OSKI Solutions helps teams connect and run multiple AI agents inside real products. That includes API integration, backend development in .NET or Node.js, and setting up stable data flows so agents can operate together, not separately.

If you’re building multi-agent systems, talk to OSKI Solutions before scaling it.


The Role of Evolving Orchestration

Research from May 2025 on evolving orchestration (arXiv:2505.19591, revised October 2025) introduces an interesting concept: orchestration patterns that improve over time based on performance feedback.

Rather than static coordination rules, evolving orchestration adjusts how agents collaborate based on what works. If sequential execution consistently outperforms concurrent execution for a particular task type, the system learns that preference.

This approach reduces manual tuning but requires careful monitoring to prevent optimization toward local maxima that miss better solutions.
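To illustrate the feedback loop and the local-maximum concern, the sketch below uses a generic epsilon-greedy selector. This is a textbook bandit technique chosen for clarity, not the method from the cited paper, and the class and pattern names are assumptions.

```python
import random


class PatternSelector:
    """Pick an orchestration pattern based on observed scores."""

    def __init__(self, patterns: list[str], epsilon: float = 0.1, seed=None):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.totals = {p: 0.0 for p in patterns}
        self.counts = {p: 0 for p in patterns}

    def average(self, pattern: str) -> float:
        n = self.counts[pattern]
        return self.totals[pattern] / n if n else 0.0

    def choose(self) -> str:
        # Explore occasionally so an early lucky pattern does not lock in.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.totals))
        return max(self.totals, key=self.average)

    def record(self, pattern: str, score: float) -> None:
        """Feed back a performance score for the pattern that was used."""
        self.totals[pattern] += score
        self.counts[pattern] += 1
```

Setting `epsilon` to zero makes the selector purely greedy, which is exactly the configuration that risks settling on a local maximum. A small nonzero value keeps testing the alternatives.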

Industry-Specific Applications

Different industries apply orchestration differently.

Healthcare systems use orchestration for clinical workflow management. Research from IEEE on autonomous agentic AI for clinical workflows demonstrates self-managing healthcare operations where agents handle appointment scheduling, diagnostic support, and treatment coordination.

Financial services deploy orchestration for fraud detection and risk assessment. Multiple specialized agents analyze different risk factors concurrently, and a coordinator agent makes final decisions based on aggregated analysis.

Software development teams use orchestration for automated testing, code review, and deployment workflows. Agents handle different aspects of the development lifecycle, coordinating through defined handoff points.

Telecommunications and infrastructure management apply orchestration for intent-based operations. According to IEEE research, agentic AI coordinates infrastructure and service orchestration based on high-level intent specifications rather than low-level configuration.

Future Directions in Agent Orchestration

The field continues evolving rapidly. Several trends merit attention.

Standardization efforts aim to create interoperable orchestration protocols. The Tool-Environment-Agent (TEA) Protocol developed by research teams provides one approach to standardizing how agents interact with tools and environments.

Performance convergence among base models shifts focus from model selection to orchestration quality. When GPT, Claude, and other models perform similarly on benchmarks, the differentiator becomes how well agents coordinate rather than which model powers them.

Human-AI team orchestration explores how manager agents coordinate mixed teams of human and AI workers. This represents a harder challenge than pure AI orchestration because human availability, preferences, and capabilities vary in ways that AI agents' do not.

Agentic web concepts suggest that internet infrastructure itself might evolve to prioritize agent interactions. According to IEEE Spectrum analysis published October 13, 2025, AI agents will increasingly redefine internet interactions, bringing both convenience and new security considerations.

Frequently Asked Questions

What's the difference between AI orchestration and AI agent orchestration?

AI orchestration coordinates models, pipelines, and data workflows. AI agent orchestration specifically manages autonomous agents that make decisions, use tools, and maintain state across interactions.

Do I need multiple AI models for agent orchestration?

No. You can use a single model with different roles, prompts, and contexts for each agent, or combine multiple models for specialized tasks depending on requirements.

How does agent orchestration handle errors when one agent fails?

Systems use retry logic, fallback agents, circuit breakers, and graceful degradation to ensure workflows continue even when individual agents fail.

Can agent orchestration work with existing business processes?

Yes. Existing workflows can be mapped into orchestration patterns. Sequential tasks, approvals, and automation flows can be enhanced with coordinated agent behavior.

What's the minimum team size needed to implement agent orchestration?

Small teams or even a single developer can start using managed tools. Larger enterprise implementations typically require cross-functional teams including engineering and DevOps.

How do you prevent agents from getting stuck in infinite loops?

Safeguards include iteration limits, execution timeouts, progress detection, and human approval checkpoints for critical actions.
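Three of these safeguards fit in one small loop runner. In this sketch, `step` is a hypothetical function that takes the current state and returns `(new_state, done)`; progress detection is approximated by checking whether the state changed. Human approval checkpoints are left out.

```python
import time
from typing import Any, Callable, Tuple


def run_with_guards(
    step: Callable[[Any], Tuple[Any, bool]],
    state: Any,
    max_iterations: int = 20,
    timeout_s: float = 5.0,
    patience: int = 3,
) -> Tuple[Any, str]:
    """Repeat `step` until it reports done or a safeguard trips.

    Returns the final state and the reason the loop stopped.
    """
    started = time.monotonic()
    stalled = 0
    for _ in range(max_iterations):           # Iteration limit.
        if time.monotonic() - started > timeout_s:
            return state, "timeout"           # Execution timeout.
        new_state, done = step(state)
        if done:
            return new_state, "done"
        # Progress detection: count consecutive steps that change nothing.
        stalled = stalled + 1 if new_state == state else 0
        if stalled >= patience:
            return new_state, "no_progress"
        state = new_state
    return state, "iteration_limit"
```

Returning the stop reason alongside the state lets the orchestrator decide whether to retry, escalate to a human, or fail the workflow.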

Is agent orchestration more expensive than single-agent approaches?

Initially yes, but optimized orchestration can reduce overall costs through task specialization, caching, and parallel execution, improving efficiency over time.

Taking the Next Step

AI agent orchestration represents more than a technical architecture pattern. It's a fundamental shift in how organizations approach complex problems.

Single agents hit capability ceilings fast. Orchestrated agent systems break through those limits by combining specialization with coordination. The research is clear—from arXiv papers on multi-agent systems to enterprise adoption tracked by MIT Sloan Management Review—orchestration is moving from experimental to production.

But complexity matters. Teams that succeed start simple, measure carefully, and scale based on evidence. They choose orchestration patterns that fit actual requirements rather than chasing sophistication.

The platforms exist. The patterns are documented. The challenges are understood.

What remains is execution. Organizations that master agent orchestration will build AI systems that handle the messy, multi-faceted problems that define real business value. Those that don't will struggle to move beyond basic automation.

The opportunity window is open. Early movers gain experience while the field still evolves. But the window won't stay open forever—as orchestration matures, competitive advantage will shift from implementation capability to optimization mastery.

Start exploring orchestration with a concrete use case. Build something small that solves a real problem. Learn what works in practice, not just theory.

The next stage of AI isn't bigger models. It's smarter coordination.