AI Agent Frameworks: Production Guide for 2026

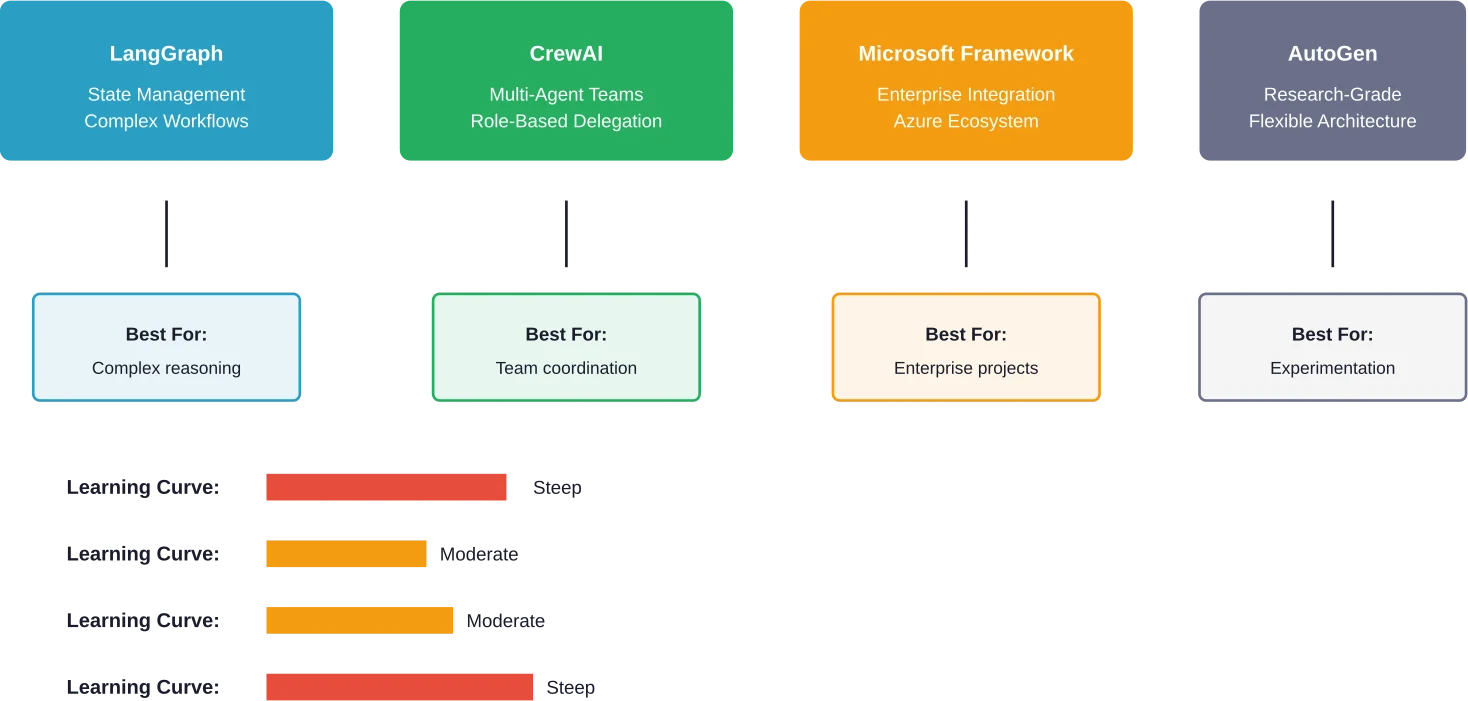

Quick Summary: AI agent frameworks are software platforms that provide standardized components, abstractions, and orchestration mechanisms to simplify building autonomous AI systems. Popular frameworks like LangGraph, CrewAI, AutoGen, and Microsoft Agent Framework offer different strengths for production deployment, from state management to multi-agent collaboration. Choosing the right framework depends on your technical requirements, team expertise, integration needs, and whether you need single-agent or multi-agent capabilities.

The explosion of large language models has triggered a gold rush in AI agent development. But here's the thing—building a demo that works in your Jupyter notebook is dramatically different from shipping an agent that handles real user queries without calling the same API three times in a row.

AI agent frameworks promise to solve this problem. They're the building blocks that help developers create, deploy, and manage autonomous AI systems without reinventing the wheel. According to research from arXiv analyzing AI agent systems, these frameworks combine foundation models with reasoning, planning, memory, and tool use to create practical interfaces between natural-language intent and real-world actions.

The challenge? There are dozens of frameworks now, each claiming to be production-ready. Some live up to the hype. Others don't survive contact with actual users.

What AI Agent Frameworks Actually Do

Think of agent frameworks as software toolkits that provide standardized components for building AI agents. They handle the repetitive infrastructure work so developers can focus on agent behavior and business logic.

These frameworks typically include several core components. There's the orchestration layer that manages conversation flow and decision-making. Memory systems store context across interactions. Tool integration allows agents to call external APIs, databases, or services. Model abstraction lets teams swap between different LLMs without rewriting code.
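To make the four components concrete, here is a minimal sketch in plain Python. It is not taken from any framework: `StubModel`, `Memory`, and `Agent` are hypothetical names, and the "model" is a rule-based stand-in for an LLM client.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


class Model(Protocol):
    """Model abstraction: anything with complete() can be swapped in."""

    def complete(self, prompt: str) -> str: ...


class StubModel:
    """Stand-in for an LLM client (assumption: a real one would call an API)."""

    def complete(self, prompt: str) -> str:
        # Request a tool when the latest message mentions weather.
        last_line = prompt.splitlines()[-1]
        return "TOOL:weather" if "weather" in last_line.lower() else "ANSWER:ok"


@dataclass
class Memory:
    """Memory system: keeps context across turns."""

    turns: list[str] = field(default_factory=list)

    def add(self, text: str) -> None:
        self.turns.append(text)

    def as_prompt(self) -> str:
        return "\n".join(self.turns)


@dataclass
class Agent:
    """Orchestration layer: decides between answering and calling a tool."""

    model: Model
    tools: dict[str, Callable[[], str]]
    memory: Memory = field(default_factory=Memory)

    def handle(self, user_input: str) -> str:
        self.memory.add(f"user: {user_input}")
        decision = self.model.complete(self.memory.as_prompt())
        kind, _, value = decision.partition(":")
        if kind == "TOOL" and value in self.tools:
            reply = self.tools[value]()  # tool integration
        else:
            reply = value
        self.memory.add(f"agent: {reply}")
        return reply


agent = Agent(model=StubModel(), tools={"weather": lambda: "sunny"})
```

Because `Agent` only depends on the `complete()` method, replacing `StubModel` with a wrapper around a real provider would not require changes to the orchestration code.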

According to NIST's AI Agent Standards Initiative announced in February 2026, ensuring trusted, interoperable, and secure agentic systems requires standardization across these architectural components. The initiative addresses growing concerns about agent reliability and cross-platform compatibility.

Real-world applications span multiple industries. Financial institutions deploy agents that monitor fraudulent transactions. Supply chain systems use multi-agent frameworks to track inventory and forecast demand. Customer support teams build agents that resolve queries end-to-end.

But not all frameworks handle these scenarios equally well.

The Production-Ready Frameworks

Community discussions consistently highlight a few frameworks that actually survive deployment. Based on developer experiences shared across technical forums, here's what works.

LangGraph: State Management Done Right

LangGraph, built by the LangChain team, treats agent workflows as state machines. This architectural choice makes debugging significantly easier compared to frameworks where agent behavior feels like a black box.

The framework excels at complex, multi-step reasoning tasks. When agents need to backtrack, retry, or branch based on intermediate results, LangGraph's graph-based approach provides explicit control. Developers define nodes (agent actions) and edges (transitions between actions), creating workflows that are both powerful and maintainable.
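The node-and-edge idea can be sketched without any framework at all. The following is plain Python, not LangGraph's API; the node names, the `quality` rule, and the `run` helper are all assumptions made for illustration.

```python
from typing import Callable, Optional

State = dict
Node = Callable[[State], State]


def draft(state: State) -> State:
    attempts = state.get("attempts", 0) + 1
    # Hypothetical rule: the draft only becomes good enough on the second try.
    return {**state, "attempts": attempts, "quality": 0.4 * attempts}


def review(state: State) -> State:
    return {**state, "approved": state["quality"] >= 0.7}


def publish(state: State) -> State:
    return {**state, "published": True}


NODES: dict[str, Node] = {"draft": draft, "review": review, "publish": publish}

# Each edge maps the current state to the next node name, or None to stop.
EDGES: dict[str, Callable[[State], Optional[str]]] = {
    "draft": lambda s: "review",
    "review": lambda s: "publish" if s["approved"] else "draft",  # branch or retry
    "publish": lambda s: None,
}


def run(start: str, state: State, max_steps: int = 20) -> tuple[State, list[str]]:
    """Walk the graph from `start`, recording the path taken."""
    path: list[str] = []
    current: Optional[str] = start
    while current is not None:
        if len(path) >= max_steps:
            raise RuntimeError("step limit reached")
        path.append(current)
        state = NODES[current](state)
        current = EDGES[current](state)
    return state, path


final_state, path = run("draft", {})
```

The recorded `path` is what makes this style easy to debug: a failed run shows exactly which transition was taken and what the state looked like at that point.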

Integration with the broader LangChain ecosystem means access to hundreds of pre-built tool integrations. The learning curve is steeper than simpler frameworks, but that complexity buys you production reliability.

CrewAI: Multi-Agent Collaboration Simplified

CrewAI focuses specifically on orchestrating teams of specialized agents. Instead of building one mega-agent that tries to do everything, teams create focused agents with specific roles and expertise.

The framework handles inter-agent communication and task delegation automatically. A manager agent can coordinate specialist agents for research, writing, and fact-checking without developers manually wiring up every interaction.
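As a rough picture of what that delegation involves, here is a framework-free sketch. It does not use CrewAI's API; `Specialist`, `Manager`, and the lambda "agents" are hypothetical stand-ins for LLM-backed roles.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Specialist:
    role: str
    work: Callable[[str], str]  # stand-in for an LLM-backed agent


@dataclass
class Manager:
    team: dict[str, Specialist]

    def run(self, topic: str, plan: list[str]) -> list[tuple[str, str]]:
        """Delegate each plan step to the matching specialist,
        passing the previous output forward."""
        transcript: list[tuple[str, str]] = []
        payload = topic
        for role in plan:
            payload = self.team[role].work(payload)
            transcript.append((role, payload))
        return transcript


team = {
    "research": Specialist("research", lambda t: f"notes on {t}"),
    "writing": Specialist("writing", lambda notes: f"draft from {notes}"),
    "fact_check": Specialist("fact_check", lambda d: f"verified: {d}"),
}
manager = Manager(team)
transcript = manager.run("agent frameworks", ["research", "writing", "fact_check"])
```

A real multi-agent framework adds planning, retries, and message formats on top, but the core contract is the same: the manager owns the plan and each specialist sees only its own input.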

This approach mirrors how human teams actually work. For projects requiring diverse capabilities—content creation pipelines, research workflows, data analysis sequences—CrewAI's abstraction level hits a sweet spot between flexibility and ease of use.

Microsoft Agent Framework: Enterprise Integration

Microsoft's framework, supporting both .NET and Python, targets enterprises already invested in Azure infrastructure. According to the official documentation, the framework provides standardized components for building agents, workflows, and multi-agent systems with deep Azure OpenAI integration.

The selling point here is enterprise readiness. Authentication, logging, monitoring, and deployment pipelines integrate with existing Microsoft tooling. For organizations standardizing on Azure, this framework reduces integration friction significantly.

Microsoft's framework supports multiple model providers—Azure OpenAI, OpenAI, Anthropic, and Ollama—giving teams model flexibility without vendor lock-in.

AutoGen: Research-Grade Multi-Agent Systems

AutoGen, developed by Microsoft Research, pioneered conversational multi-agent architectures. Agents engage in back-and-forth dialogues to solve problems collaboratively.

The framework shines in research and experimentation. Its flexibility allows for novel agent interaction patterns that more opinionated frameworks don't support. Academic papers analyzing agent architectures frequently use AutoGen as a baseline.

But that flexibility comes with complexity. Production deployment requires more infrastructure work compared to frameworks with batteries-included deployment tools.

Choosing the Right Framework for Your Project

Framework selection isn't about picking the "best" option. It's about matching capabilities to requirements.

Start with the agent complexity needed. Single-agent systems handling straightforward tasks don't need heavyweight multi-agent orchestration. A customer support bot answering FAQs works fine with simpler frameworks or even direct LLM API calls with function calling.
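For the "direct API calls with function calling" route, most of the work is a small dispatcher that validates what the model asks for. The sketch below assumes a JSON tool-call shape with `name` and `arguments` keys, loosely modelled on common function-calling payloads; `get_order_status` and its data are hypothetical.

```python
import json


def get_order_status(order_id: str) -> str:
    # Hypothetical lookup; a real bot would query an order system.
    return {"A1": "shipped"}.get(order_id, "unknown")


# Only functions registered here can ever be invoked by the model.
TOOLS = {"get_order_status": get_order_status}


def dispatch(tool_call_json: str) -> str:
    """Run the tool named in a model-produced tool call, with basic validation."""
    call = json.loads(tool_call_json)
    name = call.get("name")
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    try:
        return TOOLS[name](**call.get("arguments", {}))
    except TypeError as exc:  # wrong or missing arguments
        return f"error: bad arguments for {name}: {exc}"


# Assumed shape of what the model returns when it wants a tool.
model_output = '{"name": "get_order_status", "arguments": {"order_id": "A1"}}'
result = dispatch(model_output)
```

Returning error strings instead of raising lets the error be fed back to the model as a tool result, which is one common way to let it correct its own call.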

Multi-step workflows with branching logic, error recovery, and state persistence benefit from frameworks like LangGraph. When the agent needs to remember what happened three steps ago and adjust its approach accordingly, explicit state management becomes critical.

Team expertise matters more than marketing claims. A framework built around graph-based workflows won't help if the team hasn't built with those abstractions before. CrewAI's role-based approach maps naturally to how non-technical stakeholders think about agent systems, reducing communication friction.

Integration requirements often dictate the shortlist. Teams already running Azure infrastructure gain immediate value from Microsoft's framework. Organizations using specific observability tools need frameworks with compatible instrumentation hooks.

According to empirical research on agent frameworks, more than 80% of developers report difficulties in identifying the frameworks that best meet their specific development requirements. The ecosystem is evolving rapidly, and documentation quality varies significantly.

| Framework | Best Use Case | Model Support | Deployment Complexity |
|---|---|---|---|
| LangGraph | Complex reasoning workflows | Any LLM via LangChain | Moderate |
| CrewAI | Multi-agent collaboration | OpenAI, Anthropic, open models | Low |
| Microsoft Agent Framework | Enterprise Azure projects | Azure OpenAI, OpenAI, Anthropic, Ollama | Low (with Azure) |
| AutoGen | Research and experimentation | OpenAI, custom models | High |

Put AI Agent Frameworks Into Production

Frameworks help structure agents, but production issues usually come from everything around them – integrations, data handling, and how agents connect to existing systems.

OSKI Solutions focuses on implementing AI integrations within real products. They use .NET, Node.js, and Python to connect agent frameworks with CRM, ERP, and other business tools, handling APIs, backend logic, and cloud environments on Azure or AWS. The work is centered on making frameworks usable in day-to-day operations, not just development setups.

If you’re planning to move an AI agent framework into production, it’s worth aligning the implementation with OSKI Solutions early on.

OpenAI's Agent Capabilities

OpenAI introduced AgentKit, providing modular tools for building and deploying agents. According to the official documentation, AgentKit includes Agent Builder (a visual canvas with starter templates), deployment tools like ChatKit, and monitoring capabilities.

In July 2025, OpenAI announced ChatGPT agent functionality, enabling the model to handle complex end-to-end tasks using its own computer. The system can manage requests like analyzing calendar events, researching topics, creating presentations, and making bookings—all with user guidance but autonomous execution.

OpenAI's approach differs from traditional frameworks. Rather than providing infrastructure for developers to build custom agents, they're creating agent capabilities directly within ChatGPT. Developers can extend these with custom tools and skills through the API, but the core orchestration happens within OpenAI's platform.

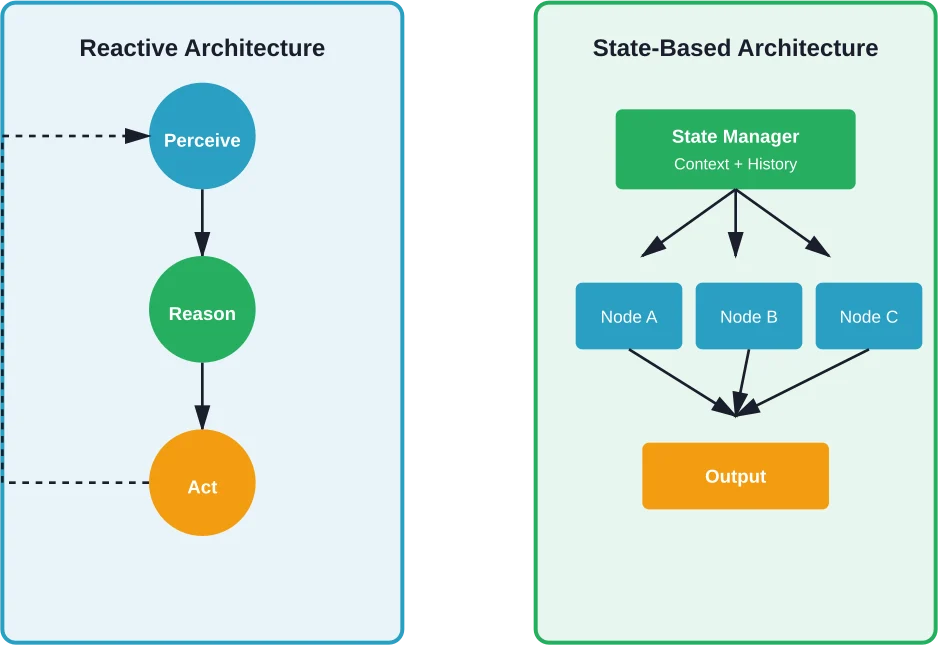

Framework Architecture Patterns

Understanding architectural patterns helps predict how frameworks will behave under production load.

Reactive architectures follow a perception-reasoning-action loop. The agent observes its environment (user input, tool results), reasons about the next step, and executes an action. This pattern works well for straightforward tasks but can spiral into inefficient loops without guardrails such as step limits or repeated-call detection.
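A minimal sketch of that loop with two guardrails might look like the following. The `decide` callback stands in for the LLM's reasoning step, and the function name, return strings, and limits are all illustrative assumptions.

```python
from typing import Callable


def run_reactive_agent(
    decide: Callable[[list], tuple],
    tools: dict,
    max_steps: int = 5,
) -> str:
    """Perception-reasoning-action loop with a step limit and repeat detection."""
    observations: list = []
    seen_calls: set = set()
    for _ in range(max_steps):
        action, arg = decide(observations)  # reasoning
        if action == "finish":
            return arg
        if (action, arg) in seen_calls:  # guardrail: identical call repeated
            return "stopped: repeated tool call"
        seen_calls.add((action, arg))
        observations.append(tools[action](arg))  # action, then perception
    return "stopped: step limit reached"  # guardrail: bounded number of steps


def good_decide(observations: list) -> tuple:
    if not observations:
        return ("search", "price")
    return ("finish", f"answer: {observations[-1]}")


def stuck_decide(observations: list) -> tuple:
    return ("search", "price")  # never makes progress


tools = {"search": lambda q: f"result for {q}"}
ok = run_reactive_agent(good_decide, tools)
stuck = run_reactive_agent(stuck_decide, tools)
```

The repeat check catches the "same API three times in a row" failure early, while the step limit bounds cost even when every call is slightly different.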

State-based architectures explicitly model agent state across interactions. LangGraph exemplifies this approach. Every decision point and context variable lives in a managed state graph, making debugging and testing dramatically easier.

Multi-agent architectures distribute work across specialized agents. CrewAI and AutoGen both implement this pattern, though with different communication models. CrewAI uses hierarchical delegation, while AutoGen enables more flexible peer-to-peer agent conversations.

According to arXiv research on agent architecture evaluation methods, understanding component interactions—planners, memory systems, and tool routers—is critical for diagnostic evaluation. Over 66% of studies rely almost exclusively on high-level accuracy or success-rate measures, missing architectural insights that explain failure modes.

Production Deployment Considerations

Getting agents from development to production requires addressing challenges that demos conveniently ignore.

Cost control tops the list. Agents making dozens of LLM calls per user interaction can rack up API costs quickly. Frameworks need built-in mechanisms for limiting call depth, caching repeated queries, and batching operations where possible.
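Two of those mechanisms, a call limit and a cache for repeated queries, fit in a small wrapper. This is a sketch under simple assumptions: `BudgetedModel` is a hypothetical name, the cache is keyed on the exact prompt string, and the wrapped model is a stub.

```python
from typing import Callable


class BudgetExceeded(RuntimeError):
    """Raised when an interaction would exceed its model-call budget."""


class BudgetedModel:
    def __init__(self, call_model: Callable[[str], str], max_calls: int) -> None:
        self._call_model = call_model
        self._max_calls = max_calls
        self._cache: dict[str, str] = {}
        self.calls_made = 0

    def complete(self, prompt: str) -> str:
        if prompt in self._cache:  # repeated query: no new spend
            return self._cache[prompt]
        if self.calls_made >= self._max_calls:
            raise BudgetExceeded(f"limit of {self._max_calls} model calls reached")
        self.calls_made += 1
        result = self._call_model(prompt)
        self._cache[prompt] = result
        return result


# Stub model for illustration; a real one would call a provider API.
model = BudgetedModel(call_model=lambda prompt: prompt.upper(), max_calls=2)
first = model.complete("summarize ticket 1")
again = model.complete("summarize ticket 1")  # served from cache
```

Exact-match caching only helps when prompts repeat verbatim, so it tends to pay off for deterministic sub-steps rather than free-form user input.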

Reliability means handling partial failures gracefully. When an external API times out mid-workflow, does the agent retry intelligently or start from scratch? Does it inform users about the delay or leave them wondering if it's stuck?
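One way to answer both questions is to checkpoint completed steps and retry only the step that failed, while telling the user what is happening. The sketch below assumes steps are plain callables and that timeouts surface as `TimeoutError`; `run_workflow` and its parameters are hypothetical.

```python
import time
from typing import Callable


def run_workflow(
    steps: list[tuple[str, Callable[[], str]]],
    checkpoint: dict[str, str],
    max_retries: int = 3,
    base_delay: float = 0.0,
    notify: Callable[[str], None] = print,
) -> dict[str, str]:
    """Run named steps in order, skipping any already stored in the checkpoint."""
    for name, step in steps:
        if name in checkpoint:
            continue  # finished in an earlier run: do not start from scratch
        for attempt in range(1, max_retries + 1):
            try:
                checkpoint[name] = step()
                break
            except TimeoutError:
                if attempt == max_retries:
                    raise
                notify(f"{name} timed out, retrying ({attempt}/{max_retries})")
                time.sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
    return checkpoint


calls = {"lookup": 0, "fetch": 0}


def lookup() -> str:
    calls["lookup"] += 1
    return "customer-42"


def fetch() -> str:
    calls["fetch"] += 1
    if calls["fetch"] == 1:  # simulate one upstream timeout
        raise TimeoutError("upstream API timed out")
    return "invoice"


messages: list[str] = []
state = run_workflow(
    [("lookup", lookup), ("fetch", fetch)], checkpoint={}, notify=messages.append
)
```

In production the checkpoint would live in durable storage rather than a dict, and `base_delay` would be non-zero; both are left trivial here so the example runs instantly.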

Observability tools integrated into the framework make troubleshooting feasible. Logging every LLM call, tool invocation, and state transition provides the audit trail needed to understand why an agent behaved unexpectedly.
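When a framework does not provide this out of the box, a thin decorator over the standard `logging` module gets surprisingly far. The sketch below is one possible shape; the `traced` decorator, the logger name, and the JSON fields are assumptions, not a standard.

```python
import functools
import json
import logging
import time

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("agent.trace")


def traced(kind: str):
    """Log one structured record per call: kind, name, status, duration."""

    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "ok"
            try:
                return fn(*args, **kwargs)
            except Exception:
                status = "error"
                raise
            finally:
                logger.info(
                    json.dumps(
                        {
                            "kind": kind,
                            "name": fn.__name__,
                            "status": status,
                            "duration_ms": round(
                                (time.perf_counter() - start) * 1000, 2
                            ),
                        }
                    )
                )

        return wrapper

    return decorator


@traced("tool")
def search(query: str) -> str:
    return f"results for {query}"


@traced("llm")
def call_model(prompt: str) -> str:
    return "stub completion"  # placeholder for a real provider call
```

Emitting one JSON object per event keeps the trail machine-readable, so the same records can feed dashboards, cost reports, and post-incident analysis.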

According to OpenAI's testing guidance for agent skills, systematic evaluation is critical for improvement. Running evals—automated test suites that score agent behavior against expected outcomes—helps teams iterate without manual testing bottlenecks. OpenAI recommends continuing optimization until reaching quality thresholds above 80% positive feedback or hitting diminishing returns.
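An eval suite in its simplest form is a list of prompts with pass/fail checks and a score. The sketch below is a generic illustration, not OpenAI's tooling; `EvalCase`, `run_evals`, and the toy agent are hypothetical, and reusing 0.8 as a pass-rate threshold is an assumption borrowed from the feedback figure above.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    check: Callable[[str], bool]  # returns True when the output is acceptable


def run_evals(agent: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Return the fraction of cases whose check passes."""
    passed = sum(1 for case in cases if case.check(agent(case.prompt)))
    return passed / len(cases)


def toy_agent(prompt: str) -> str:
    # Stand-in for a real agent: it only knows one answer about orders.
    return "Your order has shipped." if "order" in prompt else "I am not sure."


cases = [
    EvalCase("Where is my order?", lambda out: "shipped" in out),
    EvalCase("Cancel my order", lambda out: "cancel" in out.lower()),
    EvalCase("What is your name?", lambda out: "not sure" in out),
    EvalCase("order status please", lambda out: "shipped" in out),
    EvalCase("track order 7", lambda out: "shipped" in out),
]

THRESHOLD = 0.8  # assumed quality bar
score = run_evals(toy_agent, cases)
meets_threshold = score > THRESHOLD
```

Here the cancellation case fails, so the score sits exactly at the bar rather than above it. That is the signal to keep iterating, and the failing case says where.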

Common Framework Limitations

No framework solves every problem. Understanding limitations prevents expensive mistakes.

Learning curves vary dramatically. Frameworks offering maximum flexibility often require significant upfront investment. Teams that have not worked with graph or state-machine abstractions will likely find state-based architectures harder at first. The tradeoff between power and accessibility is real.

Performance optimization capabilities differ. Some frameworks provide granular control over parallel execution, streaming responses, and resource allocation. Others offer simplicity at the cost of performance tuning options.

Developer discussions analyzed across multiple platforms reveal that different frameworks encounter similar problems during use. Recurring issues include debugging difficulty, integration friction with existing systems, and documentation gaps around advanced features.

Maintainability—how easily developers can update and extend both the framework and agents built upon it—varies significantly. Frameworks with active communities, comprehensive docs, and stable APIs reduce long-term maintenance burden.

The Evaluation Challenge

Measuring agent performance is harder than traditional software metrics suggest.

Task completion rates only tell part of the story. An agent might technically complete a task while taking an inefficient path, making unnecessary API calls, or providing a poor user experience.

Research from arXiv analyzing enterprise agent benchmarks evaluated 18 distinct configurations across multiple models.

Architecture-aware metrics that evaluate component-level behavior provide better diagnostic insight than aggregate scores. Understanding whether failures stem from poor planning, inadequate memory, or incorrect tool selection enables targeted improvements.
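In practice this means tagging each failed run with the component that caused it and reporting that breakdown next to the aggregate score. The sketch below uses made-up trace records; the field names and component labels are assumptions, and attributing a failure to a component is taken as already done.

```python
from collections import Counter

# Hypothetical trace records, one per evaluated task.
traces = [
    {"task": "t1", "success": True, "failed_component": None},
    {"task": "t2", "success": False, "failed_component": "planner"},
    {"task": "t3", "success": False, "failed_component": "tool_router"},
    {"task": "t4", "success": False, "failed_component": "planner"},
    {"task": "t5", "success": True, "failed_component": None},
]


def summarize(records: list[dict]) -> dict:
    """Report the aggregate success rate alongside failures per component."""
    success_rate = sum(r["success"] for r in records) / len(records)
    failures = Counter(r["failed_component"] for r in records if not r["success"])
    return {
        "success_rate": success_rate,
        "failures_by_component": dict(failures),
    }


report = summarize(traces)
```

The aggregate number alone says the agent fails often; the breakdown says most failures come from planning, which points at where to spend effort first.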

According to research on agentic AI system assessment frameworks, evaluation must extend beyond task completion to include aspects like cost optimization and operational reliability—critical dimensions for real-world CloudOps scenarios.

Frequently Asked Questions

What's the difference between single-agent and multi-agent frameworks?

Single-agent frameworks are designed around one autonomous agent that handles tasks independently using reasoning and tools. Multi-agent frameworks coordinate multiple specialized agents that collaborate, delegate work, and communicate with each other. Single-agent systems are often better for focused use cases, while multi-agent systems are better suited for complex workflows that require different capabilities.

Can I switch frameworks after starting development?

Yes, but switching frameworks usually requires significant refactoring. State management, tool integrations, orchestration logic, and agent behavior are often tightly coupled to the chosen framework. Teams can reduce migration effort by separating business logic from framework-specific code and validating framework fit early through proof-of-concept builds.
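One way to keep that separation is to put business rules in plain functions and hide the framework behind a narrow interface. The sketch below is illustrative only; `AgentRuntime`, `FakeRuntime`, and the refund rule are hypothetical.

```python
from typing import Protocol


class AgentRuntime(Protocol):
    """The only surface the application code depends on."""

    def run(self, instruction: str) -> str: ...


def refund_policy(days_since_purchase: int) -> str:
    """Pure business rule: no framework imports."""
    return "refund" if days_since_purchase <= 30 else "store credit"


class FakeRuntime:
    """Test double; a real adapter would wrap a specific framework
    behind the same method."""

    def run(self, instruction: str) -> str:
        return f"[agent] {instruction}"


def handle_return(runtime: AgentRuntime, days: int) -> str:
    decision = refund_policy(days)
    return runtime.run(f"Tell the customer they will receive a {decision}.")


reply = handle_return(FakeRuntime(), days=45)
```

With this layout, a migration means writing one new adapter class, while `refund_policy` and its tests stay untouched.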

Which frameworks support the most LLM providers?

Many modern frameworks support multiple LLM providers through abstraction layers. LangGraph benefits from broad model integrations, while other frameworks support providers such as OpenAI, Anthropic, Azure OpenAI, and open-source models. The exact level of support depends on the framework and its current integrations.

How do frameworks handle agent cost control?

Frameworks typically manage costs through execution limits, caching, usage monitoring, retry controls, and model routing. Effective cost control also depends on prompt design, choosing the right model for each task, and monitoring token usage and workflow behavior over time.

Are open-source frameworks production-ready?

Yes, many open-source frameworks are production-ready when implemented correctly. Their success depends on engineering quality, infrastructure, observability, and team expertise rather than licensing alone. Open-source tools offer flexibility and transparency, while commercial platforms may provide managed services and support.

What skills do developers need to build with agent frameworks?

Developers typically need Python or .NET skills, understanding of LLM fundamentals, API integration experience, and familiarity with asynchronous programming. Knowledge of prompt engineering, orchestration patterns, error handling, and observability is also valuable for building reliable production systems.

How does NIST's AI Agent Standards Initiative impact framework development?

NIST's initiative is expected to influence how frameworks approach interoperability, security, and evaluation. As standards mature, framework developers will likely add more compatible interfaces and stronger safety features. This can make it easier for organizations to compare tools and build trusted production systems.

Moving Forward with Agent Frameworks

The agent framework landscape will continue evolving rapidly throughout 2026 and beyond. New frameworks emerge regularly, existing ones add capabilities, and best practices crystallize through production deployments.

Rather than chasing the newest framework, focus on fundamentals. Build with established frameworks that have active communities and proven production track records. Prioritize clear requirements over feature checklists. Invest in observability and testing infrastructure early—debugging production agent failures without proper instrumentation is nearly impossible.

Start small. Single-agent systems handling well-defined tasks provide learning opportunities without overwhelming complexity. Add multi-agent orchestration, advanced memory systems, and sophisticated tool integrations only when requirements demand them.

The frameworks that survive long-term will be those that balance power with usability, offer genuine production reliability, and build strong developer ecosystems. According to academic reviews of agent frameworks, the field is moving toward standardized abstractions that simplify development while maintaining flexibility for specialized use cases.

Ready to build production agents? Choose a framework aligned with your team's skills and project requirements. Start with focused use cases, instrument everything, and iterate based on real user feedback. The frameworks exist—success depends on disciplined implementation.