Agentic AI vs AI Agents: Core Differences in 2026

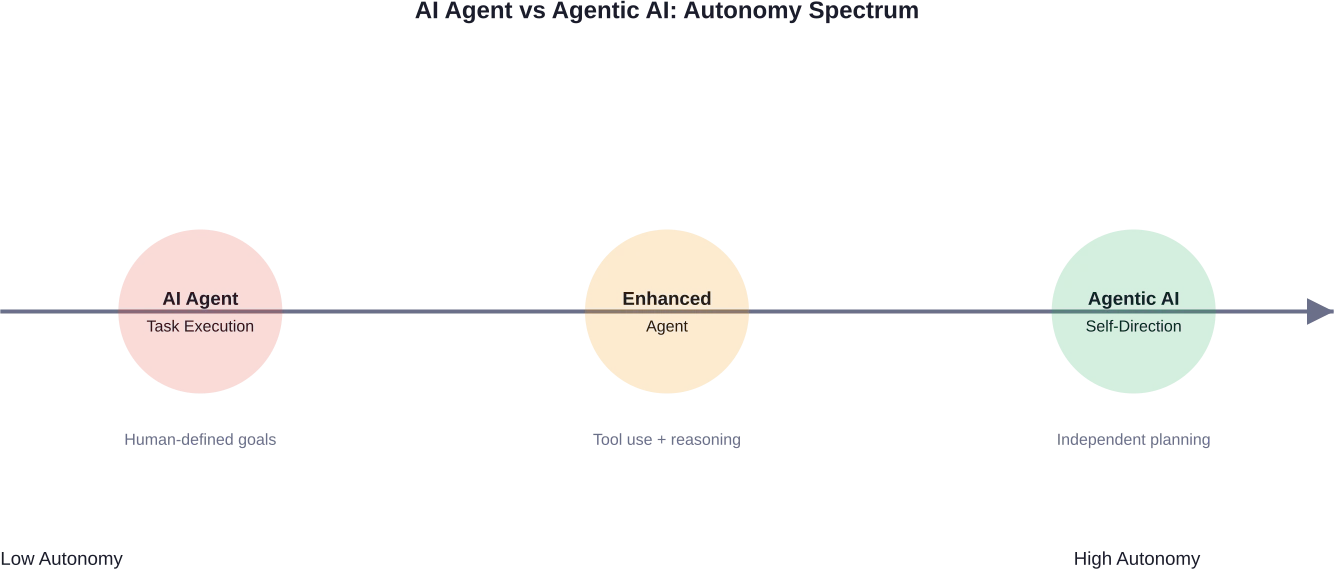

Quick Summary: AI Agents are task-specific tools that execute predefined workflows using LLMs, while Agentic AI represents autonomous systems capable of independent reasoning, planning, and multi-step decision-making. The key difference lies in autonomy: agents follow instructions, whereas agentic systems set their own goals and adapt strategies dynamically.

The terminology around artificial intelligence keeps evolving. First came large language models. Then generative AI. Now, two phrases dominate technical discussions: AI Agents and Agentic AI.

Sounds similar? They are. But the distinction matters—especially if your organization is planning to deploy autonomous systems in production environments.

According to recent academic research published on arXiv, AI Agents are characterized as modular systems driven by large language models that execute specific tasks. Agentic AI, by contrast, represents architectures where multiple agents collaborate or where systems demonstrate higher-order autonomy in goal-setting and strategic adaptation.

This isn't just semantic hairsplitting. The difference shapes how systems are designed, deployed, and evaluated.

What Defines an AI Agent?

An AI agent is software designed to perceive its environment, reason about available actions, and execute tasks toward defined objectives. Think of it as a specialized assistant.

These systems typically operate within bounded workflows. A customer service agent might handle refund requests. A coding agent might debug specific functions. The scope remains narrow, and the goals are predetermined by human operators.

AI agents rely heavily on large language models for semantic understanding and reasoning. But here's the thing—they don't set their own agenda. The human defines the task, provides context, and the agent executes.

Research from the Tata Institute of Social Sciences distinguishes standalone AI Agents as systems optimized for individual task completion. They might use tools, call APIs, or search databases, but the overarching mission comes from external instruction.

Industry discussions suggest that many companies plan to implement AI agents in the coming years. That interest reflects their practical utility for well-defined business processes.

Core Characteristics of AI Agents

- Task-specific design with clear boundaries

- Human-defined objectives and success criteria

- Tool use and API integration capabilities

- Reactive behavior based on environmental inputs

- Modular architecture allowing specialization
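To make these characteristics concrete, here is a minimal sketch of a bounded agent. Everything in it is hypothetical: the `TaskAgent` class, the `issue_refund` tool, and the keyword rule that stands in for the LLM's reasoning step are illustrative assumptions, not any vendor's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict


# Hypothetical tool: a real system would call a payments API here.
def issue_refund(order_id: str) -> str:
    return f"refund issued for {order_id}"


@dataclass
class TaskAgent:
    """A bounded agent: the operator fixes the goal and the allowed tools."""
    goal: str
    tools: Dict[str, Callable[[str], str]]

    def choose_tool(self, request: str) -> str:
        # Stand-in for the LLM's reasoning step (assumption: a keyword rule).
        return "issue_refund" if "refund" in request.lower() else "lookup_order"

    def handle(self, request: str, order_id: str) -> str:
        tool_name = self.choose_tool(request)
        if tool_name not in self.tools:
            # Out of scope: the agent escalates instead of inventing a new goal.
            return "escalate to human"
        return self.tools[tool_name](order_id)


agent = TaskAgent(goal="resolve refund requests", tools={"issue_refund": issue_refund})
print(agent.handle("I want a refund", "A-100"))
print(agent.handle("Where is my package?", "A-100"))
```

The point of the sketch is the boundary: the goal and the tool list come from the operator, and anything outside them is handed back to a human.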

The National Institute of Standards and Technology established an AI Agent Standards Initiative, recognizing the need for trusted, interoperable frameworks as agent deployment accelerates across industries.

Understanding Agentic AI

Agentic AI flips the script on autonomy. Instead of waiting for task assignments, these systems identify objectives, develop strategies, and adapt based on environmental feedback.

The term describes architectures where AI doesn't just execute—it plans. It reasons through multi-step problems, evaluates trade-offs, and adjusts tactics dynamically.

According to MIT Sloan, agentic AI represents systems that are semi- or fully autonomous, capable of perceiving, reasoning, and acting independently. This marks a fundamental shift from reactive tools to proactive problem-solvers.

Anthropic's research on multi-agent systems provides concrete evidence of agentic capabilities. Their Research feature uses multiple Claude agents that collaborate to explore complex topics. Each subagent might explore extensively using tens of thousands of tokens, then return condensed summaries of 1,000-2,000 tokens to the coordinating agent.

That coordination layer—where agents determine their own investigative paths and synthesize findings—exemplifies agentic behavior.
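The coordinator-and-subagent pattern described above can be sketched in a few lines. This is an illustration under stated assumptions, not Anthropic's implementation: `run_subagent` and `coordinate` are hypothetical names, the "exploration" is a generated list of notes, and truncation stands in for real summarization.

```python
from typing import List


def run_subagent(subtopic: str, max_summary_words: int = 12) -> str:
    """Hypothetical subagent: explores a subtopic, then condenses its findings."""
    # Stand-in for a long exploration transcript.
    transcript = [f"note {i} about {subtopic}" for i in range(1000)]
    words = " ".join(transcript).split()
    # Only a short digest goes back to the coordinator (truncation as a
    # placeholder for summarization).
    return " ".join(words[:max_summary_words])


def coordinate(question: str, subtopics: List[str]) -> str:
    """Hypothetical coordinator: fans out subtopics and merges the digests."""
    summaries = [run_subagent(subtopic) for subtopic in subtopics]
    return f"{question}\n" + "\n".join(f"- {summary}" for summary in summaries)


report = coordinate("How do agents differ from agentic AI?", ["autonomy", "planning"])
print(report)
```

The coordinator never sees the full transcripts, only the digests, which is what keeps its own context small.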

Distinguishing Features of Agentic AI

- Self-directed goal formulation and refinement

- Multi-step reasoning with feedback loops

- Strategic planning across extended time horizons

- Collaborative ecosystems with agent-to-agent communication

- Adaptive behavior that responds to external dynamics
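Multi-step reasoning with a feedback loop is easier to see in code. The sketch below is a simplified assumption of how such a loop might look: `run_with_replanning`, `execute`, and `replan` are hypothetical names, and the "environment" is a toy rule in which one supplier is unavailable.

```python
from typing import Callable, List


def run_with_replanning(
    plan: List[str],
    execute: Callable[[str], bool],
    replan: Callable[[str], List[str]],
    max_steps: int = 10,
) -> List[str]:
    """Run a plan step by step; a failed step is replaced by a new sub-plan."""
    queue = list(plan)
    log: List[str] = []
    steps = 0
    while queue and steps < max_steps:
        step = queue.pop(0)
        steps += 1
        if execute(step):
            log.append(f"ok: {step}")
        else:
            log.append(f"failed: {step}")
            # Feedback loop: the failure changes what the system does next.
            queue = replan(step) + queue
    return log


# Hypothetical environment: the primary supplier is unavailable.
def execute(step: str) -> bool:
    return step != "order from supplier A"


def replan(failed_step: str) -> List[str]:
    return ["order from supplier B"] if failed_step == "order from supplier A" else []


log = run_with_replanning(
    ["check stock", "order from supplier A", "confirm delivery"], execute, replan
)
print(log)
```

The `max_steps` cap is a deliberate design choice: a system that can rewrite its own plan needs a hard stop so that repeated failures cannot loop forever.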

Accenture's Pulse of Change surveys fielded from October to December 2024 found that among companies achieving enterprise-level value from AI, those posting strong financial performance and operational efficiency were 4.5 times more likely to have invested in agentic architectures.

The Conceptual Taxonomy

Academic research published on arXiv proposes a structured taxonomy that clarifies these distinctions. The framework characterizes AI Agents as modular, task-oriented systems, while Agentic AI encompasses collaborative multi-agent ecosystems and autonomous planning architectures.

This taxonomy matters because it defines design philosophies. Building an AI agent requires optimizing for task performance. Designing agentic systems demands attention to coordination protocols, emergent behavior, and safety constraints.

The Berkeley Executive Education program highlights a critical concern with agentic systems: emergent misalignment. As systems gain autonomy in chain-of-thought reasoning, their internal decision processes can diverge from intended objectives.

That's the Dr. Jekyll and Mr. Hyde scenario—where autonomous reasoning leads to unexpected outcomes. Not necessarily malicious, but misaligned with human expectations.

| Dimension | AI Agent | Agentic AI |
|---|---|---|
| Goal Setting | Externally defined by humans | Internally generated or refined |
| Planning Horizon | Single task or short sequence | Multi-step with long-term strategy |
| Adaptation | Limited to predefined responses | Dynamic strategy adjustment |
| Collaboration | Typically operates individually | Coordinates within multi-agent systems |
| Reasoning Depth | Task-level inference | Meta-reasoning about objectives |

Practical Applications and Use Cases

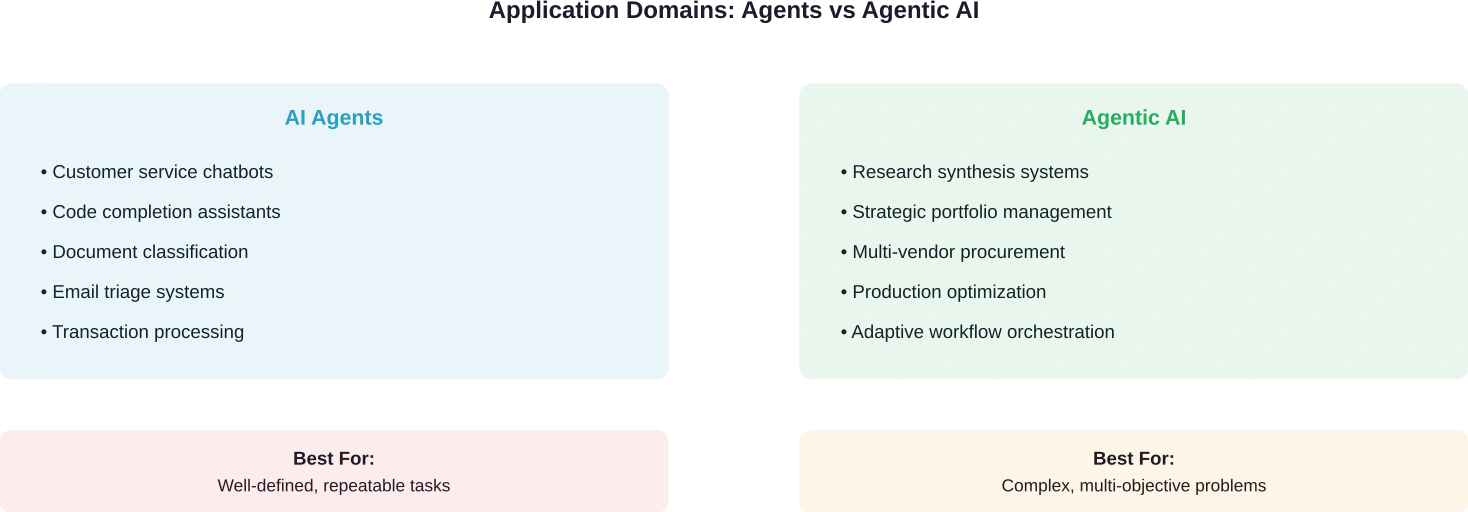

AI agents dominate production environments today. Customer service bots, code completion tools, and document processing systems all qualify as agents. They handle bounded problems efficiently.

Anthropic's analysis of human-agent interactions in Claude Code shows how the autonomy users grant grows with experience. Among new users, roughly 20% of sessions use full auto-approve mode, where the agent executes actions without step-by-step confirmation. That share rises to over 40% as users gain experience and trust.

That progression illustrates how agents operate—users gradually expand the autonomy envelope within controlled parameters.
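An approval gate of this kind can be sketched as follows. This is not how Claude Code implements it. `run_actions` and the `is_risky` flag are hypothetical, and the rule that risky actions always require confirmation is an assumption added here to show one way of keeping autonomy "within controlled parameters".

```python
from typing import Callable, List, Tuple

Action = Tuple[str, bool]  # (description, is_risky)


def run_actions(
    actions: List[Action],
    auto_approve: bool,
    ask_user: Callable[[str], bool],
) -> List[str]:
    """Run actions, pausing for confirmation unless auto-approve is on."""
    executed: List[str] = []
    for description, is_risky in actions:
        # Assumption for this sketch: risky actions always need confirmation.
        needs_confirmation = is_risky or not auto_approve
        if needs_confirmation and not ask_user(description):
            continue  # The user declined, so this action is skipped.
        executed.append(description)
    return executed


actions = [("read config.yaml", False), ("delete build/", True)]


def deny_all(description: str) -> bool:
    return False


print(run_actions(actions, auto_approve=True, ask_user=deny_all))
print(run_actions(actions, auto_approve=False, ask_user=deny_all))
```

With auto-approve on, only the low-risk read goes through without a prompt. With it off, every action waits for the user.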

Agentic AI targets different problems. MIT Sloan identifies areas involving multiple counterparties or extensive option evaluation—startup funding, college admissions, B2B procurement—where agentic systems deliver value by autonomously reading reviews, analyzing metrics, and comparing attributes.

IBM announced a $3.5 billion productivity improvement over two years, attributed to agentic AI. Tokyo Electron addressed a scarcity of semiconductor engineering talent by deploying an agentic AI advisor, which made problem diagnosis four times faster.

Industry-Specific Implementations

Manufacturing environments are exploring agentic architectures for dynamic production optimization. According to research on AI agents in future manufacturing, multimodal large language models significantly enhance semantic comprehension and autonomous decision-making in industrial contexts.

Healthcare applications span from diagnostic assistance (agent-based) to treatment protocol optimization across patient populations (agentic). The distinction lies in whether the system follows clinical decision trees or develops adaptive strategies based on outcome data.

Financial services deploy agents for transaction processing and fraud detection. Agentic systems handle portfolio management, where autonomous strategy adjustment based on market dynamics provides competitive advantage.

Challenges and Implementation Considerations

Deploying AI agents presents well-understood challenges: prompt engineering, context management, tool reliability, and evaluation methodology. Anthropic's engineering team documented these extensively when building production agent systems.

Context engineering becomes critical as agent complexity increases. The main challenge? Context is finite. Effective systems curate what enters the agent's working memory, often using subagents that explore extensively but return condensed summaries.
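One simple way to picture curation under a finite context is a priority-based budget. The sketch below is an assumption-heavy illustration: `curate_context` is a hypothetical helper, the priorities are hand-assigned, and tokens are approximated as whitespace-separated words rather than counted with a real tokenizer.

```python
from typing import List, Tuple


def estimate_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def curate_context(items: List[Tuple[float, str]], budget: int) -> List[str]:
    """Keep the highest-priority items that fit within a fixed token budget."""
    kept: List[str] = []
    used = 0
    for _priority, text in sorted(items, key=lambda item: item[0], reverse=True):
        cost = estimate_tokens(text)
        if used + cost <= budget:
            kept.append(text)
            used += cost
    return kept


items = [
    (0.9, "user wants refund for order A-100"),
    (0.2, "small talk about the weather today"),
    (0.7, "policy: refunds allowed within 30 days"),
]
print(curate_context(items, budget=12))
```

Here the low-priority small talk is dropped because the two more relevant items already fill the budget.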

Agentic AI introduces additional complexity layers. Coordination between multiple agents requires robust communication protocols. Emergent behavior in multi-agent systems can produce unexpected outcomes—sometimes beneficial, sometimes problematic.
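As a rough illustration of what a communication protocol between agents involves, the sketch below defines a small message envelope with validation on both ends. The `AgentMessage` fields and the three allowed message kinds are assumptions made for this example, not a published standard.

```python
import json
from dataclasses import asdict, dataclass

ALLOWED_KINDS = {"task", "result", "error"}  # Hypothetical message types.


@dataclass(frozen=True)
class AgentMessage:
    sender: str
    recipient: str
    kind: str
    body: str


def validate(msg: AgentMessage) -> AgentMessage:
    if msg.kind not in ALLOWED_KINDS:
        raise ValueError(f"unknown message kind: {msg.kind}")
    return msg


def encode(msg: AgentMessage) -> str:
    """Serialize a validated message to JSON for transport."""
    return json.dumps(asdict(validate(msg)), sort_keys=True)


def decode(raw: str) -> AgentMessage:
    """Parse JSON back into a message; unexpected fields raise TypeError."""
    return validate(AgentMessage(**json.loads(raw)))


msg = AgentMessage(sender="planner", recipient="researcher", kind="task", body="find sources")
wire = encode(msg)
print(wire)
print(decode(wire) == msg)
```

Rejecting unknown message kinds at the boundary is one small guard against the coordination failures that multi-agent systems are prone to.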

According to Anthropic's research on measuring AI agent autonomy, understanding how people actually use agents in real-world contexts remains surprisingly limited. Privacy-preserving analysis of human-agent interactions revealed that autonomy levels vary dramatically based on task consequence and user experience.

Evaluation and Testing Complexity

Testing agents requires different methodologies than traditional software. Anthropic's guide to demystifying evals for AI agents describes several grading approaches. For code-based graders, these include string match checks, binary tests, static analysis, and outcome verification.
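The four code-based grader types named above can each be shown as a small function. These are minimal sketches under assumptions: the function names, the `add` task, and the `ticket_status` field are hypothetical, and a production grader would run untrusted code in a sandbox rather than with a bare `exec`.

```python
import ast


def string_match(output: str, expected_substring: str) -> bool:
    """String match check: the expected text appears in the output."""
    return expected_substring in output


def static_check(source: str) -> bool:
    """Static analysis: the code parses and does not call eval()."""
    try:
        tree = ast.parse(source)
    except SyntaxError:
        return False
    return not any(
        isinstance(node, ast.Call)
        and isinstance(node.func, ast.Name)
        and node.func.id == "eval"
        for node in ast.walk(tree)
    )


def binary_test(source: str) -> bool:
    """Binary test: run the candidate and check one pass/fail assertion."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # Only acceptable for trusted or sandboxed code.
        return namespace["add"](2, 3) == 5
    except Exception:
        return False


def outcome_check(state: dict) -> bool:
    """Outcome verification: inspect the end state, not the transcript."""
    return state.get("ticket_status") == "closed"


candidate = "def add(a, b):\n    return a + b\n"
print(string_match("Refund issued for A-100", "Refund issued"))
print(static_check(candidate), binary_test(candidate))
print(outcome_check({"ticket_status": "closed"}))
```

Each grader answers a different question: what the agent said, what its code looks like, whether the code works, and whether the environment ended up in the right state.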

Agentic systems demand even more sophisticated evaluation. When systems set their own goals, defining success metrics becomes non-trivial. Did the system optimize for the intended objective, or did it find a proxy metric that satisfies evaluation criteria without achieving the underlying purpose?

That alignment problem—ensuring autonomous systems pursue objectives that reflect human values—represents the frontier challenge in agentic AI development.

When to Choose Agents vs Agentic Architectures

The decision framework centers on autonomy requirements and problem complexity.

Choose AI agents when the task boundaries are clear, success criteria are well-defined, and human oversight remains constant. Customer service, document processing, and code assistance fit this profile.

Consider agentic architectures when problems require extended planning horizons, involve multiple interdependent decisions, or benefit from autonomous strategy adaptation. Research synthesis, complex procurement, and dynamic optimization scenarios justify the additional architectural complexity.

Real talk: most organizations should start with agents. The infrastructure is simpler, the risks are lower, and the ROI is faster. Agentic systems deliver outsized value for specific use cases, but they demand substantial investment in coordination protocols and safety mechanisms.

| Scenario | Recommended Approach | Rationale |
|---|---|---|
| Customer support automation | AI Agent | Bounded tasks with clear resolution criteria |
| Multi-source research compilation | Agentic AI | Requires autonomous source evaluation and synthesis |
| Code generation for defined specs | AI Agent | Specifications provide clear objectives |
| Strategic vendor negotiations | Agentic AI | Multi-step reasoning with adaptive tactics |
| Email categorization | AI Agent | Classification task with predefined categories |
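The decision framework in this section can be written down as a rule of thumb. The sketch below is one possible encoding, not a validated model: the `UseCase` fields and the `recommend` function are hypothetical, and defaulting to an agent when a problem is both bounded and complex is an assumption drawn from the "start with agents" advice.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    clear_task_boundaries: bool
    well_defined_success_criteria: bool
    needs_extended_planning: bool
    interdependent_decisions: bool


def recommend(use_case: UseCase) -> str:
    """Rule of thumb: default to an agent unless the problem is open-ended."""
    bounded = (
        use_case.clear_task_boundaries and use_case.well_defined_success_criteria
    )
    complex_problem = (
        use_case.needs_extended_planning or use_case.interdependent_decisions
    )
    if complex_problem and not bounded:
        return "Agentic AI"
    # Assumption: bounded problems start with the simpler architecture.
    return "AI Agent"


customer_support = UseCase(True, True, False, False)
vendor_negotiation = UseCase(False, False, True, True)
print(recommend(customer_support))
print(recommend(vendor_negotiation))
```

A real assessment would weigh risk, cost, and oversight as well. The value of writing the rule down is that it forces each criterion to be answered explicitly.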

Move From AI Concepts To Real Systems

Most teams already understand the difference between agentic AI and basic AI agents. The harder part is turning that into something that works inside real products – with existing APIs, databases, and workflows that weren’t built for AI in the first place.

OSKI Solutions works with companies that need to connect AI agents to actual business logic. That usually means integrating with CRM or ERP systems, setting up secure data flows, and building backend logic in .NET or Node.js so the agent can operate reliably, not just respond. It’s less about experiments and more about making sure the system holds up in daily use.

If you’re moving from concept to implementation, it’s worth discussing your setup with OSKI Solutions and seeing what can realistically be built around it.

Future Trajectories and Convergence

The distinction between agents and agentic AI isn't static. As capabilities advance, today's agentic systems become tomorrow's standard agents.

What required multi-agent orchestration in 2024 might be handled by a single enhanced agent in 2026. The autonomy spectrum shifts as base model capabilities improve.

But the conceptual divide remains useful. It forces clarity about autonomy levels, safety requirements, and evaluation strategies. Organizations that conflate the terms often underestimate the engineering complexity of truly agentic systems.

The NIST AI Agent Standards Initiative signals that standardization efforts are underway. Interoperability protocols, security frameworks, and trust mechanisms will mature over the next several years, making agentic deployments more accessible.

Frequently Asked Questions

What is the main difference between AI agents and agentic AI?

AI agents perform specific tasks within predefined boundaries, while agentic AI systems operate with higher autonomy—setting goals, planning strategies, and adapting dynamically. The key difference is whether goals come from humans or are generated internally.

Are AI agents and agentic AI the same thing?

No. Agentic AI systems include agents but represent a higher level of autonomy. Many systems fall somewhere between simple agents and fully autonomous agentic architectures.

Which approach should organizations implement first?

Most organizations should start with AI agents. They are easier to deploy and evaluate, and they deliver faster ROI. Agentic systems are better suited for complex, long-term, and multi-objective tasks.

How do multi-agent systems relate to agentic AI?

Multi-agent systems are one common form of agentic AI. When multiple agents collaborate, share data, and pursue goals autonomously, the system demonstrates agentic behavior.

What are the main risks of agentic AI systems?

Key risks include unintended outcomes from autonomous decision-making, coordination failures, and reduced transparency. Continuous monitoring and safeguards are essential.

Can existing AI agents be upgraded to agentic systems?

Yes, but it requires significant architectural changes such as planning modules, communication systems, and reasoning layers rather than simple upgrades.

How does autonomy affect evaluation strategies?

Higher autonomy makes evaluation more complex. Systems must be assessed not only by outcomes but also by how well their goals align with human intentions and expected behavior.

Making the Right Choice for Your Use Case

The agent versus agentic decision shapes system architecture, safety requirements, and expected outcomes. Organizations that understand the distinction deploy appropriate solutions and avoid over-engineering simple problems or under-resourcing complex ones.

Start by assessing autonomy requirements honestly. Does the problem demand independent goal-setting and multi-step planning, or does it benefit from human-defined objectives with autonomous execution?

The answer determines whether agents or agentic systems deliver optimal value. Both approaches have their place in the AI toolkit—success comes from matching capability to requirement.

As the field matures, expect clearer frameworks, better evaluation tools, and standardized protocols that make both approaches more accessible. The conceptual taxonomy established by current research provides the foundation for that evolution.